Building a production agent system in 2026 means making a series of architectural decisions that compound. Each choice — how you model state, how you route tool calls, how you handle interruption and resumption — either opens doors or closes them three months later. This post is a technical walkthrough of the decisions we made building Convilyn's Goal Lane agent infrastructure, written for engineers who are thinking through the same problems.

To ground the discussion, we also studied how two of the most interesting open agent codebases — Anthropic's Claude Code and OpenAI's Codex CLI — solve the same problems. They make different tradeoffs in ways that are instructive. Links to the relevant open-source sections are included throughout.

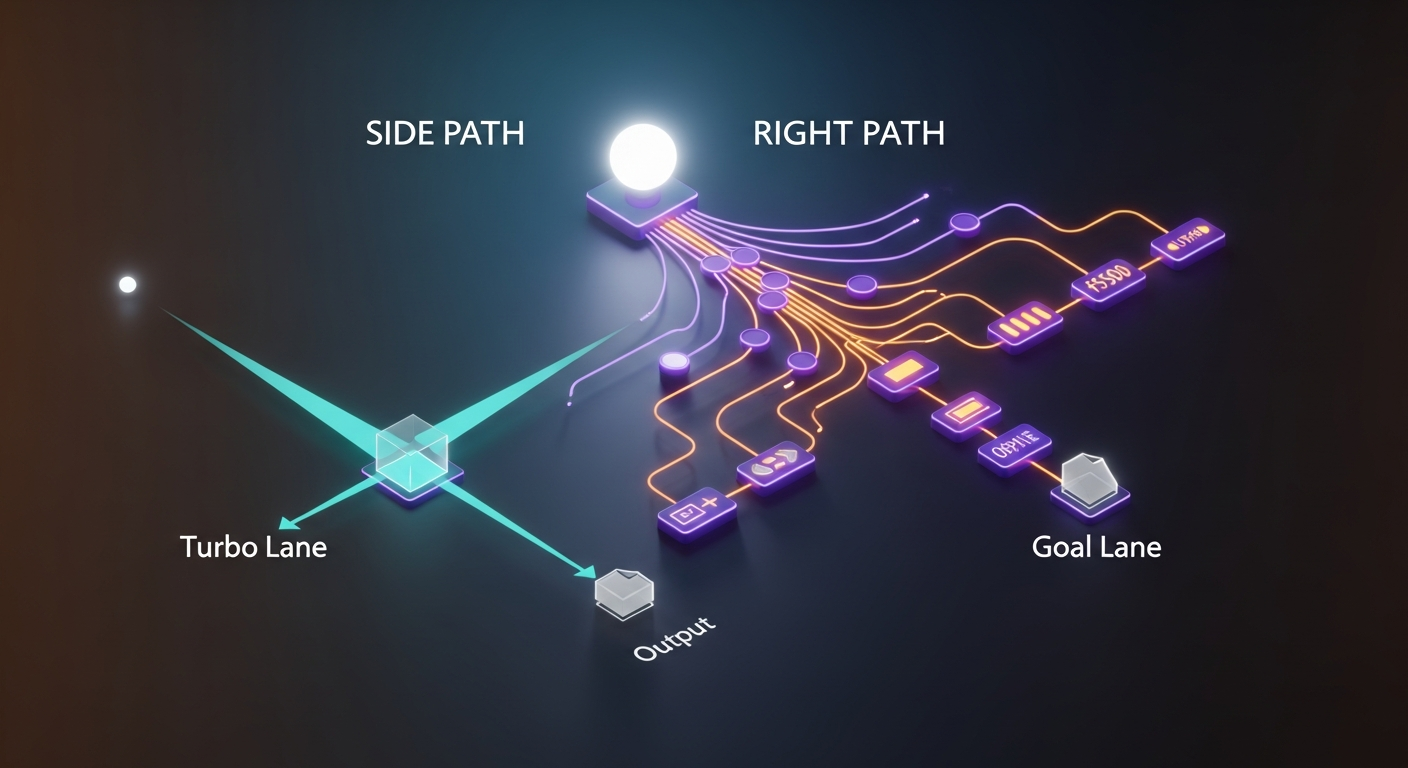

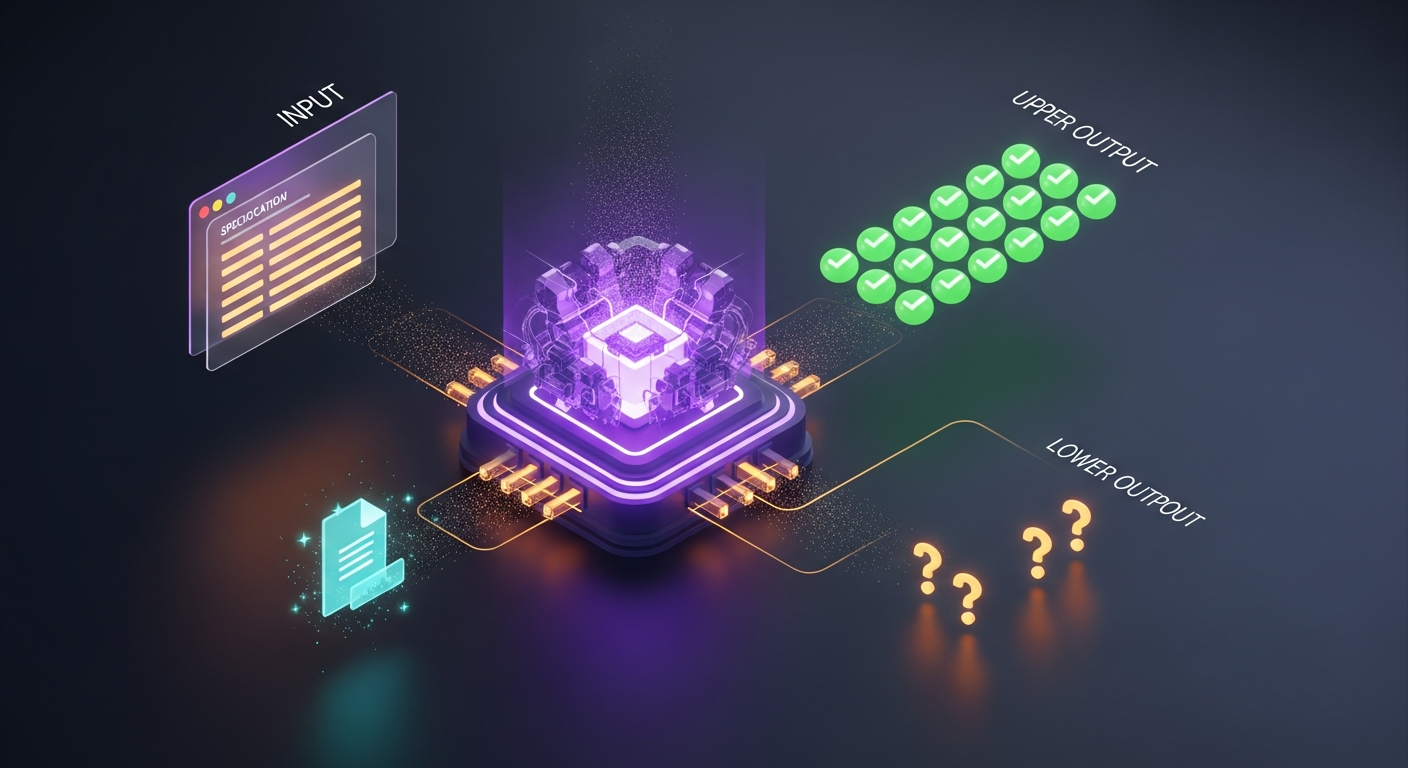

Two Lanes, One Decision

The single most important architectural decision we made early was to split the platform into two fundamentally different processing modes rather than building one unified pipeline.

Turbo Lane handles format conversions: a PNG becomes a WebP, a PDF becomes a DOCX. The input and output formats are known upfront. Processing is deterministic. There is no need for an LLM. The system converts the file, stores the result, and returns a download link. Fast, cheap, predictable.

Goal Lane handles goals: "turn my resume into a job application package," "subtitle this video in three languages," "analyze this ad creative for virality signals." These require understanding what the user actually wants, collecting missing information interactively, invoking a sequence of AI tools, and assembling output that serves the stated goal. Slow, expensive, deeply different.

The discipline the split enforces is as important as the technical boundary. A Turbo Lane job can never accidentally accumulate LLM latency. A Goal Lane job can never be incorrectly billed as a cheap conversion. The boundary forces clarity.

The Agent Loop Problem

Every agent system has an agent loop — the cycle that decides what to do next, executes it, observes the result, and decides again. The design of this loop determines how the system behaves under failure, how it handles long tasks, and how easy it is to reason about from the outside.

Studying the open-source implementations of Claude Code and Codex CLI reveals that even experienced teams make significantly different choices here.

Claude Code: The Single-Threaded While-Loop

Claude Code's core loop (visible in the open-source repository) is surprisingly simple: execute as long as the model returns tool calls; stop when it returns plain text. One flat message history, no threading, no parallel state. The loop body handles one tool call at a time.

```
# Conceptual reconstruction, not actual source
while response contains tool_calls:
    for each tool_call in response.tool_calls:
        result = execute(tool_call)
        append result to message history
    response = call_model(message_history)
return response.text
```

The elegance is the reliability. Because there is exactly one history and one execution path, the system's state at any moment is fully described by that history. Debugging means reading the message list. Interruption means appending a human message. There is no graph to reason about, no state machine to inspect.
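To make the loop shape concrete, here is a minimal runnable sketch in Python. It is not Claude Code's implementation: `fake_model`, the `TOOLS` registry, and the message dictionary layout are all hypothetical stand-ins chosen so the example runs without a model API.

```python
from typing import Callable

# Hypothetical tool registry: tool name -> plain Python callable.
TOOLS: dict[str, Callable[..., str]] = {
    "add": lambda a, b: str(a + b),
}


def fake_model(history: list[dict]) -> dict:
    """Stand-in for a real model call: requests one tool call, then answers."""
    tool_results = [m for m in history if m["role"] == "tool"]
    if not tool_results:
        return {"text": None, "tool_calls": [{"name": "add", "args": {"a": 2, "b": 3}}]}
    return {"text": f"The sum is {tool_results[-1]['content']}.", "tool_calls": []}


def run_agent(user_message: str, call_model=fake_model) -> tuple[str, list[dict]]:
    """Loop while the model returns tool calls; stop on plain text."""
    history = [{"role": "user", "content": user_message}]
    response = call_model(history)
    while response["tool_calls"]:
        history.append({"role": "assistant", "tool_calls": response["tool_calls"]})
        for call in response["tool_calls"]:  # one tool call at a time
            result = TOOLS[call["name"]](**call["args"])
            history.append({"role": "tool", "name": call["name"], "content": result})
        response = call_model(history)
    history.append({"role": "assistant", "content": response["text"]})
    return response["text"], history
```

Calling `run_agent("What is 2 + 3?")` returns the final text together with the flat history, which is the only state the loop ever holds.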

The tradeoff is that everything is sequential. Claude Code compensates by letting users spawn subagents — isolated child conversations with their own context windows — and coordinate them via tmux panes. Parallelism is a layer above the loop, not inside it.

For engineers wanting to dig further: the core loop implementation and the Task tool that spawns subagents are the two files worth reading in the Claude Code source.

Codex CLI: The Five-Phase State Machine

OpenAI's Codex CLI (rewritten in Rust, open-sourced in April 2025) structures each turn as five explicit phases: prompt assembly, inference, response handling, tool execution, and assistant message. One "user turn" can involve dozens of model-tool iterations internally.

```rust
// Conceptual reconstruction, not actual source
fn agent_turn(input: UserInput) -> AssistantMessage {
    loop {
        let prompt = assemble_prompt(input, history, tools);
        let response = stream_inference(prompt);
        match response {
            ToolCall(calls) => {
                let results = execute_tools(calls); // sandboxed
                history.append(results);
                continue; // loop again
            }
            Text(message) => return message, // done
        }
    }
}
```

What makes Codex interesting is the security model wrapping the loop. Tool execution happens inside a kernel-level sandbox (Landlock on Linux, Seatbelt on macOS). MCP tools are explicitly outside the sandbox: the agent trusts their results but cannot control their access. This is a clear statement about trust boundaries: the framework guarantees containment for built-in tools but delegates responsibility to MCP server authors.

Codex also makes the loop stateless by design. Rather than using OpenAI's previous_response_id parameter (which would let the server maintain conversation state), Codex sends the full history with every request. The reason is Zero Data Retention compliance: customers who need ZDR cannot have their reasoning stored server-side. Stateless requests work across any OpenAI-compatible endpoint, including local Ollama instances.

For engineers wanting to dig further: the sandbox.rs file in the codex-rs crate and the tool execution routing in codex-cli are the sections worth studying.

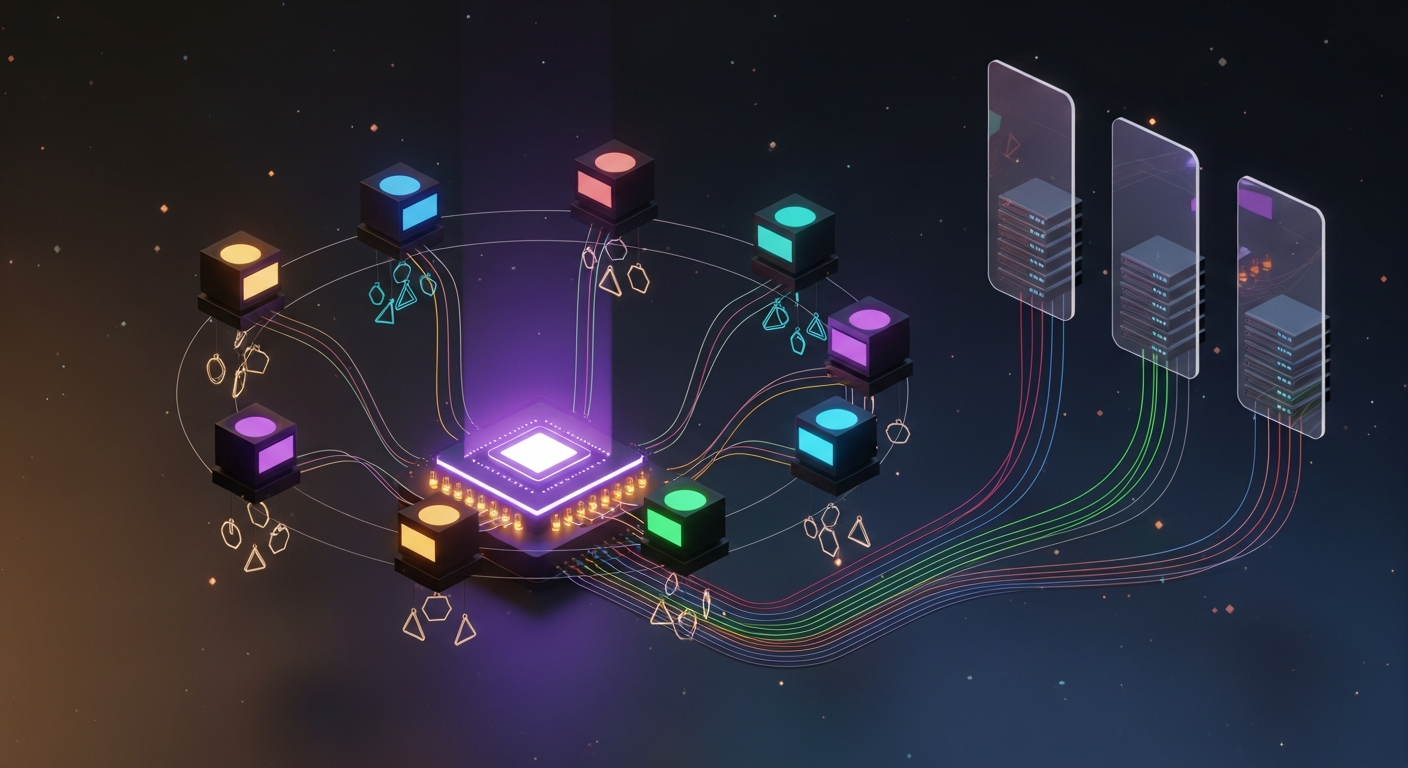

Our Approach: A Compiled Graph

We use LangGraph rather than implementing a custom loop. The difference is that the agent's behavior is defined as a directed graph — nodes for reasoning, tool execution, input collection, and completion — rather than a while-loop. The framework compiles this graph and executes it, handling state transitions according to the edges we define.

The routing decision after each model call is explicit. The model can:

- Call a tool: execution continues with the tool result appended to state

- Request user input: execution pauses at a named checkpoint and waits for a PATCH /slots call

- Finish: the graph terminates and results are assembled

The graph also has a hard ceiling on iterations. An agent that never reaches a terminal state — due to a confused model or an unexpected tool error — will be stopped at a configurable maximum. This matters in production: runaway agents are expensive and confusing to users.
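The three-way routing decision and the iteration ceiling can be sketched as a plain Python function. This is a framework-free illustration, not our LangGraph wiring: the `tool_calls` state key, the route names, and the default of 25 iterations are assumptions made for the example.

```python
from typing import Literal

MAX_ITERATIONS = 25  # hypothetical default; the real ceiling is configurable

Route = Literal["tools", "await_input", "finish"]


def route_after_model(state: dict, max_iterations: int = MAX_ITERATIONS) -> Route:
    """Pick the next node from the latest model output stored in state."""
    if state["iteration_count"] >= max_iterations:
        return "finish"  # hard stop for runaway agents
    if state.get("pending_slot_request") is not None:
        return "await_input"  # pause at a named checkpoint
    if state.get("tool_calls"):
        return "tools"  # execute tools, then reason again
    return "finish"
```

Checking the ceiling first means a confused model that keeps requesting tools still terminates, regardless of what else is in the state.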

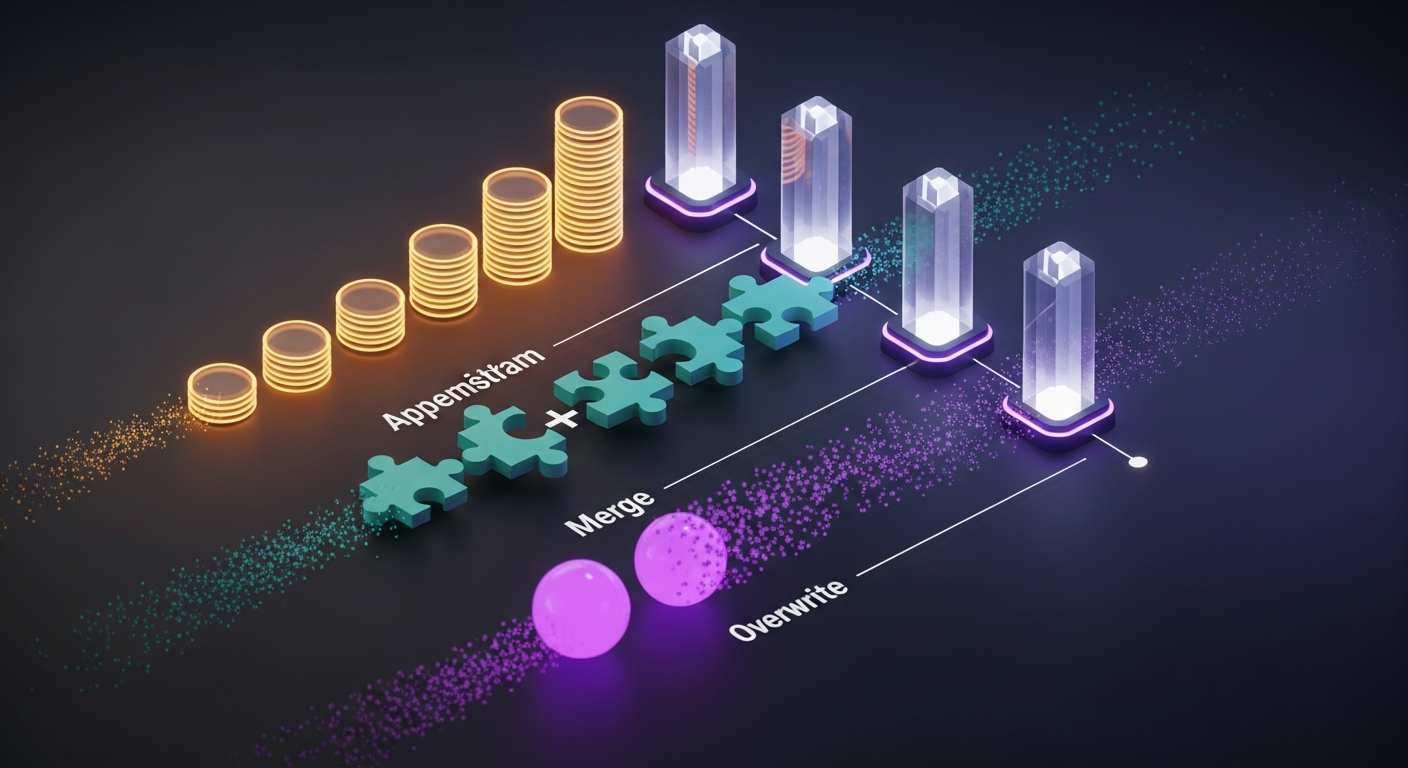

State as a First-Class Citizen

The most consequential LangGraph design decision is how you model state. State flows through every node and edge. Get it wrong and you spend months patching around its limitations.

LangGraph lets you annotate each state field with a reducer — a function that determines how new values merge with existing ones. This sounds like a detail but changes how you think about the agent's execution model.

```python
from typing import Annotated, TypedDict

from langgraph.graph.message import add_messages


def merge_dicts(old: dict, new: dict) -> dict:
    """Reducer (assumed shallow merge): new keys are added, existing keys updated."""
    return {**old, **new}


class AgentState(TypedDict):
    # APPEND: conversation history accumulates across turns
    messages: Annotated[list, add_messages]
    # MERGE: slot values accumulate as they are filled
    filled_slots: Annotated[dict, merge_dicts]
    # MERGE: tool outputs accumulate as tools execute
    intermediate_results: Annotated[dict, merge_dicts]
    # OVERWRITE: control flow fields use latest value
    iteration_count: int
    is_complete: bool
    pending_slot_request: dict | None
```

The distinction between append, merge, and overwrite fields encodes your mental model of what "progress" means. Conversation history grows: that is how agents remember what happened. Slot values accumulate: that is how the agent collects information progressively. Control flow overwrites: only the current iteration count matters.

A mistake we made early was treating all fields as overwrite. This meant that a node which updated filled_slots would overwrite any slots filled by a previous node, not merge them. The symptom was subtle: the agent would "forget" information it had already gathered when entering a new node. The fix was trivial once we understood the model — switch to a merge reducer. But it took two weeks to diagnose because the failure mode looked like a reasoning error in the LLM, not a state management bug.
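The bug is easy to reproduce outside any framework. The sketch below uses a hypothetical `apply_update` helper that mimics how a reducer-aware state store applies a node's partial update; the slot names are illustrative.

```python
from typing import Any, Callable

Reducer = Callable[[Any, Any], Any]


def merge_dicts(old: dict, new: dict) -> dict:
    """Shallow merge: keep existing keys, add or update the new ones."""
    return {**old, **new}


def apply_update(state: dict, update: dict, reducers: dict[str, Reducer]) -> dict:
    """Return a new state; fields without a reducer are simply overwritten."""
    merged = dict(state)
    for key, value in update.items():
        reducer = reducers.get(key)
        if reducer is not None and key in state:
            merged[key] = reducer(state[key], value)
        else:
            merged[key] = value
    return merged


state = {"filled_slots": {"source_language": "en"}, "iteration_count": 1}
update = {"filled_slots": {"output_format": "srt"}, "iteration_count": 2}

# All-overwrite configuration: the earlier slot is silently lost.
buggy = apply_update(state, update, reducers={})
# Merge reducer on filled_slots: both slots survive.
fixed = apply_update(state, update, reducers={"filled_slots": merge_dicts})
```

In the `buggy` result the agent has "forgotten" `source_language`, which from the outside is indistinguishable from the model ignoring earlier context.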

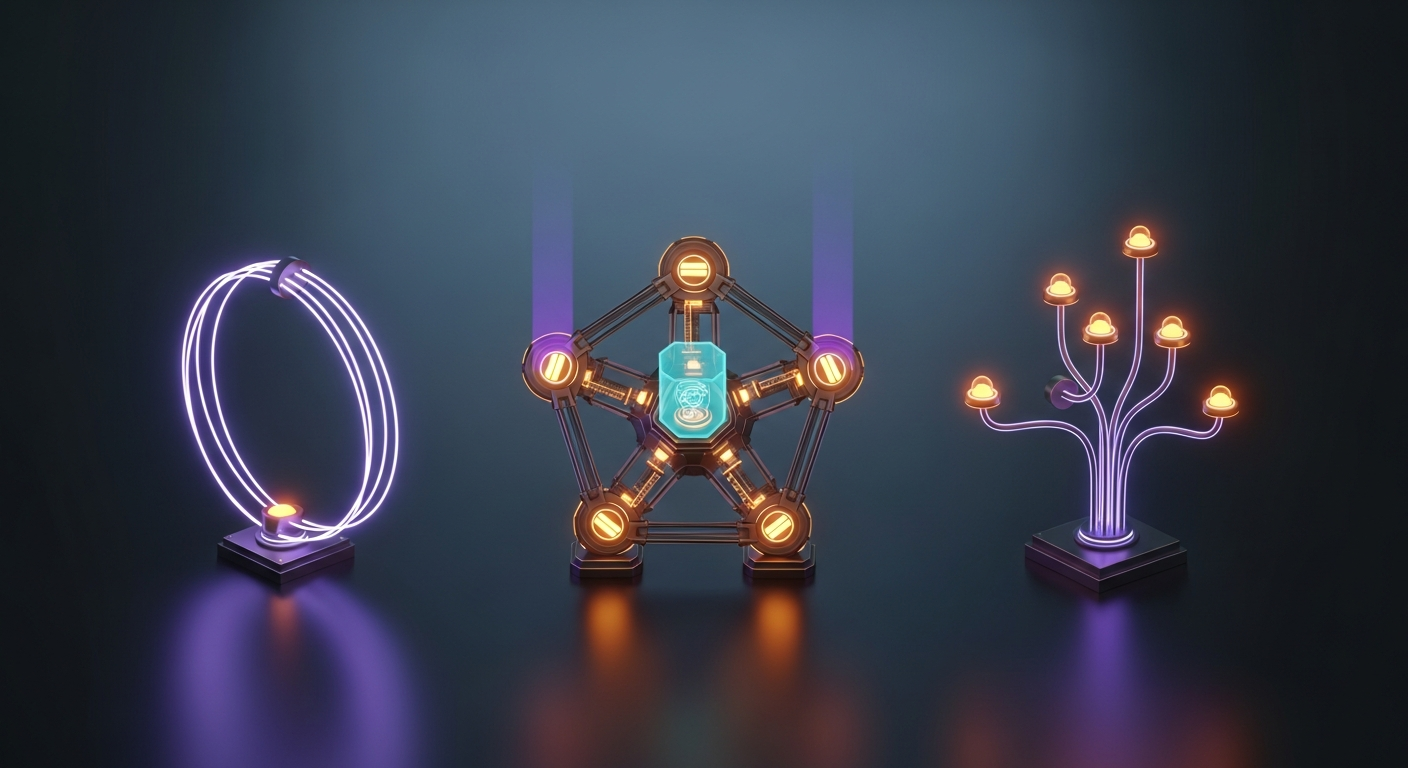

Capability Servers: MCP as a Tool Layer

The agent needs tools to do useful work. We chose to structure those tools as independently deployed capability servers following the Model Context Protocol (MCP) rather than as functions inside the agent process.

MCP defines a standard for tool discovery and invocation: a server registers tools with names, descriptions, and parameter schemas; a client discovers and calls them. The protocol is transport-agnostic (stdio, HTTP, WebSocket). The agent doesn't need to know whether a tool runs locally or on a remote server in another region.

Our current capability server inventory covers eight domains:

| Server | Capabilities |
| --- | --- |
| resume-profile-mcp | Resume parsing, profile extraction, fit scoring |
| job-search-mcp | Job description parsing, search query generation |
| text-analysis-mcp | NLP: keyword extraction, summarization, classification |
| doc-parser-mcp | PDF/DOCX extraction, table detection, layout analysis |
| media-transform-mcp | Document formatting, DOCX generation, PDF rendering |
| video-subtitle-mcp | Audio extraction, transcript generation, subtitle rendering |
| audit-compliance-mcp | Document quality checks, completeness validation |
| social-intelligence-mcp | Ad creative analysis, viral signal scoring |

Each server provides atomic tools in its domain: small, reusable operations with clear inputs and outputs. Workflows compose them. The same doc-parser-mcp:extract_text call appears in the resume workflow, the contract review workflow, and the lecture notes workflow. The server doesn't know or care which workflow is calling it.

The argument for this decomposition is the same as the argument for microservices, but more concrete here because tool domains have genuinely different operational requirements. Resume parsing is CPU-bound. Video subtitle generation is IO-bound and long-running. Job search calls an external API. If these ran in one process, their scaling requirements would fight each other. As separate servers, each scales independently — and a bug in one cannot crash another.

The interesting engineering challenge MCP introduces is file argument handling. Different tools expect file content in different forms. A document parser needs raw bytes. A text analysis tool needs extracted text. A video processor needs a presigned S3 URL (because the binary is too large to pass inline). We encode this as a per-tool configuration — a strategy that transforms a file reference into the right argument format before each call. The agent passes a file identifier; the framework resolves it to the right representation for each tool without the agent needing to know.
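One way to express that per-tool configuration is a lookup table from tool name to a resolver function. The sketch below is illustrative only: `FileRef`, the resolver names, the placeholder URL, and every tool name other than `doc-parser-mcp:extract_text` are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class FileRef:
    file_id: str
    raw: bytes  # stands in for a blob-store lookup


def as_bytes(ref: FileRef) -> bytes:
    return ref.raw


def as_text(ref: FileRef) -> str:
    return ref.raw.decode("utf-8")  # real extraction would be format-aware


def as_presigned_url(ref: FileRef) -> str:
    # Placeholder URL; a real system would ask the object store to sign it.
    return f"https://example.invalid/files/{ref.file_id}?signature=PLACEHOLDER"


# Per-tool configuration: which representation each tool expects.
FILE_ARG_STRATEGY: dict[str, Callable[[FileRef], object]] = {
    "doc-parser-mcp:extract_text": as_bytes,
    "text-analysis-mcp:summarize": as_text,
    "video-subtitle-mcp:extract_audio": as_presigned_url,
}


def resolve_file_arg(tool_name: str, ref: FileRef) -> object:
    """Turn a file reference into the argument form the named tool expects."""
    return FILE_ARG_STRATEGY[tool_name](ref)
```

The agent only ever handles `file_id`; the strategy table is the single place that knows which tools need bytes, text, or a URL.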

When Agents Aren't the Right Answer

Not every Goal Lane workflow needs an LLM making decisions. Some workflows are fully deterministic: the user uploads a video, selects subtitle language and output format, and the system executes a fixed sequence of operations. There is no ambiguity for the model to resolve, no branching logic that requires intelligence.

For these workflows, we compile the workflow specification into a DAG and execute it directly — no LLM in the critical path. The DAG executor reads step dependencies, constructs a topological ordering, and runs independent steps in parallel within each layer.

```
# DAG execution: find independent layers, run each in parallel
Layer 1: [extract_audio]                           # no deps
Layer 2: [generate_transcript]                     # needs Layer 1
Layer 3: [apply_corrections]                       # needs Layer 2
Layer 4: [render_burnin, export_srt, export_vtt]   # all need Layer 3, independent of each other
```

The DAG path is faster and cheaper than the agent path. LLM inference is eliminated. Token costs drop to zero. Latency is determined by the actual processing time of the tools, not by reasoning cycles.
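The layering itself is a standard topological sort. The sketch below shows one way to compute the layers from a dependency map; it is not our executor, and the function name and the dictionary shape are assumptions for the example. Steps within a layer are sorted alphabetically only to make the output deterministic.

```python
def topological_layers(deps: dict[str, set[str]]) -> list[list[str]]:
    """Group steps into layers; each step's dependencies sit in earlier layers."""
    remaining = {step: set(d) for step, d in deps.items()}
    layers: list[list[str]] = []
    while remaining:
        ready = sorted(step for step, d in remaining.items() if not d)
        if not ready:
            # Nothing is runnable: a cycle or a dependency on an unknown step.
            raise ValueError("unresolvable dependencies in workflow steps")
        layers.append(ready)
        for step in ready:
            del remaining[step]
        for d in remaining.values():
            d.difference_update(ready)
    return layers


SUBTITLE_WORKFLOW = {
    "extract_audio": set(),
    "generate_transcript": {"extract_audio"},
    "apply_corrections": {"generate_transcript"},
    "render_burnin": {"apply_corrections"},
    "export_srt": {"apply_corrections"},
    "export_vtt": {"apply_corrections"},
}
```

Each inner list returned by `topological_layers(SUBTITLE_WORKFLOW)` can be handed to a thread pool or `asyncio.gather`, since its steps do not depend on one another.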

The design question is which workflows get each path. Our heuristic: if a human could write a flowchart for the workflow without any "decide based on content" boxes, it belongs in the DAG path. If the workflow requires reading content and making judgments — which job descriptions best match this resume, which sections of this document need rewriting — it requires the agent path.

Declarative Workflow Specs and the Slot System

Before any workflow executes, the system needs to know what the user wants. Sometimes this is fully specified upfront (user selects from a catalog, fills a form). Often it isn't — the user uploads a video and types "subtitle this," and the system must collect the missing details: what language is spoken, what language to translate to, what output format to produce.

We model this as a slot system. Each workflow specification declares a list of required slots — pieces of information the workflow needs — along with questions to ask if they aren't provided, valid option sets, and conditions that determine when each slot is relevant.

```json
{
  "slot_id": "output_language",
  "type": "choice",
  "question": "What language should the subtitles be in?",
  "options": [
    { "value": "en", "label": "English" },
    { "value": "zh", "label": "Traditional Chinese" },
    { "value": "ja", "label": "Japanese" }
  ],
  "condition": {
    "slot": "enable_translation",
    "equals": true
  },
  "dynamic_options_from": "supported_languages"
}
```

The condition system means slots only appear when relevant. The language selection slot only appears if the user has indicated they want translation, which is itself a slot with a default of false. The UI never shows irrelevant questions.

Before presenting slots to the user, the system runs an auto-inference pass: for each slot, can the value be determined from the uploaded file's metadata without asking? A video's aspect ratio can usually be detected. A document's language can be guessed from the text. A template's output format follows from the input format. Slots that can be inferred are filled silently; only genuinely ambiguous slots surface to the user.
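Condition checks and auto-inference combine into a single question: which slots still need a user answer? The sketch below is one plausible shape for that logic, not our implementation. The function names, the `"boolean"` slot type, and the example specs are assumptions; only the `condition` structure mirrors the JSON above.

```python
def is_relevant(slot: dict, known: dict) -> bool:
    """A slot with no condition is always relevant."""
    condition = slot.get("condition")
    if condition is None:
        return True
    return known.get(condition["slot"]) == condition["equals"]


def slots_to_ask(specs: list[dict], filled: dict, inferred: dict) -> list[str]:
    """Return slot IDs that still need a user answer, in declaration order."""
    known = {**inferred, **filled}  # explicit user answers win over inference
    return [
        s["slot_id"]
        for s in specs
        if s["slot_id"] not in known and is_relevant(s, known)
    ]


SPECS = [
    {"slot_id": "source_language", "type": "choice"},
    {"slot_id": "enable_translation", "type": "boolean"},
    {
        "slot_id": "output_language",
        "type": "choice",
        "condition": {"slot": "enable_translation", "equals": True},
    },
]
```

With `source_language` inferred from the file and `enable_translation` answered as false, nothing is left to ask; answering true surfaces `output_language` as the only remaining question.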

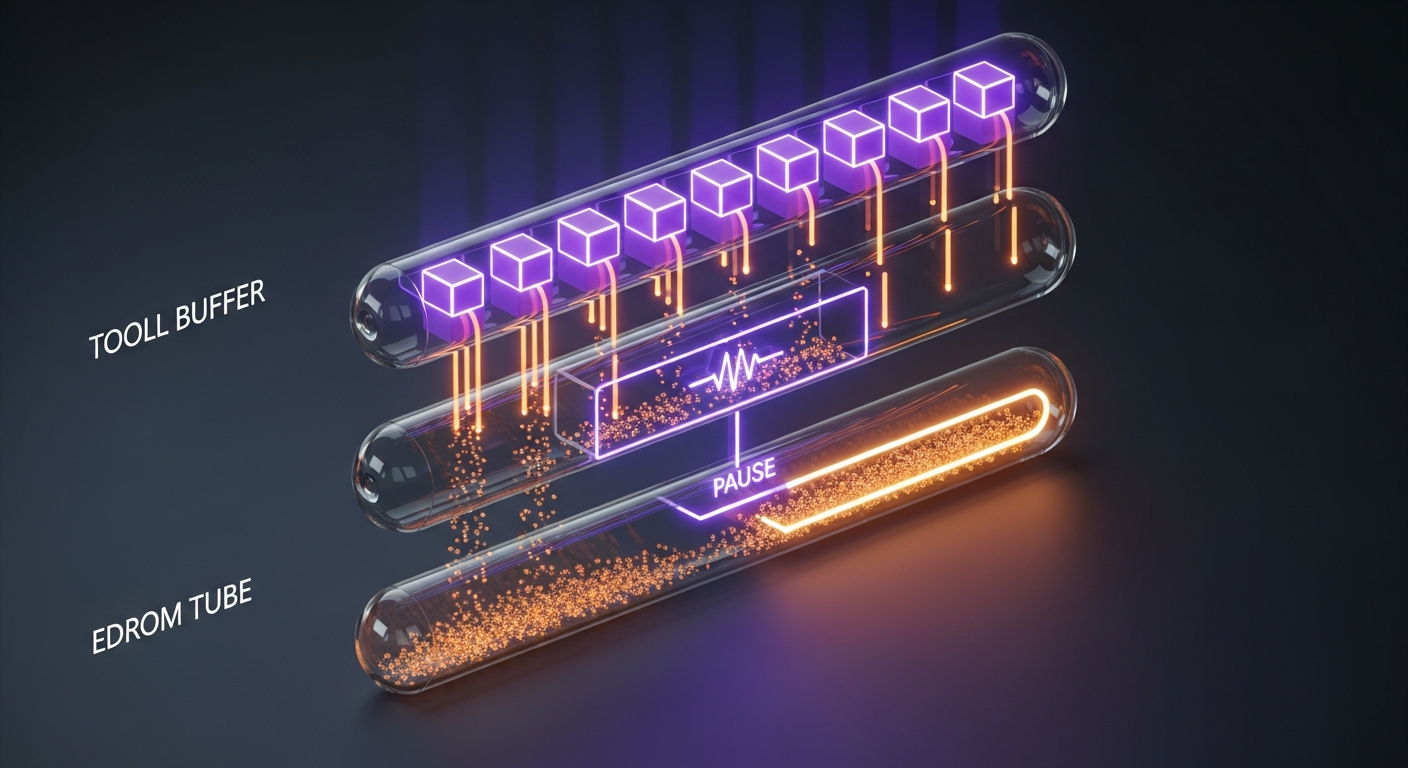

Interactive Checkpoints: Structured Human-in-the-Loop

Some workflows don't just need information at the start — they need to pause mid-execution, show the user intermediate results, and wait for a decision before continuing. We call these interactive checkpoints.

The resume-to-job-ready workflow is the clearest example. Phase 1 analyzes the resume and extracts a structured profile. The user should review and correct this profile before the system uses it to search for jobs — because any error in the profile propagates to every subsequent phase. Phase 2 runs job discovery and scores each posting. The user should select which jobs to target before the system writes tailored materials. Phase 3 generates the final output.

Three phases, two pauses. Each pause is an interactive checkpoint.

```
# Execution flow with checkpoints
Phase 1: analyze_resume() → extract_profile()
    ↓
PAUSE: emit slot_needed(id="profile_review", preview=extracted_profile)
    ↓ (user confirms or edits profile)
Phase 2: search_jobs(profile) → score_job_fit()
    ↓
PAUSE: emit slot_needed(id="job_selection", preview=ranked_jobs)
    ↓ (user selects target jobs)
Phase 3: generate_resume_variants() → generate_cover_letters() → package_zip()
    ↓
COMPLETE: artifacts ready for download
```

From the frontend's perspective, a checkpoint is a state transition. The job enters a slot_pending state with a specific checkpoint identifier. The UI renders the appropriate review component based on that identifier. When the user submits, the frontend sends a PATCH /slots call with the checkpoint ID and the user's decisions. The backend validates, marks the checkpoint complete, and resumes execution.

The design principle is that the checkpoint is a named, well-typed pause point — not a free-form interruption. The checkpoint ID determines what the user is being asked to review. The frontend can render a rich, domain-specific UI for each checkpoint type rather than a generic form. The profile review checkpoint shows a structured editor for resume fields. The job selection checkpoint shows a ranked list of opportunities with match scores.
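The pause-and-resume transition can be sketched as a small state holder with a validating submit function. This is an illustration of the idea rather than our backend: `Job`, `pause_at`, `submit_checkpoint`, and `CheckpointError` are hypothetical names, and real validation would also check the payload against the checkpoint's schema.

```python
from dataclasses import dataclass, field
from typing import Optional


class CheckpointError(ValueError):
    """Raised when a submission does not match the pending checkpoint."""


@dataclass
class Job:
    status: str = "running"  # "running" | "slot_pending" | "completed"
    pending_checkpoint: Optional[str] = None
    decisions: dict = field(default_factory=dict)


def pause_at(job: Job, checkpoint_id: str) -> None:
    """Enter slot_pending and record which checkpoint the user must resolve."""
    job.status = "slot_pending"
    job.pending_checkpoint = checkpoint_id


def submit_checkpoint(job: Job, checkpoint_id: str, payload: dict) -> None:
    """What a PATCH /slots handler might do before resuming execution."""
    if job.status != "slot_pending":
        raise CheckpointError("job is not waiting on a checkpoint")
    if checkpoint_id != job.pending_checkpoint:
        raise CheckpointError(
            f"expected {job.pending_checkpoint!r}, got {checkpoint_id!r}"
        )
    job.decisions[checkpoint_id] = payload
    job.pending_checkpoint = None
    job.status = "running"
```

Rejecting a mismatched checkpoint ID up front is what keeps the pause point well-typed: a stale or misrouted submission fails loudly instead of resuming the wrong phase.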

Real-Time Visibility: The SSE Architecture

Goal Lane jobs can run for several minutes. Without real-time feedback, users are left watching a spinner with no information about whether anything is actually happening. We use Server-Sent Events (SSE) to stream progress updates from the backend to the frontend throughout execution.

The streaming architecture has several requirements that are in tension with each other:

- Low latency — events should reach the client within milliseconds of being emitted

- Memory safety — a slow client or a disconnected client should not cause unbounded memory growth

- Graceful reconnection — if the client disconnects and reconnects, it should not receive duplicate events

- Clean shutdown — subscribers that are idle or old should be removed without leaking async tasks

The core abstraction is a per-job event queue. When a client connects to the SSE endpoint, a subscriber is created with a bounded async queue attached to that job. Events emitted during execution are pushed into the queue. The SSE response handler reads from the queue and writes events to the HTTP response stream.

```
# Subscriber lifecycle (pseudocode)
async def subscribe(job_id):
    queue = BoundedQueue(max_size=100)
    register_subscriber(job_id, queue)
    try:
        while not shutdown:
            event = await queue.get(timeout=15s)
            if event is None:
                yield keepalive_event()  # maintain connection
                check_idle_timeout()
                continue
            yield format_sse(event)
            if is_terminal(event):
                break
    finally:
        deregister_subscriber(job_id, queue)
```

The bounded queue is the key memory safety mechanism. If the client is slow and the queue fills, new events are dropped rather than buffered indefinitely. This is a deliberate tradeoff: a slow client receives fewer progress updates but cannot cause the backend to run out of memory. Dropped events are tracked as metrics; a pattern of drops indicates either a performance problem or a networking issue worth investigating.
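Drop-on-full semantics map directly onto `asyncio.Queue` with a `maxsize`. The sketch below is a minimal illustration under that assumption; the `Subscriber` class and its `dropped` counter are hypothetical names, and a real system would export the counter to its metrics backend.

```python
import asyncio


class Subscriber:
    """Bounded per-client queue that drops new events when full."""

    def __init__(self, max_size: int = 100) -> None:
        self.queue: asyncio.Queue = asyncio.Queue(maxsize=max_size)
        self.dropped = 0  # would be exported as a metric in a real system

    def publish(self, event: dict) -> bool:
        """Enqueue without blocking; return False if the event was dropped."""
        try:
            self.queue.put_nowait(event)
            return True
        except asyncio.QueueFull:
            self.dropped += 1
            return False


async def demo() -> tuple[int, int]:
    sub = Subscriber(max_size=2)
    for i in range(5):
        sub.publish({"type": "progress", "percent": i * 20})
    return sub.queue.qsize(), sub.dropped
```

Because `publish` never awaits, the producer's speed is independent of the slowest client, which is the property the bounded queue is meant to guarantee.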

The event types we emit map to distinct UI states. Progress events update a progress bar. Slot-needed events pause the UI and render a checkpoint. Completed events trigger result display. Failed events show an error with a retry option. Each event type carries enough context for the frontend to render without additional API calls — the SSE stream is the primary data channel during execution, not a notification system that triggers polling.
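On the wire, each of those events is a few lines of text in the `text/event-stream` format. The sketch below shows one way to serialize them and to avoid duplicates after a reconnect. It assumes events carry monotonically increasing integer IDs and that the server keeps a short replay log; neither detail is described above, and `format_sse` and `events_after` are illustrative names.

```python
import json
from typing import Optional


def format_sse(event_type: str, data: dict, event_id: Optional[int] = None) -> str:
    """Serialize one event in the text/event-stream wire format."""
    lines = []
    if event_id is not None:
        lines.append(f"id: {event_id}")
    lines.append(f"event: {event_type}")
    # json.dumps emits a single line by default, so one data: field suffices.
    lines.append(f"data: {json.dumps(data, separators=(',', ':'))}")
    return "\n".join(lines) + "\n\n"  # a blank line terminates the event


def events_after(
    log: list[tuple[int, str, dict]], last_event_id: Optional[int]
) -> list[tuple[int, str, dict]]:
    """Replay only events newer than the client's Last-Event-ID header."""
    return [e for e in log if last_event_id is None or e[0] > last_event_id]
```

Browsers send the last seen `id` back in the `Last-Event-ID` request header when an `EventSource` reconnects, so filtering on it is a common way to skip events the client already has.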

Connecting this back to the agent systems we studied: both Claude Code and Codex CLI stream their output via SSE. Claude Code streams tool call results mid-thought, creating the impression of "thinking out loud." Codex streams model output tokens in real time. The common pattern is that streaming is not optional in agent UX — the latency of agent inference makes some form of real-time feedback table stakes.

Processing Tiers: Match Resources to Work

Not all Goal Lane jobs have the same resource requirements. Analyzing a two-page resume takes seconds. Generating subtitles for a two-hour video in three languages takes fifteen minutes and may involve gigabytes of intermediate data. Running them on the same worker infrastructure means either over-provisioning for short jobs (expensive) or under-provisioning for large ones (slow).

We route jobs to different worker tiers based on file size at submission time:

```python
def route_to_tier(file_size_bytes: int | None) -> str:
    if file_size_bytes is None:
        return "standard"  # text/form jobs
    elif file_size_bytes < 200_000_000:
        return "standard"  # < 200MB
    elif file_size_bytes < 600_000_000:
        return "heavy"  # 200–600MB
    else:
        return "ultra"  # ≥ 600MB
```

Each tier has its own SQS queue and its own Lambda configuration. Standard workers are sized for common cases: 3 GB memory, 15-minute timeout. Heavy workers handle large documents and medium video: 10 GB, 15 minutes. Ultra workers handle long-form video and bulk processing: 15 GB, 15 minutes. The tiers are independent: heavy jobs cannot starve standard jobs, and an ultra queue backlog doesn't affect the standard queue at all.

Gemma 4 and the Open Model Frontier

Google released Gemma 4 on April 2, 2026 under Apache 2.0 — a significant licensing change from earlier Gemma releases that removes most enterprise legal friction. The architectural decisions in Gemma 4 are worth understanding for anyone building agent infrastructure.

The model family spans four variants: two dense on-device models (E2B at 2.3B effective parameters, E4B at 4.5B) and two large models (a 26B MoE with only 4B active parameters, and a 31B dense model). All variants support 128K+ context. All support tool use and reasoning via their chat template.

Two architectural choices stand out:

Alternating local/global attention. Rather than applying full attention to all context at every layer, Gemma 4 alternates between sliding-window local attention (fast, cheap, covers nearby tokens) and full global attention (expensive, covers all 128K+ tokens). The ratio is roughly 5:1 — five local layers per global layer. This is how the model supports 128K context without the quadratic cost of attending to every token at every layer.
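A back-of-the-envelope calculation shows why the interleaving matters. Per layer, full attention scores scale with n² for context length n, while sliding-window attention scales with n·w for window size w. The sketch below averages those costs over a 5:1 stack. The window size of 1,024 tokens used in the usage note is an assumption for illustration, not a figure from the Gemma 4 documentation, and the estimate ignores everything except attention-score computation.

```python
def relative_attention_cost(
    context_len: int, window: int, local_per_global: int = 5
) -> float:
    """Attention-score cost of an interleaved stack relative to all-global layers."""
    # A local layer costs n * w; dividing by n * n leaves w / n (capped at 1).
    local = min(window, context_len) / context_len
    # Average over local_per_global local layers plus one global layer.
    return (local_per_global * local + 1.0) / (local_per_global + 1)
```

With `context_len=131072` and the assumed `window=1024`, the function returns roughly 0.17: the interleaved stack spends about a sixth of what an all-global stack would on attention scores, almost all of it in the single global layer per group.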

Per-layer embeddings (E2B/E4B only). The small models maintain a second, small embedding table that injects token-specific signals into every decoder layer, not just the first. Rather than front-loading all token information into a single upfront embedding that must survive 24+ transformer layers, each layer receives a fresh reminder of what token it is processing. The engineering tradeoff is interesting: the embeddings live in flash storage (not VRAM), loaded once at initialization. The model pays a startup cost to reduce the per-layer representational burden — a pragmatic optimization for on-device inference where VRAM is the scarce resource.

For agent infrastructure, the E2B/E4B models are particularly interesting. They run fully offline on commodity hardware — phones, Raspberry Pi, NVIDIA Jetson Orin Nano. This opens an architecture where agent tools execute at the edge, with zero network latency and zero API cost. The function calling support is native to the model weights, not prompt engineering — tool definitions are part of the chat template, and the model outputs structured calls that parse cleanly.

```python
# Gemma 4 function calling (chat template)
inputs = processor.apply_chat_template(
    messages,
    tools=[{"type": "function", "function": {
        "name": "extract_profile",
        "description": "Extract structured profile from resume text",
        "parameters": {
            "type": "object",
            "properties": {
                "text": {"type": "string"},
                "target_role": {"type": "string"}
            },
            "required": ["text"]
        }
    }}],
    enable_thinking=True,  # activates chain-of-thought reasoning
    return_tensors="pt"
)
# Model output includes a reasoning trace + structured tool call
```

The reasoning mode (enable_thinking=True) emits an explicit chain-of-thought trace before the final response. For agent workflows where explainability matters, this is valuable: you can log the model's reasoning about which tool to call and why, not just the call itself. The 31B dense variant scores 89.2% on AIME 2026 with thinking enabled, placing it among the top reasoning models available.

We are evaluating Gemma 4 E4B for edge-deployed agents in specific workflow categories where privacy requirements make cloud model calls undesirable. The Apache 2.0 license, the tool use support, and the per-layer embedding architecture make it the most compelling open option we have evaluated.

Seven Lessons from Building This

After running this architecture in production across eight active Goal Lane workflow categories and nearly 100 workflow specifications, we have some hard-won lessons.

1. State design errors look like model errors. When the agent "forgets" something it said two turns ago, the first instinct is to blame the LLM. Often it is a state management bug — a field configured to overwrite when it should merge, or a result that is written to the wrong key. Instrument your state transitions before you blame the model.

2. The workflow spec is the product specification. Every slot definition, every condition, every option set is a product decision. The engineering team cannot write these alone — they require domain knowledge about what users actually need and what edge cases exist. The most useful thing we did was get a non-engineer to read every slot definition in plain English and flag the ones that didn't make sense.

3. Auto-inference is worth the investment. The slot system is only tolerable if users rarely have to answer more than a couple of questions. Every slot that can be inferred from file metadata is a question that doesn't interrupt the user. The payoff is disproportionate: reducing a five-question flow to two questions changes the UX qualitatively, not just quantitatively.

4. Bounded queues are mandatory, not optional. We initially implemented SSE subscribers with unbounded queues "for simplicity." The first time we had a slow client connected to a high-frequency workflow, the queue grew until the process was killed. Bounded queues with drop-on-full semantics are the correct default, not a premature optimization.

5. The DAG path should be your default, not your fallback. We originally designed Goal Lane as agent-first, with DAG execution as an optimization for simple cases. In practice, the majority of our workflows are deterministic enough to run as DAGs. The agent path is the exception — it is for workflows that genuinely require the model to read content and make decisions. Start by asking whether you actually need an agent, not how to build one.

6. Checkpoint IDs are a contract, not an implementation detail. When a checkpoint emits slot_needed(id="profile_review"), the frontend renders a specific UI component based on that ID. Changing the checkpoint ID breaks the frontend silently — the component doesn't render, and the user sees an empty review screen. Checkpoint IDs are part of the API contract. Version them and document them.

7. The permission model is the most important design decision you will delay. Both Claude Code and Codex CLI have sophisticated permission systems — explicit approval modes, scoped tool access, per-command allow/deny lists. We built these concerns in late. The cost was a period where any agent could invoke any tool in any workflow, which was fine in development and terrifying once we had production users. Define your authorization model before you write your first workflow spec.
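As a starting point for lesson 7, an authorization model can be as small as a deny-by-default allowlist checked before every tool call. The sketch below is illustrative only: the workflow IDs, the tool names, and `authorize_tool_call` are hypothetical and do not describe the permission system we eventually shipped.

```python
# Hypothetical per-workflow allowlist; anything not listed is denied.
WORKFLOW_TOOL_ALLOWLIST: dict[str, frozenset[str]] = {
    "resume-to-job-ready": frozenset({
        "resume-profile-mcp:extract_profile",
        "job-search-mcp:search_jobs",
    }),
    "video-subtitle": frozenset({
        "video-subtitle-mcp:extract_audio",
    }),
}


def authorize_tool_call(workflow_id: str, tool_name: str) -> None:
    """Raise PermissionError unless the workflow explicitly allows the tool."""
    allowed = WORKFLOW_TOOL_ALLOWLIST.get(workflow_id, frozenset())
    if tool_name not in allowed:
        raise PermissionError(
            f"{tool_name} is not permitted in workflow {workflow_id}"
        )
```

Unknown workflows get an empty allowlist, so a missing entry fails closed rather than granting access to every tool.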

Where to Look Next

If this post prompted interest in exploring agent infrastructure more deeply, the open-source references are worth your time:

The Claude Code source is organized so that the core agent loop, the tool permission system, and the hook infrastructure are readable in sequence. Start with the main agent loop, then the Task tool that spawns subagents, then the PreToolUse hook system — each section introduces a new layer of the architecture.

The Codex CLI Rust source at github.com/openai/codex rewards reading the sandbox.rs file alongside the tool execution flow. The sandbox design and the decision to make requests stateless (rather than using previous_response_id) are the two most interesting architectural choices — both are clearly explained in the commit history.

For LangGraph, the documentation on state reducers and the examples of interrupt-and-resume (human-in-the-loop) workflows translate directly to production use cases. The framework has matured significantly — the 2024 LangGraph documentation is outdated in ways that will mislead you; use the current docs.

For Gemma 4, the Hugging Face model card and the technical report are both public. The visual guide by Maarten Grootendorst is the most accessible architectural overview we found.

An agent system is not a chatbot with tool calls added. It is a state machine that happens to use an LLM for some of its transitions. The engineering discipline is in designing that state machine — the states, the transitions, the failure modes — before writing a single prompt.

What's Next

Alpha v0.11.0 stabilizes the agent infrastructure described in this post. The next milestone focuses on agent observability: structured traces for every workflow execution, per-step latency tracking, and anomaly detection for agents that are consuming more tokens than expected. We want the agent's reasoning to be as inspectable as a traditional function's execution — a goal that requires building observability into the architecture, not layering it on afterward.

The broader roadmap includes edge-deployed agents for privacy-sensitive categories, multi-agent parallelism for complex multi-document workflows, and deeper MCP ecosystem integration as more capability servers become available in the open-source community.