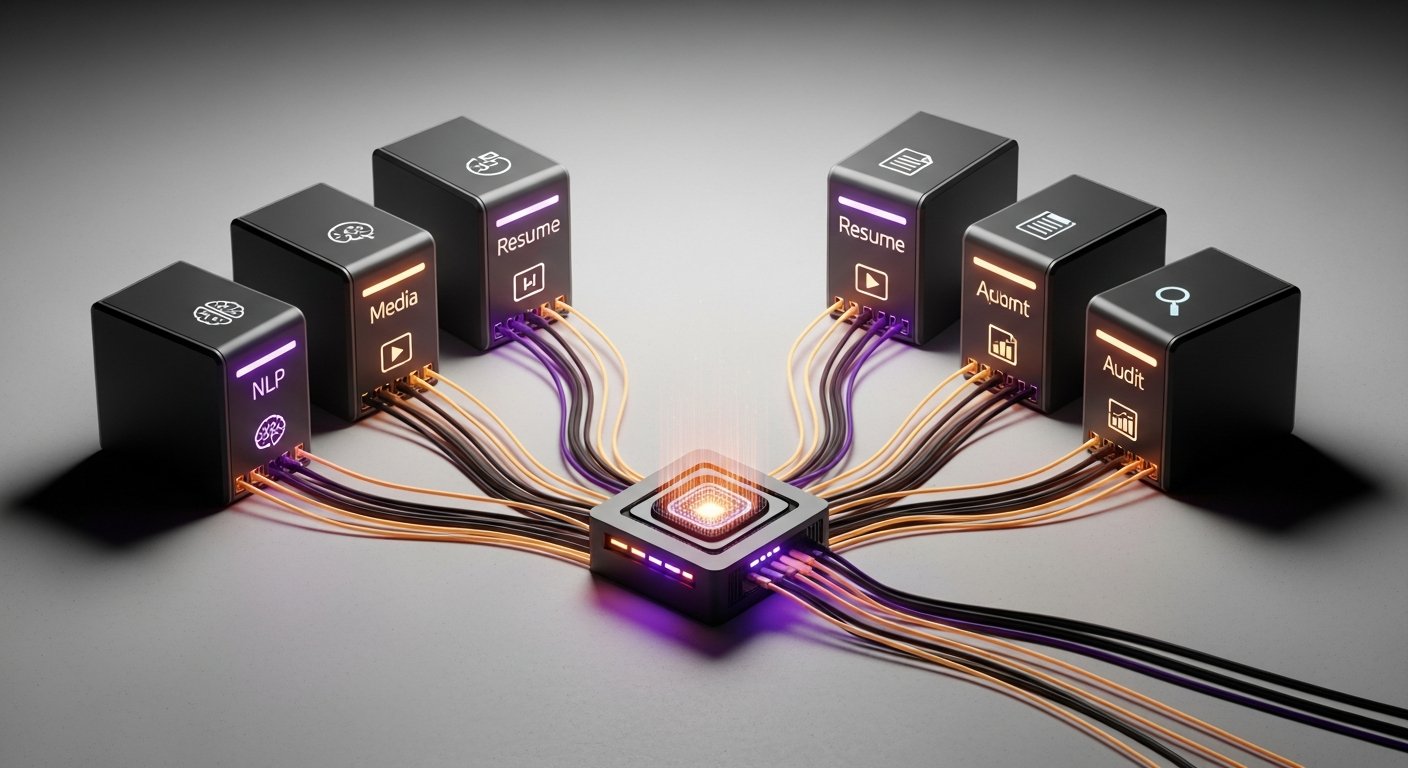

An AI agent without tools is just a chatbot. The tools it has access to define what it can accomplish. In Goal Lane, tools are provided by a set of independently deployed capability servers following the Model Context Protocol (MCP) — a standard for tool discovery and invocation between agents and external services.

Why Capability-Based Servers

The alternative to discrete capability servers is a monolithic tool service — one large backend that handles resume parsing, video processing, NLP, job search, and everything else. We rejected this for three reasons:

Independent scaling. Resume parsing is CPU-bound and needs many instances during peak job submissions. Video subtitle generation is IO-bound and long-running. If they are packed together, scaling up for CPU-intensive parsing also scales up idle video processors, and vice versa.

Independent deployment. When we improved the resume parser in month 3, we deployed only resume-profile-mcp. No other server needed a restart. Monolith deployments would require restarting the entire tool layer for any change.

Capability isolation. A bug in the job search server cannot crash the document extraction server. Failure domains are the size of one service, not the entire tool layer.

Current MCP Server Inventory

| Server | Capabilities |
| --- | --- |
| resume-profile-mcp | Resume parsing, profile extraction, fit scoring |
| job-search-mcp | Job description parsing, search query generation |
| text-analysis-mcp | NLP: keyword extraction, summarization, classification |
| doc-parser-mcp | PDF/DOCX text extraction, table extraction |
| media-transform-mcp | Document formatting, DOCX generation, PDF conversion |
| video-subtitle-mcp | Subtitle extraction, transcript generation |
| audit-compliance-mcp | Document quality checks, compliance validation |
| social-intelligence-mcp | Ad creative analysis, content viral scoring |

Each server registers its tools with the MCP gateway at startup, and the gateway maintains a live tool catalog. When the agent needs tools for a workflow, it queries the gateway, so it never needs hardcoded knowledge of which server provides which tool.
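The register-at-startup, query-at-runtime flow can be pictured as an in-memory catalog. This is a minimal sketch, not the gateway's implementation: `ToolSpec`, `ToolCatalog`, and its methods are hypothetical, and a real MCP gateway would discover tools over the protocol itself (MCP defines a `tools/list` request for this) rather than through direct method calls.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ToolSpec:
    """One tool exposed by a capability server (hypothetical shape)."""
    server: str
    name: str
    description: str

    @property
    def qualified_name(self) -> str:
        # Follows the {server}:{tool_name} naming convention.
        return f"{self.server}:{self.name}"


class ToolCatalog:
    """Hypothetical in-memory live tool catalog held by the gateway."""

    def __init__(self) -> None:
        self._tools: dict[str, ToolSpec] = {}

    def _drop(self, server: str) -> None:
        self._tools = {k: v for k, v in self._tools.items() if v.server != server}

    def register_server(self, server: str, tools: list[tuple[str, str]]) -> None:
        # Called at server startup. Replacing the server's previous entries
        # means a redeployed server's catalog reflects its current tool set.
        self._drop(server)
        for name, description in tools:
            spec = ToolSpec(server, name, description)
            self._tools[spec.qualified_name] = spec

    def deregister_server(self, server: str) -> None:
        # Hypothetical: remove a server's tools when it goes away.
        self._drop(server)

    def list_tools(self) -> list[str]:
        # What the agent queries: qualified names only, no endpoints.
        return sorted(self._tools)


catalog = ToolCatalog()
catalog.register_server("text-analysis-mcp", [
    ("extract_keywords", "keyword extraction"),
    ("summarize_text", "summarization"),
])
catalog.register_server("doc-parser-mcp", [
    ("extract_text", "raw text extraction from PDF/DOCX"),
])
print(catalog.list_tools())
# ['doc-parser-mcp:extract_text', 'text-analysis-mcp:extract_keywords', 'text-analysis-mcp:summarize_text']
```

Because the agent only ever sees `list_tools()` output, redeploying one server (the "month 3" resume-parser change, say) updates the catalog without touching any other server's entries.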

Tool Naming Convention

Tools follow a {server}:{tool_name} naming convention. The colon separator makes routing unambiguous:

resume-profile-mcp:parse_document → parses uploaded resume

resume-profile-mcp:extract_profile → extracts structured profile from parsed text

resume-profile-mcp:score_fit → scores resume against a job description

text-analysis-mcp:extract_keywords → keyword extraction

text-analysis-mcp:summarize_text → summarization

doc-parser-mcp:extract_text → raw text extraction from PDF/DOCX

When the agent calls resume-profile-mcp:parse_document, the MCP gateway routes the call to resume-profile-mcp and invokes parse_document. The agent doesn't know the server's endpoint or transport protocol.
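Since the server names shown here use hyphens but never colons, the gateway can route by splitting a qualified name on its first colon. A minimal sketch, assuming a hypothetical gateway-side `ENDPOINTS` table; the URLs are placeholders, not real Goal Lane addresses.

```python
# Hypothetical gateway-side endpoint table; the agent never sees it.
ENDPOINTS = {
    "resume-profile-mcp": "http://resume-profile-mcp.internal/mcp",
    "text-analysis-mcp": "http://text-analysis-mcp.internal/mcp",
}


def route(qualified_name: str) -> tuple[str, str]:
    """Split '{server}:{tool_name}' and resolve the server's endpoint."""
    # partition() splits on the first colon only.
    server, sep, tool = qualified_name.partition(":")
    if not sep or not server or not tool:
        raise ValueError(f"expected '{{server}}:{{tool_name}}', got {qualified_name!r}")
    if server not in ENDPOINTS:
        raise LookupError(f"no registered server named {server!r}")
    return ENDPOINTS[server], tool


endpoint, tool = route("resume-profile-mcp:parse_document")
print(endpoint, tool)  # http://resume-profile-mcp.internal/mcp parse_document
```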

The Six File Argument Strategies

Each MCP tool has a different expectation for how file content should be passed. A document parser wants raw bytes. A text analysis tool wants extracted text. A video processor wants a presigned URL. We encode these expectations as FileArgStrategy — a per-tool configuration that transforms a file ID into the right argument format before calling the tool.

```python
import base64
from enum import Enum

# FileStorageService and detect_mime_type are Goal Lane internals (not shown here).


class FileArgStrategy(str, Enum):
    BINARY_BASE64 = "binary_base64"
    PLAIN_TEXT_CONTENT = "plain_text_content"
    PLAIN_TEXT_TEXT = "plain_text_text"
    IMAGE_BASE64 = "image_base64"
    VIDEO_S3_PRESIGNED = "video_s3_presigned_url"
    PASSTHROUGH = "passthrough"


# Tool → strategy mapping (configured at tool registration)
TOOL_FILE_STRATEGIES = {
    "resume-profile-mcp:parse_document": FileArgStrategy.BINARY_BASE64,
    "doc-parser-mcp:extract_text": FileArgStrategy.PLAIN_TEXT_CONTENT,
    "text-analysis-mcp:classify_text": FileArgStrategy.PLAIN_TEXT_TEXT,
    "image-ocr-mcp:extract": FileArgStrategy.IMAGE_BASE64,
    "video-subtitle-mcp:create_job": FileArgStrategy.VIDEO_S3_PRESIGNED,
    "knowledge:research_domain": FileArgStrategy.PASSTHROUGH,
}


async def resolve_file_args(
    tool_name: str,
    raw_args: dict,
    file_storage: "FileStorageService",
) -> dict:
    """Turn a file_id argument into the format the target tool expects."""
    # Tools without a configured strategy default to PASSTHROUGH.
    strategy = TOOL_FILE_STRATEGIES.get(tool_name, FileArgStrategy.PASSTHROUGH)
    file_id = raw_args.get("file_id")
    if file_id is None:
        return raw_args  # nothing to resolve

    if strategy == FileArgStrategy.BINARY_BASE64:
        # Raw bytes, base64-encoded into a JSON-safe string.
        raw_bytes = await file_storage.download(file_id)
        return {
            **raw_args,
            "file_data": base64.b64encode(raw_bytes).decode("ascii"),
            "file_type": detect_mime_type(raw_bytes),
        }

    if strategy == FileArgStrategy.PLAIN_TEXT_CONTENT:
        # Previously extracted text, passed under the "content" key.
        text = await file_storage.get_extracted_text(file_id)
        return {**raw_args, "content": text}

    if strategy == FileArgStrategy.VIDEO_S3_PRESIGNED:
        # One-hour URL so the video server fetches the file itself.
        url = await file_storage.presign_url(file_id, ttl=3600)
        return {**raw_args, "input_url": url}

    # As written, PLAIN_TEXT_TEXT and IMAGE_BASE64 also fall through to here.
    return raw_args  # PASSTHROUGH: no transformation
```

This abstraction means the LLM never sees raw file bytes or presigned URLs in its context. It calls tools with file_id arguments, and the strategy layer handles the rest transparently.
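The `resolve_file_args` listing has no branch for `PLAIN_TEXT_TEXT` or `IMAGE_BASE64`, so tools mapped to those strategies currently receive the bare `file_id`. One possible way to fill the gap is sketched below. The argument keys `text`, `image_data`, and `mime_type` are assumptions (the strategy name only suggests that `PLAIN_TEXT_TEXT` differs from `PLAIN_TEXT_CONTENT` in its key), `InMemoryStorage` is a hypothetical stand-in for `FileStorageService`, and `sniff_image_mime` is a deliberately tiny substitute for a real MIME detector.

```python
import asyncio
import base64
from enum import Enum


class FileArgStrategy(str, Enum):
    # Subset of the enum above, enough for this sketch.
    PLAIN_TEXT_CONTENT = "plain_text_content"
    PLAIN_TEXT_TEXT = "plain_text_text"
    IMAGE_BASE64 = "image_base64"
    PASSTHROUGH = "passthrough"


class InMemoryStorage:
    """Hypothetical stand-in for FileStorageService."""

    def __init__(self, files: dict[str, bytes], texts: dict[str, str]) -> None:
        self._files, self._texts = files, texts

    async def download(self, file_id: str) -> bytes:
        return self._files[file_id]

    async def get_extracted_text(self, file_id: str) -> str:
        return self._texts[file_id]


def sniff_image_mime(data: bytes) -> str:
    # Magic-number check for two common formats only.
    if data.startswith(b"\x89PNG\r\n\x1a\n"):
        return "image/png"
    if data.startswith(b"\xff\xd8\xff"):
        return "image/jpeg"
    return "application/octet-stream"


async def resolve_text_or_image(
    strategy: FileArgStrategy, raw_args: dict, storage: InMemoryStorage
) -> dict:
    file_id = raw_args.get("file_id")
    if file_id is None:
        return raw_args
    if strategy in (FileArgStrategy.PLAIN_TEXT_CONTENT, FileArgStrategy.PLAIN_TEXT_TEXT):
        # Same extracted text; only the argument key differs (key names assumed).
        key = "content" if strategy is FileArgStrategy.PLAIN_TEXT_CONTENT else "text"
        return {**raw_args, key: await storage.get_extracted_text(file_id)}
    if strategy is FileArgStrategy.IMAGE_BASE64:
        data = await storage.download(file_id)
        return {
            **raw_args,
            "image_data": base64.b64encode(data).decode("ascii"),
            "mime_type": sniff_image_mime(data),
        }
    return raw_args  # PASSTHROUGH


storage = InMemoryStorage(
    files={"img_1": b"\x89PNG\r\n\x1a\n" + b"\x00" * 8},
    texts={"doc_1": "Example resume text."},
)
args = asyncio.run(resolve_text_or_image(
    FileArgStrategy.PLAIN_TEXT_TEXT, {"file_id": "doc_1"}, storage))
print(args)  # {'file_id': 'doc_1', 'text': 'Example resume text.'}
```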

Three Control-Flow Tools

Beyond data tools, every Goal Lane agent has access to three control-flow tools that are provided by the agent itself, not by MCP servers:

```
request_user_input(slot_type, slot_id, prompt_key, options=[])
  → Pauses graph execution and requests a user decision
  → Guardrail: cannot be called until ≥2 data tools have completed
    (prevents the agent from asking questions before reading the file)

store_artifact(file_name, content, content_encoding, content_ref_id)
  → Uploads generated content to object storage
  → Three input modes: UTF-8 text, base64 binary, or a reference ID
    from a previous MCP tool call (avoids re-encoding large files)

complete_workflow(summary, artifacts)
  → Signals workflow completion to the graph router
  → Always runs last: if batched with other tools, it is reordered to the end
```

The request_user_input guardrail is a hard constraint: the agent cannot ask the user anything until it has called at least two data tools. This ensures the agent analyzes the user's file before asking clarifying questions, preventing the frustrating pattern where an AI agent immediately asks "what do you want to do?" after receiving a document.
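Both rules can be enforced in one pass before a batch of tool calls executes. A minimal sketch under stated assumptions: `ToolCall`, `prepare_batch`, and `GuardrailViolation` are hypothetical names; any tool outside the three control-flow tools counts as a data tool; and only calls that completed in earlier turns count toward the threshold of two.

```python
from dataclasses import dataclass, field

CONTROL_FLOW_TOOLS = {"request_user_input", "store_artifact", "complete_workflow"}
MIN_DATA_TOOLS_BEFORE_INPUT = 2


@dataclass
class ToolCall:
    name: str
    args: dict = field(default_factory=dict)


class GuardrailViolation(Exception):
    """Raised when the agent calls request_user_input too early."""


def prepare_batch(batch: list[ToolCall], completed: list[str]) -> list[ToolCall]:
    """Validate a batch of tool calls and order it for execution.

    `completed` holds the names of tools that finished in earlier turns.
    """
    data_done = sum(1 for name in completed if name not in CONTROL_FLOW_TOOLS)
    asks_user = any(c.name == "request_user_input" for c in batch)
    if asks_user and data_done < MIN_DATA_TOOLS_BEFORE_INPUT:
        raise GuardrailViolation(
            f"request_user_input needs {MIN_DATA_TOOLS_BEFORE_INPUT} completed "
            f"data tools; only {data_done} so far"
        )
    # sorted() is stable: complete_workflow (key True) moves to the end and
    # every other call keeps the order the model emitted.
    return sorted(batch, key=lambda c: c.name == "complete_workflow")


batch = [ToolCall("complete_workflow"), ToolCall("store_artifact"),
         ToolCall("text-analysis-mcp:summarize_text")]
print([c.name for c in prepare_batch(batch, completed=[])])
# ['store_artifact', 'text-analysis-mcp:summarize_text', 'complete_workflow']
```

Rejecting the whole batch (rather than silently dropping the early question) gives the model an explicit error it can react to by calling more data tools first; that choice is an assumption of this sketch.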

Reference IDs and Chained Tool Calls

MCP tools that generate large binary outputs (PDFs, formatted documents) return a reference ID instead of embedding the binary in the message. The agent passes this reference ID to subsequent tools or to store_artifact:

```
# Tool chain without re-encoding:
resume-profile-mcp:parse_document
  → returns { ref_id: "doc_abc123", page_count: 4 }

resume-profile-mcp:tailor_resume
  → accepts { source_ref_id: "doc_abc123", target_role: "SWE" }
  → returns { ref_id: "tailored_xyz456", format: "pdf" }

store_artifact
  → accepts { content_ref_id: "tailored_xyz456", file_name: "Resume_SWE.pdf" }
  → uploads from source, never passes through agent context
```

Without reference IDs, a 200KB PDF would need to be base64-encoded, placed in the LLM's context window, decoded, and re-encoded at every tool hop. Reference IDs keep binary data out of the context window entirely.
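Mechanically, a reference ID is a key into a server-side blob store, and store_artifact resolves whichever of its three input modes it was given into bytes. A minimal in-memory sketch: `RefStore`, the `ref_` ID format, and the extra `upload` and `refs` parameters (standing in for the object-storage client and the blob store) are hypothetical; only `file_name`, `content`, `content_encoding`, and `content_ref_id` come from the signature above. The `base64_length` helper quantifies the inline alternative: base64 turns every 3 bytes into 4 characters, so a 200KB PDF becomes about 267KB of text per hop.

```python
import base64
import uuid
from typing import Callable, Optional


class RefStore:
    """Hypothetical server-side store: tools put bytes here, hand back an ID."""

    def __init__(self) -> None:
        self._blobs: dict[str, bytes] = {}

    def put(self, data: bytes) -> str:
        ref_id = f"ref_{uuid.uuid4().hex[:12]}"
        self._blobs[ref_id] = data
        return ref_id

    def get(self, ref_id: str) -> bytes:
        return self._blobs[ref_id]


def store_artifact(
    file_name: str,
    upload: Callable[[str, bytes], None],
    refs: RefStore,
    content: Optional[str] = None,
    content_encoding: str = "utf-8",
    content_ref_id: Optional[str] = None,
) -> int:
    """Resolve exactly one input mode to bytes, upload them, return the size."""
    if (content is None) == (content_ref_id is None):
        raise ValueError("pass exactly one of content or content_ref_id")
    if content_ref_id is not None:
        data = refs.get(content_ref_id)  # bytes never enter the agent's context
    elif content_encoding == "base64":
        data = base64.b64decode(content)
    elif content_encoding == "utf-8":
        data = content.encode("utf-8")
    else:
        raise ValueError(f"unsupported content_encoding: {content_encoding!r}")
    upload(file_name, data)
    return len(data)


def base64_length(n_bytes: int) -> int:
    # Every 3 input bytes become 4 output characters, padded to a multiple of 4.
    return 4 * ((n_bytes + 2) // 3)


refs, bucket = RefStore(), {}


def upload(name: str, data: bytes) -> None:
    bucket[name] = data  # stand-in for an object-storage PUT


pdf_ref = refs.put(b"%PDF-1.7 example bytes")  # e.g. what tailor_resume returns
store_artifact("Resume_SWE.pdf", upload, refs, content_ref_id=pdf_ref)
print(base64_length(200 * 1024))  # 273068 characters, about 267KB
```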