An LLM agent platform produces a peculiar kind of telemetry. It's not just request/response with a status code — every workflow emits dozens of nested spans, hundreds of structured events, kilobytes of log lines, and at the end of all that you still need to answer the simple question "did this turn cost more tokens than usual, and if so, which tool call was responsible?" Get observability wrong and the platform becomes opaque. You'll spend more time debugging your debugging than fixing actual product issues.

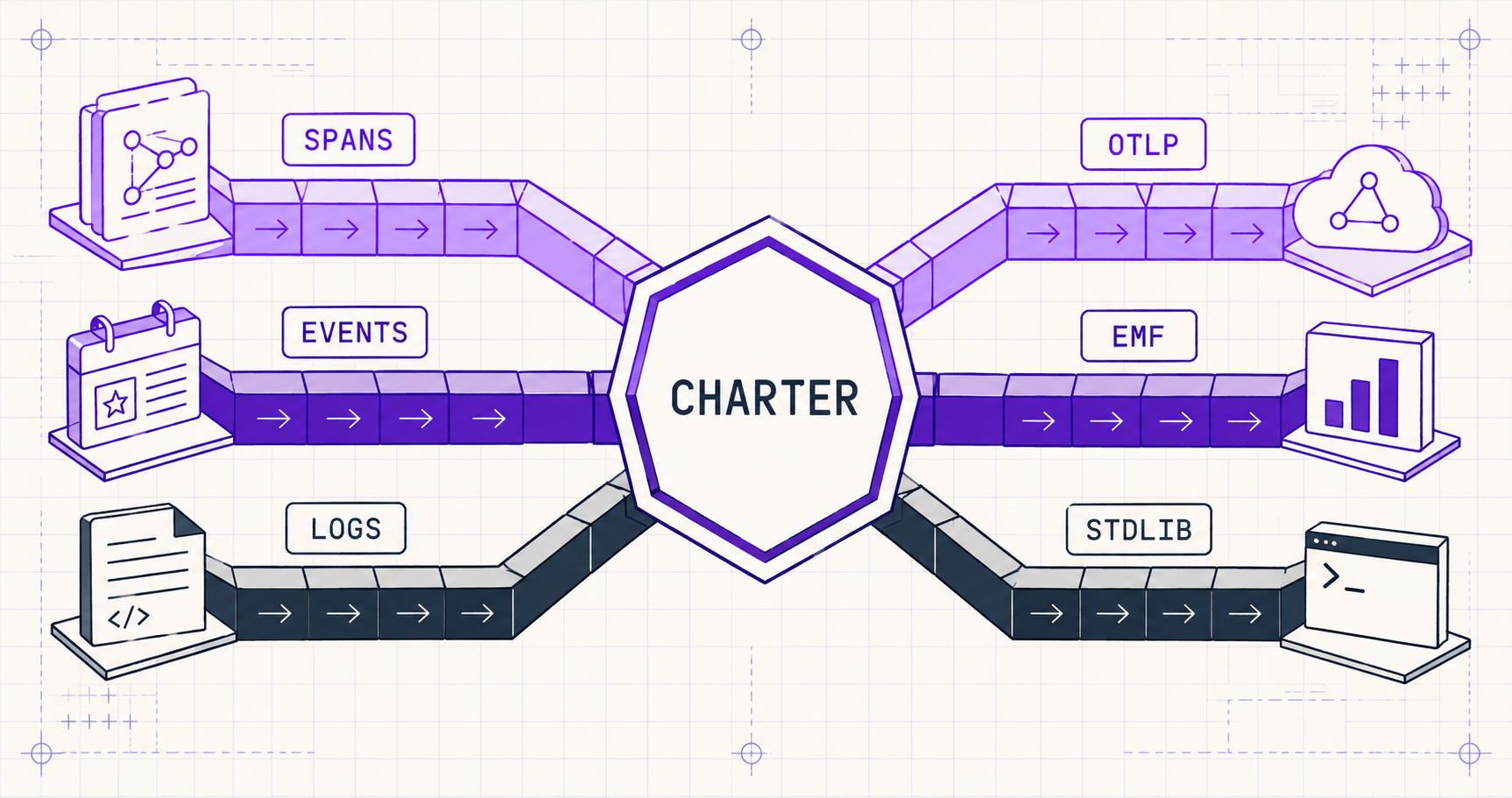

This post is about how Convilyn's observability arrived where it is: one seam for OTel spans, one transport for events, one redaction policy, and one place where trace IDs come from. We didn't get there in a straight line. The first three months of work produced three parallel transports doing the same job, and the next month was spent collapsing them — what we now call the seven-phase observability charter. The goal of this post is to make the charter useful to other teams building agent infrastructure.

For grounding, we leaned on three external bodies of work throughout: OpenTelemetry's Semantic Conventions for Generative AI (the closest thing to a public allowlist standard for LLM telemetry), Anthropic's engineering writing on building effective agents (which makes structured event emission a first-class concern), and Honeycomb's Observability Engineering book — particularly the chapter on attribute cardinality, which is the single most under-appreciated cost driver in production telemetry.

Three Transports Doing One Job

The state of observability at the end of alpha looked like this. We had a span layer using OpenTelemetry, but spans were created in three different places: a context manager in the LangGraph node base class, ad-hoc tracer.start_span calls scattered across orchestrator code, and a Phoenix-flavored using_project helper that wrapped LLM calls. Each path produced spans the others didn't know about.

We had a structured event layer using a LogEvent Pydantic model — fine, except an evaluation team had quietly forked it into EvaluationEvent, and the latency team had forked it again into LagEvent. Three near-identical models with three near-identical sinks. Each owned by one team, each silently drifting from the others.

And we had logs — emitted via stdlib logging in some files, via loguru.logger in others, with a bridge that re-routed loguru into stdlib at startup. The bridge worked, sort of. It also lost structured fields whenever the loguru side used a feature stdlib didn't support.

Each of these transports had been added with good intent. None had been retired when its replacement landed. The result was that asking "show me the events for trace X" required querying three different tables, deduplicating across two trace_id formats, and accepting that 10–15% of events would have a trace_id of 00000000-0000-0000-0000-000000000000 because they were emitted before the request context propagated. We had built three transports doing one job, and none of them did the job well.

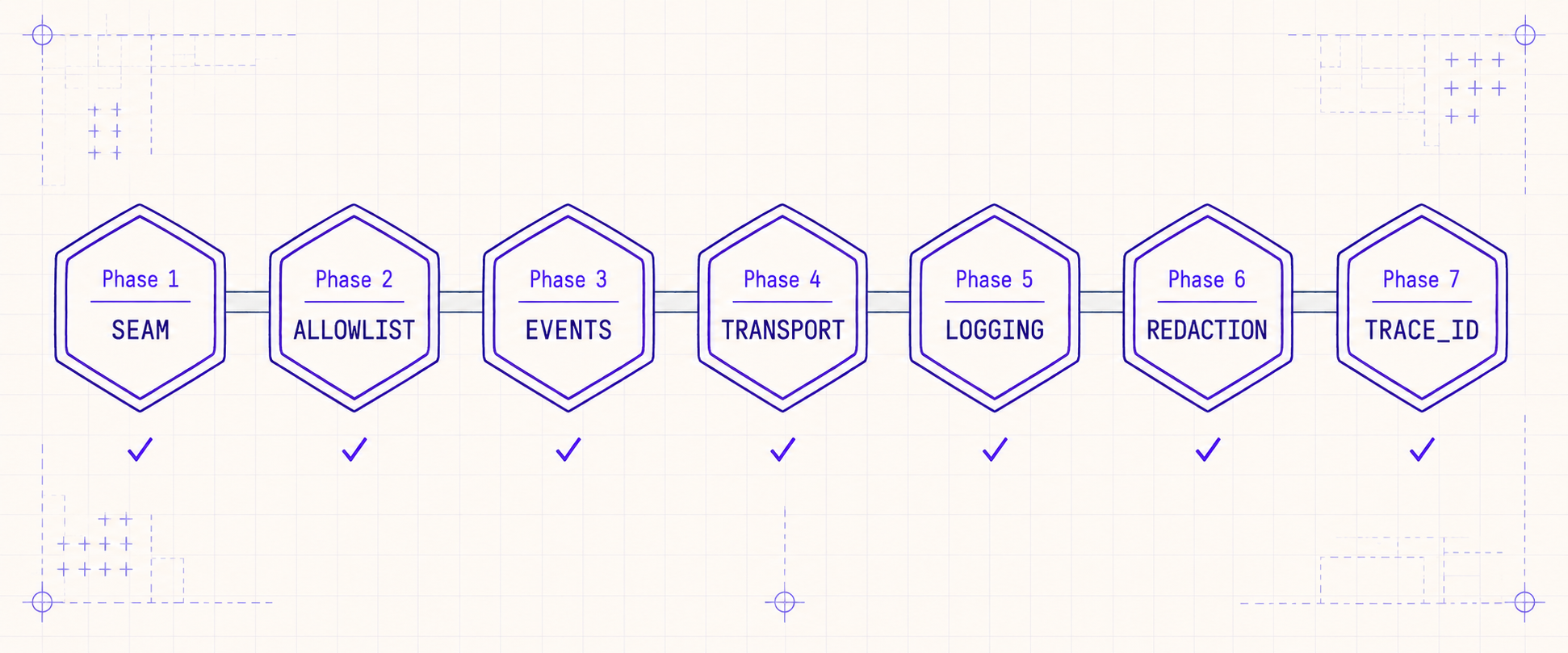

The charter was not a rewrite. The vast majority of code paths already emitted to one of the three transports. The work was to delete two of them, route everything through the survivor, and add governance gates so the parallel paths couldn't grow back. We did this in seven phases, each delivering an independently-shippable improvement, none requiring a coordinated cutover. The order matters — earlier phases unlock later ones — and the rest of this post walks through each phase as we lived it.

The Seven Phases at a Glance

| Phase | Goal | Outcome |
|---|---|---|
| 1: Seam | One OTel-enrichment seam | record_node_attributes / record_node_event / safe_set_attributes become the only public entry points. |
| 2: Allowlist | Bound the cardinality cost | 72-key ALLOWED_SPAN_ATTRIBUTES + sampling SOP, enforced as a PR-review gate. |
| 3: Events | Collapse fork into siblings | LogEvent / LagEvent / EvaluationEvent become equal-weight siblings sharing one transport. |
| 4: Transport | One sink, three categories | log_event() flattens all sibling categories into LogEvent.sanitized_attributes; no parallel pipelines. |
| 5: Logging | Stdlib logging only | loguru deleted; from loguru import logger raises ImportError; a guard test blocks re-introduction. |
| 6: Redaction | One policy, applied everywhere | redact_args / summarize_payload / SENSITIVE_KEY_RE are the one redaction surface; agent-side shim kept only as a re-export and marked for retirement. |
| 7: Trace IDs | W3C-canonical, span-derived | current_trace_ids() reads from the active OTel span; uuid4 minting deleted. |

Reading this table will make any team that's done a similar consolidation nod knowingly. Each row looks small. Each took about a week of work plus a week of cleanup. The cumulative effect is that the platform now produces telemetry an on-call engineer can actually use.

Phase 1–2: The Single Seam and the Allowlist

The first move was to declare a single OTel-enrichment seam. Not a new abstraction — we already had attribute-setting helpers — but a discipline: domain code never imports OpenTelemetry directly. Spans are created by the LangGraph node base class. Attributes are set via three named functions:

```python
# app/core/observability.py: the entire public surface

def record_node_attributes(node_name: str, **attrs) -> None:
    """Set attributes on the current span, with allowlist + redaction."""

def record_node_event(name: str, **attrs) -> None:
    """Emit a span event with allowlist + redaction."""

def safe_set_attributes(span, **attrs) -> None:
    """For nodes that already hold a span reference."""

# Added in Phase 7; it lives on the same public surface.
def current_trace_ids() -> tuple[str, str]:
    """W3C-canonical (trace_id_hex, span_id_hex) from the active span."""
```

Anything else (tracer.start_span, span.set_attribute, phoenix.trace.using_project) is removed and forbidden by a unit test that imports the orchestrator package and asserts no module references those symbols. Domain code that wants to add a new span attribute calls record_node_attributes(name, my_key=value) and that's all.

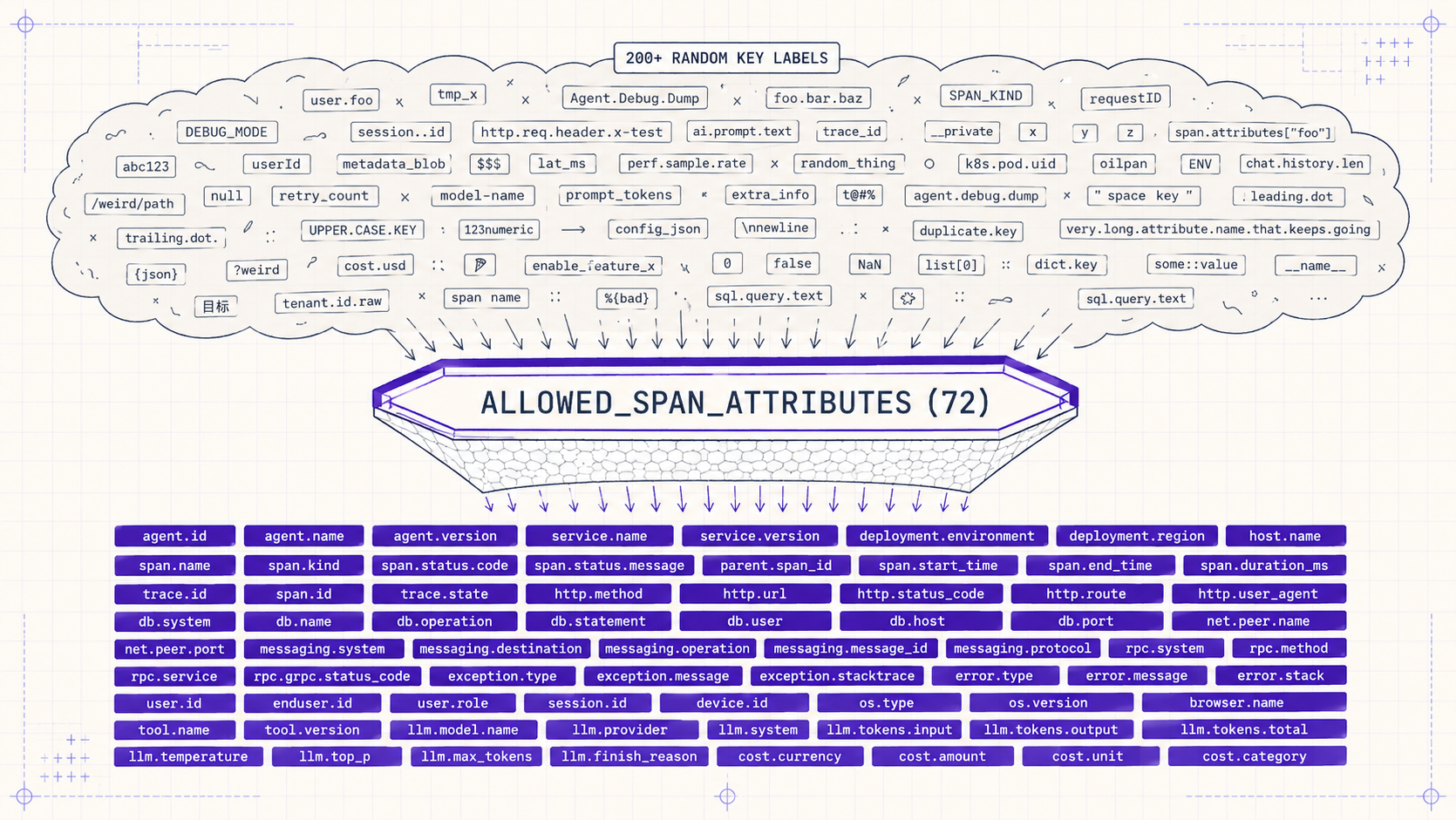
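
To make the "forbidden by a unit test" idea concrete, here is a minimal sketch of one way such a check could work. It is not the test described above, which imports the orchestrator package; this version swaps in a static scan of source text with the standard-library ast module. The helper name find_forbidden_references and the FORBIDDEN_ATTRS set are assumptions made for illustration.

```python
import ast

# Hypothetical deny-list: attribute names that only the seam module may touch.
FORBIDDEN_ATTRS = {"start_span", "set_attribute", "using_project"}


def find_forbidden_references(source: str) -> list[tuple[int, str]]:
    """Return sorted (line_number, name) pairs for forbidden references in source."""
    hits: list[tuple[int, str]] = []
    for node in ast.walk(ast.parse(source)):
        # Catches calls such as tracer.start_span(...) or span.set_attribute(...).
        if isinstance(node, ast.Attribute) and node.attr in FORBIDDEN_ATTRS:
            hits.append((node.lineno, node.attr))
        # Catches "from phoenix.trace import using_project" style imports.
        elif isinstance(node, ast.ImportFrom):
            for alias in node.names:
                if alias.name in FORBIDDEN_ATTRS:
                    hits.append((node.lineno, alias.name))
    return sorted(hits)
```

A guard test built on this would read every file under the package being protected and assert that the combined list of hits is empty, with the seam module itself excluded from the scan.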

This single seam unlocked the second phase: bounded cardinality. OpenTelemetry's attribute-naming guide warns about high-cardinality keys (user IDs, request bodies, anything with unbounded distinct values), and Honeycomb's book is even blunter: cardinality is what your storage bill is. We added an ALLOWED_SPAN_ATTRIBUTES constant — a 72-key set of permitted keys — and made record_node_attributes drop any key not in the set with a debug log.

The 72 keys cover the dimensions we want to query on: agent identity (agent.id, agent.kind), tool execution (tool.name, tool.duration_ms, tool.outcome), LLM calls (llm.model, llm.tokens.input, llm.tokens.output, llm.cache.hit), workflow control (workflow.id, workflow.phase, workflow.iteration), and a handful of error/retry flags. Adding a new key is a PR review gate: it must appear in OTEL_GOVERNANCE.md with a one-line justification before the allowlist accepts it. The PR review feels heavyweight for a single key. The discipline pays off: cardinality-driven cost growth went from a recurring quarterly fight to a non-issue.
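
As a rough illustration of the drop-with-a-debug-log behavior, the sketch below filters a dictionary of attributes against an allowlist. It is a simplified stand-in, not the production seam: the helper name filter_allowed and the logger name are assumptions, and the allowlist shown is a four-key subset of the 72 keys.

```python
import logging

logger = logging.getLogger("observability.allowlist")  # hypothetical logger name

# Illustrative subset only; the real allowlist described above has 72 keys.
ALLOWED_SPAN_ATTRIBUTES = frozenset(
    {"tool.name", "tool.duration_ms", "tool.outcome", "llm.model"}
)


def filter_allowed(attrs: dict[str, object]) -> dict[str, object]:
    """Keep allowlisted keys; drop everything else with a debug log line."""
    kept: dict[str, object] = {}
    for key, value in attrs.items():
        if key in ALLOWED_SPAN_ATTRIBUTES:
            kept[key] = value
        else:
            # Log the key only, never the value, so dropped data cannot leak.
            logger.debug("dropping non-allowlisted span attribute %r", key)
    return kept
```

Because the check is a set lookup on key names, it adds almost nothing to the hot path, and the set itself stays easy to grep in review.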

One subtle decision worth flagging: the seam doesn't validate types or values, only key names. A call like record_node_attributes("tool_runner", tool_duration_ms="seven seconds") will happily attach the string "seven seconds" to the span. The reasoning is that loose types make the seam usable from notebooks and one-off scripts; a type schema would create friction at exactly the moment when an engineer needs to add quick instrumentation. The query layer downstream handles type coercion. This is a deliberate departure from OpenAI's eval cookbook, which encourages stricter Pydantic schemas at every emission point; we found the schema friction outweighed the benefit at our scale.

Phase 3–4: The Event Sibling Pattern

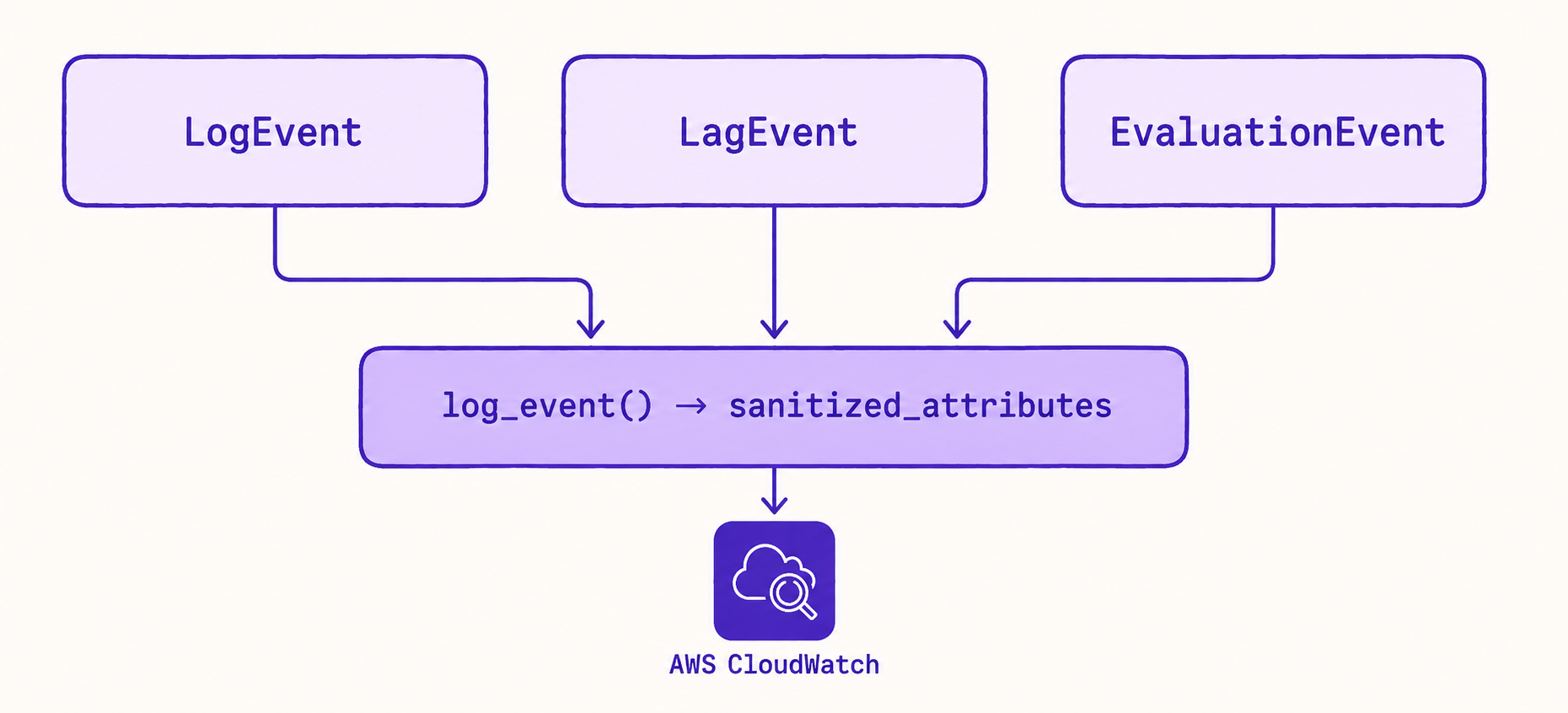

With spans under control, we turned to events. Recall the problem: LogEvent, EvaluationEvent, and LagEvent were three Pydantic models with overlapping fields, three sinks, three trace_id resolution strategies. None of them was wrong. They had simply grown without coordination.

The fix was a sibling pattern, borrowed in spirit from Fowler's domain-event catalog and from Anthropic's writing on agent event emission. Instead of merging the three models into one mega-model (which creates a god-object that nobody owns) or keeping them separate (status quo), we declared all three to be siblings of LogEvent. Each retains its own typed fields. Each flattens into a single LogEvent.sanitized_attributes dictionary on the way out. The transport — the function called log_event() — accepts any sibling and routes through one path.

```python
# Sibling event pattern
from datetime import datetime
from typing import Any

from pydantic import BaseModel, Field


class LogEvent(BaseModel):
    timestamp: datetime
    level: str
    message: str
    sanitized_attributes: dict[str, Any] = Field(default_factory=dict)


class LagEvent(BaseModel):
    """Latency event, sibling of LogEvent."""
    timestamp: datetime
    operation: str
    duration_ms: float
    bucket: str  # "fast" | "normal" | "slow"


class EvaluationEvent(BaseModel):
    """Eval verdict event, sibling of LogEvent."""
    timestamp: datetime
    rubric: str
    score: float
    verdict: str  # "pass" | "soft_fail" | "hard_fail"


def emit_lag_event(event: LagEvent) -> None:
    # log_event() is the single shared transport, defined elsewhere.
    log_event(LogEvent(
        timestamp=event.timestamp,
        level="INFO",
        message=f"lag.{event.operation}",
        sanitized_attributes={
            "lag.duration_ms": event.duration_ms,
            "lag.bucket": event.bucket,
        },
    ))
```

Adding a new event category (say, SecurityEvent for prompt injection detection results) is now a four-step recipe documented in evaluation_observability_howto.md: declare a new sibling Pydantic model, write a flatten_ helper, write an emit_ wrapper that calls log_event(), and add the new attribute keys to ALLOWED_SPAN_ATTRIBUTES. No new sink. No new transport. The data lands in the same query layer the rest of the events land in.
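
The four-step recipe can be sketched end to end. Everything below is hypothetical: the SecurityEvent fields, the security.* key names, and the in-memory SINK standing in for the real transport are all assumptions, and dataclasses replace Pydantic only so the sketch runs without third-party packages.

```python
from dataclasses import dataclass, field
from datetime import datetime
from typing import Any


@dataclass
class LogEvent:
    timestamp: datetime
    level: str
    message: str
    sanitized_attributes: dict[str, Any] = field(default_factory=dict)


SINK: list[LogEvent] = []  # stand-in for the real sink


def log_event(event: LogEvent) -> None:
    """Stand-in for the single shared transport."""
    SINK.append(event)


ALLOWED_SPAN_ATTRIBUTES = {"lag.duration_ms", "lag.bucket"}  # illustrative subset


# Step 1: declare the sibling model (fields are assumed for illustration).
@dataclass
class SecurityEvent:
    timestamp: datetime
    detector: str
    flagged: bool
    confidence: float


# Step 2: flatten the typed fields into namespaced attribute keys.
def flatten_security_event(event: SecurityEvent) -> dict[str, Any]:
    return {
        "security.detector": event.detector,
        "security.flagged": event.flagged,
        "security.confidence": event.confidence,
    }


# Step 3: the emit wrapper routes through the one transport.
def emit_security_event(event: SecurityEvent) -> None:
    log_event(LogEvent(
        timestamp=event.timestamp,
        level="WARNING" if event.flagged else "INFO",
        message=f"security.{event.detector}",
        sanitized_attributes=flatten_security_event(event),
    ))


# Step 4: register the new keys in the allowlist.
ALLOWED_SPAN_ATTRIBUTES |= {
    "security.detector", "security.flagged", "security.confidence",
}
```

The category-specific knowledge stays in the model and its flatten helper, while log_event() never learns that a new category exists.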

The sibling pattern is the single most important decision in the charter. It accepts that different categories of events have legitimately different shapes — latency events care about duration buckets, eval events care about rubrics, log events care about levels — without letting that diversity fork the transport layer. The categories diverge at the model; they converge at the sink. This is the inverse of how we'd accidentally built the system, and once we'd articulated it, every other consolidation followed naturally.

Phase 5: Retiring Loguru

Phase 5 sounds the smallest and was the most contested. We had loguru in roughly 40 files, mostly in older orchestrator code. The bridge that re-routed loguru into stdlib worked, mostly. The argument for keeping it was inertia. The argument for retiring it was that every time someone added a new file using from loguru import logger, the next person to refactor that area had to figure out which logger they were dealing with.

We chose retirement. The migration was mechanical — replace from loguru import logger with logger = logging.getLogger(__name__), replace logger.info(f"foo {x}") with logger.info("foo %s", x), fix the handful of cases where loguru's structured fields had been used. About a day of work plus a day of fixing tests that depended on loguru's specific format.
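
For readers doing the same migration, this is roughly what a converted call site looks like. It is a sketch rather than code from the platform: report_tool_call and its field names are hypothetical, and passing structured fields through the standard extra argument is one option among several.

```python
import logging

# Replaces "from loguru import logger" at the top of the module.
logger = logging.getLogger(__name__)


def report_tool_call(tool_name: str, duration_ms: float) -> None:
    """Log a tool call with lazy %-style formatting plus structured fields."""
    logger.info(
        "tool %s finished in %.1f ms",  # formatted only if the record is emitted
        tool_name,
        duration_ms,
        # Keys in extra become attributes on the LogRecord, where a JSON
        # formatter can pick them up. They must not collide with built-in
        # LogRecord attribute names such as "name" or "message".
        extra={"tool_name": tool_name, "duration_ms": duration_ms},
    )
```

The lazy arguments are more than style: the string is never built when the level is disabled, and log aggregators can group records by the constant message template.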

The interesting part is what we did to prevent re-introduction. We don't have a CI gate that lints imports — those gates produce noisy false positives every time someone refactors. Instead we have a single guard test:

```python
# tests/unit/core/test_logging_config.py
import pytest


def test_loguru_bridge_removed_from_logging_config():
    """Importing loguru must fail loudly. If this test starts failing,
    someone re-added the bridge. Reject the PR; do not 'fix' the test."""
    with pytest.raises(ImportError):
        from loguru import logger  # noqa: F401
```

The test asserts that loguru is uninstalled. If a developer adds loguru to pyproject.toml for any reason, the test fails on first run with a docstring that explains the rule. This is a pattern we use elsewhere (guard tests for invariants that aren't naturally enforceable in a linter) and it's been more durable than CI rules. The test is grep-able, the failure message is human-readable, and there's no magical configuration to forget.

Phase 6: One Redaction Policy

By Phase 6 we had one span seam and one event transport, but redaction was still a per-emitter concern. The orchestrator had its own redact_args implementation in app/orchestrator/agents/events/redaction.py. The HTTP layer had a separate redactor for request bodies. The eval pipeline had a third one for snapshot fixtures. Three implementations, three regex sets, three teams arguing about whether customer_id was sensitive (one said yes, two said no).

We chose the most paranoid implementation as canonical, moved it to app/core/redaction.py, and made the other two import from it. The canonical surface is small:

```python
# app/core/redaction.py: the only redaction surface
import re
from typing import Any

SENSITIVE_KEY_RE: re.Pattern  # matches *email*, *_id, *secret*, *token*, *key*

def redact_args(args: dict[str, Any]) -> dict[str, Any]:
    """Replace sensitive values with their redacted form."""

def summarize_payload(payload: Any, max_chars: int = 200) -> str:
    """Type-aware short summary; never returns raw content."""
```

The orchestrator-side redaction.py still exists today as a backward-compat shim: it re-exports from app/core/redaction so that existing imports keep working. The shim is documented as planned-for-deletion, and a follow-up phase will retire it once the last few imports are migrated. This is one of those cases where leaving a shim is correct: the cost of leaving it is one extra file; the cost of a coordinated rename across forty files is a large PR that's painful to review and risky to land. Shims that explicitly mark themselves as transitional are not technical debt, they're a deliberate phasing tactic.

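To show what a key-pattern redactor of this shape might look like, here is a minimal sketch. It is not the canonical implementation: the regex is a guess based on the patterns named in the comment above, and the "[REDACTED]" placeholder, the recursion into nested dicts, and the summary format are all assumptions.

```python
import re
from typing import Any

# Hypothetical pattern approximating *email*, *_id, *secret*, *token*, *key*.
SENSITIVE_KEY_RE = re.compile(r"email|_id$|secret|token|key", re.IGNORECASE)
REDACTED = "[REDACTED]"  # assumed placeholder


def redact_args(args: dict[str, Any]) -> dict[str, Any]:
    """Return a copy of args with values under sensitive keys replaced."""
    redacted: dict[str, Any] = {}
    for key, value in args.items():
        if SENSITIVE_KEY_RE.search(key):
            redacted[key] = REDACTED
        elif isinstance(value, dict):
            redacted[key] = redact_args(value)  # recurse into nested payloads
        else:
            redacted[key] = value
    return redacted


def summarize_payload(payload: Any, max_chars: int = 200) -> str:
    """Describe the payload's type and size without echoing its content."""
    if isinstance(payload, (str, bytes, list, tuple, set)):
        summary = f"<{type(payload).__name__} len={len(payload)}>"
    elif isinstance(payload, dict):
        summary = f"<dict keys={len(payload)}>"
    else:
        summary = f"<{type(payload).__name__}>"
    return summary[:max_chars]
```

Matching on key names rather than values is deliberately blunt: it over-redacts (any key ending in _id is hidden), which is the paranoid trade-off the canonical policy chose.
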
Phase 7: W3C-Canonical Trace IDs

The seventh phase fixed a quieter but more pervasive bug. Across the codebase, multiple places were minting trace IDs — usually str(uuid.uuid4()) — at the boundary they cared about. The orchestrator minted one. The MCP gateway minted one. The HTTP middleware minted one. None of them agreed.

The OpenTelemetry-native answer is that trace IDs come from the active span — W3C Trace Context defines a 32-character hex string for the trace and a 16-character hex string for the span, and OTel propagates these through the standard traceparent header. The fix was to define a single function:

```python
# app/core/observability.py
from opentelemetry import trace


def current_trace_ids() -> tuple[str, str]:
    """Return (trace_id_hex, span_id_hex) from the active OTel span.

    Returns ('', '') if no valid span is active, so callers that need
    real IDs must run inside a span context.
    """
    ctx = trace.get_current_span().get_span_context()
    if not ctx.is_valid:
        # Without this check the IDs would format as all zeros.
        return ("", "")
    return (
        format(ctx.trace_id, "032x"),
        format(ctx.span_id, "016x"),
    )
```

And then to delete every uuid.uuid4() trace_id call site. The orchestrator's parallel trace_context.py module, which had become a substantial subsystem with its own propagation logic, was deleted entirely. The MCP gateway switched to reading traceparent from incoming headers. The HTTP middleware hands the trace_id to the OTel context and stops minting its own.
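
For reference, the traceparent header has a simple wire format: version, trace ID, parent span ID, and flags, all lowercase hex joined by hyphens. The sketch below parses the version-00 layout with the standard library only. In practice OpenTelemetry's W3C propagator does this work; parse_traceparent is a hypothetical helper shown only to make the format concrete.

```python
import re

# Version 00 layout: 00-<32 hex trace-id>-<16 hex parent-id>-<2 hex flags>
_TRACEPARENT_RE = re.compile(r"^00-([0-9a-f]{32})-([0-9a-f]{16})-([0-9a-f]{2})$")


def parse_traceparent(header: str) -> tuple[str, str]:
    """Return (trace_id_hex, span_id_hex), or ('', '') if the header is invalid."""
    match = _TRACEPARENT_RE.match(header.strip())
    if match is None:
        return ("", "")
    trace_id, span_id, _flags = match.groups()
    # The W3C spec treats all-zero trace and parent IDs as invalid.
    if set(trace_id) == {"0"} or set(span_id) == {"0"}:
        return ("", "")
    return (trace_id, span_id)
```

A str(uuid.uuid4()) value fails this parse on both length and layout, which is why the minted IDs could never be joined against span-derived ones.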

The cleanup unlocked a feature we'd been wanting: cross-system trace correlation. A workflow that crosses the FastAPI boundary, the MCP boundary, the LLM gateway, and the evaluation pipeline now shares one trace_id end-to-end. You can paste the hex string into CloudWatch Logs Insights and pull every event for that workflow regardless of which subsystem emitted it. Before the charter, this required a SQL join across three tables on three different ID columns and an apologetic Slack message about the 12% of events with null trace_ids. After the charter, it's one query.

Putting It Together: Adding a New Evaluator

The clearest test of the charter is whether adding a new piece of telemetry feels easy. Suppose we want to instrument a new evaluator, say a "factuality" rubric that checks whether agent outputs contain unsupported claims. Pre-charter, this would have meant deciding which event model to extend, which sink to write to, which redaction to apply, and how to thread trace IDs through. Post-charter, the recipe is three short steps:

```python
from datetime import datetime, timezone

# 1. Declare new attribute keys in ALLOWED_SPAN_ATTRIBUTES
ALLOWED_SPAN_ATTRIBUTES |= {
    "eval.factuality.score",
    "eval.factuality.unsupported_claim_count",
}

# 2. Use the existing EvaluationEvent sibling; no new model needed
emit_evaluation_event(EvaluationEvent(
    timestamp=datetime.now(timezone.utc),  # utcnow() is deprecated since Python 3.12
    rubric="factuality",
    score=score,
    verdict="pass" if score >= 0.85 else "soft_fail",
))

# 3. Add a span attribute on the eval node
record_node_attributes(
    node_name="evaluator",
    eval_factuality_score=score,
    eval_factuality_unsupported_claim_count=count,
)
```

Trace IDs propagate automatically: the EvaluationEvent emitter calls current_trace_ids() internally. Redaction applies automatically: record_node_attributes runs values through redact_args. Sink routing is automatic: emit_evaluation_event flattens through log_event(). The new evaluator's telemetry is queryable in the same dashboards as everything else, with the same trace correlation, on its first deploy.

This is the observable difference the charter is supposed to make. The first evaluator we added pre-charter took two days of integration plumbing and produced telemetry that didn't show up correctly in dashboards for a week. The first evaluator added post-charter took twenty minutes and was immediately queryable.

Five Lessons from Doing This

If you're staring at your own observability stack and recognizing the symptoms — three transports, a loguru bridge, scattered tracer.start_span calls, fights over redaction — these are the lessons that took us the longest to internalize.

1. Charter before code. The thing we did right was naming the project a "charter" rather than a refactor. A charter is permitted to delete things. A refactor is expected to preserve behavior. The seven phases all delete code; that's the point. If we'd framed this as a refactor, we'd still have the loguru bridge.

2. Allowlists, not denylists. An allowlist of 72 attribute keys is small, finite, and grep-able. A denylist of "high cardinality keys we noticed" is a list that grows whenever someone adds a new bug. OTel's attribute naming guide hints at this; Honeycomb's book argues it explicitly. Adopt the discipline early — adding the allowlist after a year of unconstrained attributes means a long migration to remove existing high-cardinality keys.

3. Siblings, not subclasses. The single biggest design choice in the charter was treating LagEvent and EvaluationEvent as siblings of LogEvent, not subclasses. Subclasses imply an "is-a" relationship that pulls inheritance into queries, fan-out into transports, and confusion into ownership. Siblings can have different fields, share a transport, and be owned by different teams. If you're tempted to subclass an event model, name a sibling instead.

4. Guard tests beat lint rules. The loguru ImportError test is more durable than any linter we tried. Linters get suppressed, configurations rot, and the next developer assumes the rule was an accident. A guard test fails loudly with a human-readable message that explains the rule. We now use guard tests for several invariants — no tracer.start_span outside the seam, no uuid4 in trace_id contexts, no Pydantic BaseModel imports in the agent runtime — and they outlast linter configs.

5. Retire the parallel pathway, don't bridge it. Bridges are seductive because they let you defer the hard decision. Loguru-to-stdlib, parallel-trace-id-to-OTel, three-event-models-to-one — every bridge we added was supposed to be temporary. None retired itself. The pattern that worked was: do the migration, then make the old pathway physically impossible (uninstall the package, delete the module). Bridges encourage drift; deletions enforce convergence.

External Pointers Worth Reading

Several pieces of writing materially shaped how we thought about this work. They're worth your time if you're staring at similar problems.

OpenTelemetry's Semantic Conventions for Generative AI is the closest thing the industry has to a public allowlist. Adopting their key names directly (gen_ai.request.model, gen_ai.usage.input_tokens) saves you from inventing your own and lets you connect cleanly to any vendor that follows the spec.

Anthropic's "Building effective agents" doesn't focus on observability, but the implicit position throughout — that agents emit structured, interpretable events as a primary engineering artifact — is the right starting frame. If you treat events as first-class output rather than diagnostic noise, the rest of the charter follows.

Honeycomb's "Observability Engineering" book, particularly its treatment of cardinality and of how observability differs from monitoring, is the reference text. The cardinality argument is what justifies the allowlist; the cost-of-cardinality math is what gets the charter past leadership.

AWS's writing on Embedded Metric Format (EMF) is the canonical reference for the technique we use to surface latency histograms in CloudWatch without paying for custom metrics. Article 2 in this series goes into how we use it in detail; if you're on AWS, EMF is the cheap, native way to get histograms.

LangChain's LangSmith tracing schema is worth studying as a counterexample. LangSmith doesn't gate keys, which makes it spectacular for ad-hoc debugging and expensive at scale. We use both — LangSmith in development for its rich UX, our gated charter in production for its predictable cost. Reading their schema sharpened our thinking about which discipline goes where.

Observability is not a feature you add to a platform. It's a discipline the platform enforces on itself. The platform gets to be opinionated about what gets emitted, who owns each transport, and where the trace ID comes from. Get that right and the dashboards build themselves.

What's Next

Beta v0.1.0 is the consolidation. Beta v0.2.0, the next post in this series, is what the charter unlocked: turn-level LLM latency, tool-level MCP latency, retry classification, multi-agent traceability, and a Sentry v8-to-v10 migration that exposed a DSN URL guard we should have had since day one. None of that work was possible while three transports were doing one job. With the charter in place, every new instrumentation we ship lands in the right tables, with the right trace IDs, redacted by the right policy, on its first deploy.

The broader trajectory is to make every reasoning step the agent takes as inspectable as a traditional function's execution. The charter is the seam that makes that possible. Article 2 is what we built on top of it.