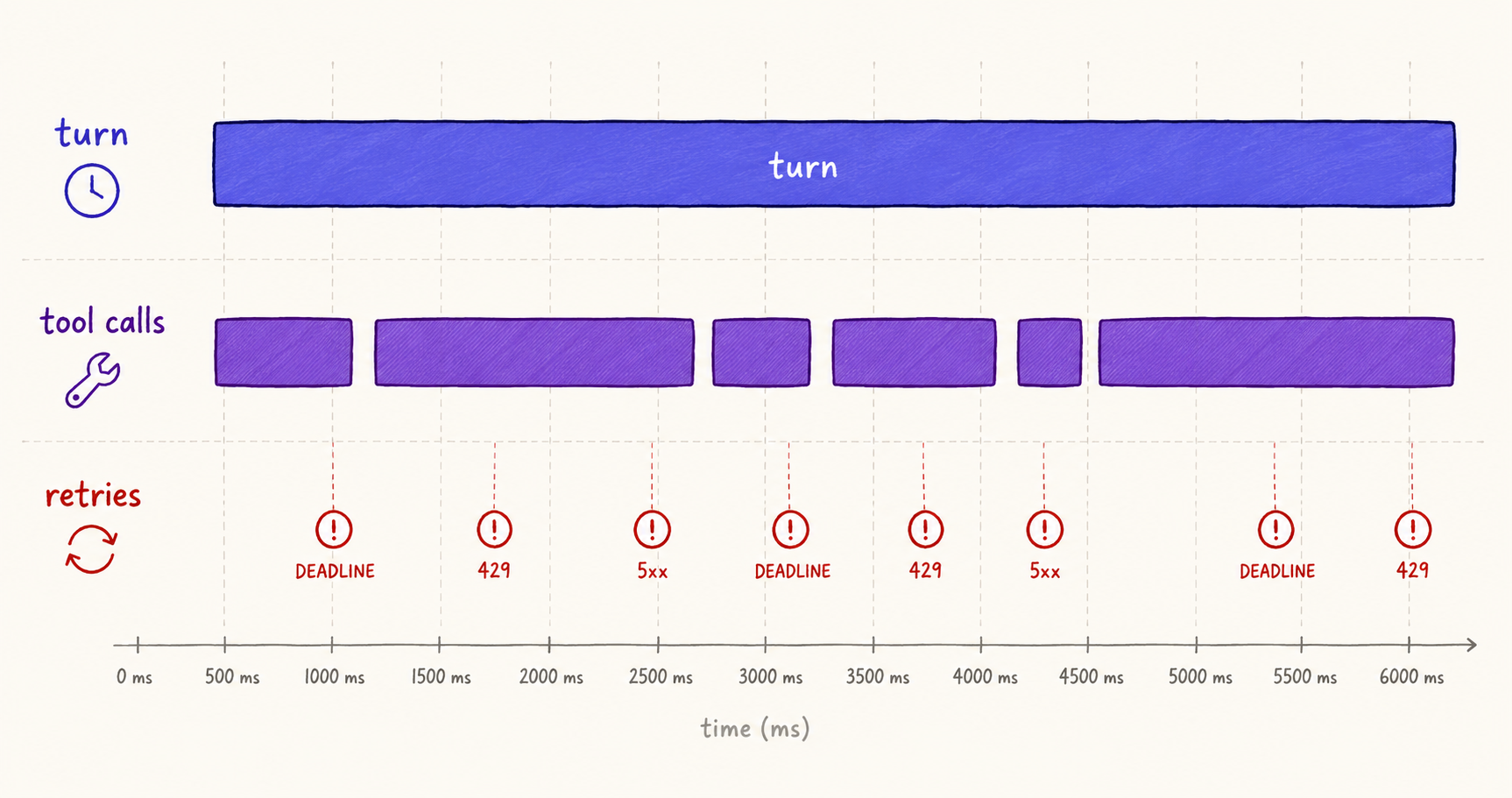

If the previous post was about discipline — collapsing three transports into one seam — this one is about the visibility the discipline bought us. With every span flowing through one allowlist and every event flattening into one transport, we could finally instrument the things we'd been guessing at for months: how long does a single LLM turn actually take, broken out by model, broken out by tool calls inside the turn? When the system retries, what is it retrying — a deadline, a 429, a 5xx, a model overload? When a multi-agent supervisor hands off to a specialist, do retries on the specialist count against the supervisor's budget?

The answers, as it turned out, were boring in the best possible way. Our p50 turn latency was fine. Our p99 was being eaten by tool calls — specifically, two tools that were doing synchronous work that should have been async. Our retry rate was low, but the small fraction we did retry was almost entirely model_overloaded — Anthropic's 529 Overloaded response — and the right action there is fallback to a different model, not exponential backoff. Boring answers, but only because we'd built the instrumentation to expose them.

This article walks through the work that landed in four PRs (#251, #255, #256, and #262): per-turn and per-tool latency, retry classification, the multi-agent HandoffRecord, and the Sentry v8-to-v10 migration that closed an SSRF-class bug we hadn't spotted.

The Agent-Level Latency Blind Spot

The first version of latency instrumentation we shipped tracked one number per agent invocation: time from request received to response sent. It's a useful number for SLA dashboards. It is almost useless for diagnosis.

Here's why. A Goal Lane workflow is a sequence of model turns interleaved with tool calls. A single turn might call three tools — extract text, classify content, draft summary — and each tool might take anywhere from 50ms to 30 seconds depending on file size and downstream services. The model itself takes anywhere from 800ms to 8 seconds depending on input length and output length. The total agent latency is the sum, but the distribution of where the time went is the diagnostic signal.

When agent-level p99 spikes from 12s to 28s, the spike is real, but there are a dozen things that could cause it. Was the model slow today? Was the cache hit rate down? Was a tool stuck on a slow upstream? Was the agent hitting more iterations because the model was being indecisive? You can't tell from the p99 number alone. You need turn-level latency and tool-level latency emitted as their own histograms.

This is the same argument Google's SRE book makes about request latency: an average hides the tail, so a system can look healthy on the mean while a meaningful fraction of requests are slow. The book argues for bucketed histograms over single averages; we extend that to explicit p50/p95/p99 cuts, broken out by every dimension that affects latency. For agents, those dimensions are model, tool name, and turn index.
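As an illustration of what turn-level and tool-level timing can look like, here is a minimal sketch, not the production instrumentation. The names `TurnRecorder`, `LatencySample`, and `timed` are hypothetical, and samples are collected in a list rather than written to any sink.

```python
import time
from contextlib import contextmanager
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class LatencySample:
    operation: str              # "turn" or "tool"
    model: str
    turn_index: int
    tool_name: Optional[str]    # None for the model turn itself
    duration_ms: float


@dataclass
class TurnRecorder:
    """Collects one latency sample per timed block (hypothetical helper)."""
    model: str
    samples: List[LatencySample] = field(default_factory=list)

    @contextmanager
    def timed(self, operation: str, turn_index: int, tool_name: Optional[str] = None):
        start = time.perf_counter()
        try:
            yield
        finally:
            # Record the sample even if the timed block raised.
            elapsed_ms = (time.perf_counter() - start) * 1000.0
            self.samples.append(
                LatencySample(operation, self.model, turn_index, tool_name, elapsed_ms)
            )


if __name__ == "__main__":
    recorder = TurnRecorder(model="example-model")
    with recorder.timed("turn", turn_index=0):
        with recorder.timed("tool", turn_index=0, tool_name="extract_text"):
            time.sleep(0.01)  # stand-in for real tool work
    for sample in recorder.samples:
        print(sample)
```

Because the tool block nests inside the turn block, the tool sample is recorded first and the turn sample always covers at least the tool's duration. Each sample carries the three dimensions named above, so the split between model time and tool time survives aggregation.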

EMF: Histograms Without the Custom Metric Bill

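The technique we use to surface these histograms is Embedded Metric Format (EMF), a JSON convention CloudWatch recognizes when it appears in log lines. Emit a structured log record with a _aws.CloudWatchMetrics block and CloudWatch extracts it as a metric, with percentile statistics, for the price of log ingestion rather than a PutMetricData call per data point. Each unique combination of metric name and dimension values is still billed as a custom metric, which is one more reason to keep the dimension list small.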

What gets written to CloudWatch logs (truncated):

```json
{
  "_aws": {
    "Timestamp": 1715600000000,
    "CloudWatchMetrics": [{
      "Namespace": "Convilyn/AgentTurn",
      "Dimensions": [["model", "tool_name"]],
      "Metrics": [
        {"Name": "tool_latency_ms", "Unit": "Milliseconds"}
      ]
    }]
  },
  "model": "claude-opus-4-7",
  "tool_name": "extract_resume_profile",
  "tool_latency_ms": 412,
  "trace_id": "0af7651916cd43dd8448eb211c80319c"
}
```

The dimension list, [["model", "tool_name"]], declares the cuts CloudWatch should aggregate by. We can ask "p99 of tool_latency_ms where model='claude-opus-4-7' and tool_name='extract_resume_profile'" and get a real histogram, not a sampled approximation. The cost is one log record per emission instead of one PutMetricData API call per data point. For a system emitting tens of thousands of latency samples per hour, the price difference is two orders of magnitude.

The trick that made EMF integrate cleanly with our charter is that emitting an EMF record is just calling emit_lag_event with the right shape. The LagEvent sibling we introduced in Phase 3 already had operation, duration_ms, and bucket fields. The flatten step just adds the _aws block and writes one log line. No new transport. No second sink. The same trace_id propagation we built for events propagates to EMF records for free.

One caveat worth naming. EMF dimensions are themselves cardinality-sensitive — adding a high-cardinality dimension like user_id to a histogram explodes the metric stream. We only emit EMF records with dimensions from ALLOWED_SPAN_ATTRIBUTES, the same allowlist that gates spans. The discipline in the charter pays off twice: cardinality is bounded for spans and for the EMF metrics derived from the same data.
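A rough sketch of the flatten step with the allowlist check might look like the following. It is an illustration under stated assumptions, not the project's code: `to_emf_record` is a hypothetical helper, and the contents of `ALLOWED_SPAN_ATTRIBUTES` here are made up for the example. Only the `_aws` block layout follows the EMF record shown earlier.

```python
import json
import time
from typing import Dict, Optional

# Hypothetical contents; the real allowlist lives in the project's charter.
ALLOWED_SPAN_ATTRIBUTES = frozenset({"model", "tool_name", "turn_index"})


def to_emf_record(
    namespace: str,
    metric_name: str,
    value_ms: float,
    dimensions: Dict[str, str],
    trace_id: str,
    timestamp_ms: Optional[int] = None,
) -> dict:
    """Build one EMF log record, refusing dimensions outside the allowlist."""
    rejected = sorted(set(dimensions) - ALLOWED_SPAN_ATTRIBUTES)
    if rejected:
        raise ValueError(f"dimensions not in allowlist: {rejected}")
    if timestamp_ms is None:
        timestamp_ms = int(time.time() * 1000)
    record = {
        "_aws": {
            "Timestamp": timestamp_ms,
            "CloudWatchMetrics": [{
                "Namespace": namespace,
                # One dimension set: aggregate by all supplied keys together.
                "Dimensions": [sorted(dimensions)],
                "Metrics": [{"Name": metric_name, "Unit": "Milliseconds"}],
            }],
        },
        metric_name: value_ms,
        "trace_id": trace_id,  # a plain property, not a dimension
    }
    record.update(dimensions)
    return record


if __name__ == "__main__":
    record = to_emf_record(
        "Convilyn/AgentTurn",
        "tool_latency_ms",
        412,
        {"model": "example-model", "tool_name": "extract_resume_profile"},
        trace_id="0af7651916cd43dd8448eb211c80319c",
    )
    print(json.dumps(record))  # one log line per emission
```

Note that `trace_id` rides along as an ordinary log field. It is searchable in the logs but never declared as a dimension, so it cannot multiply the number of metric streams.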

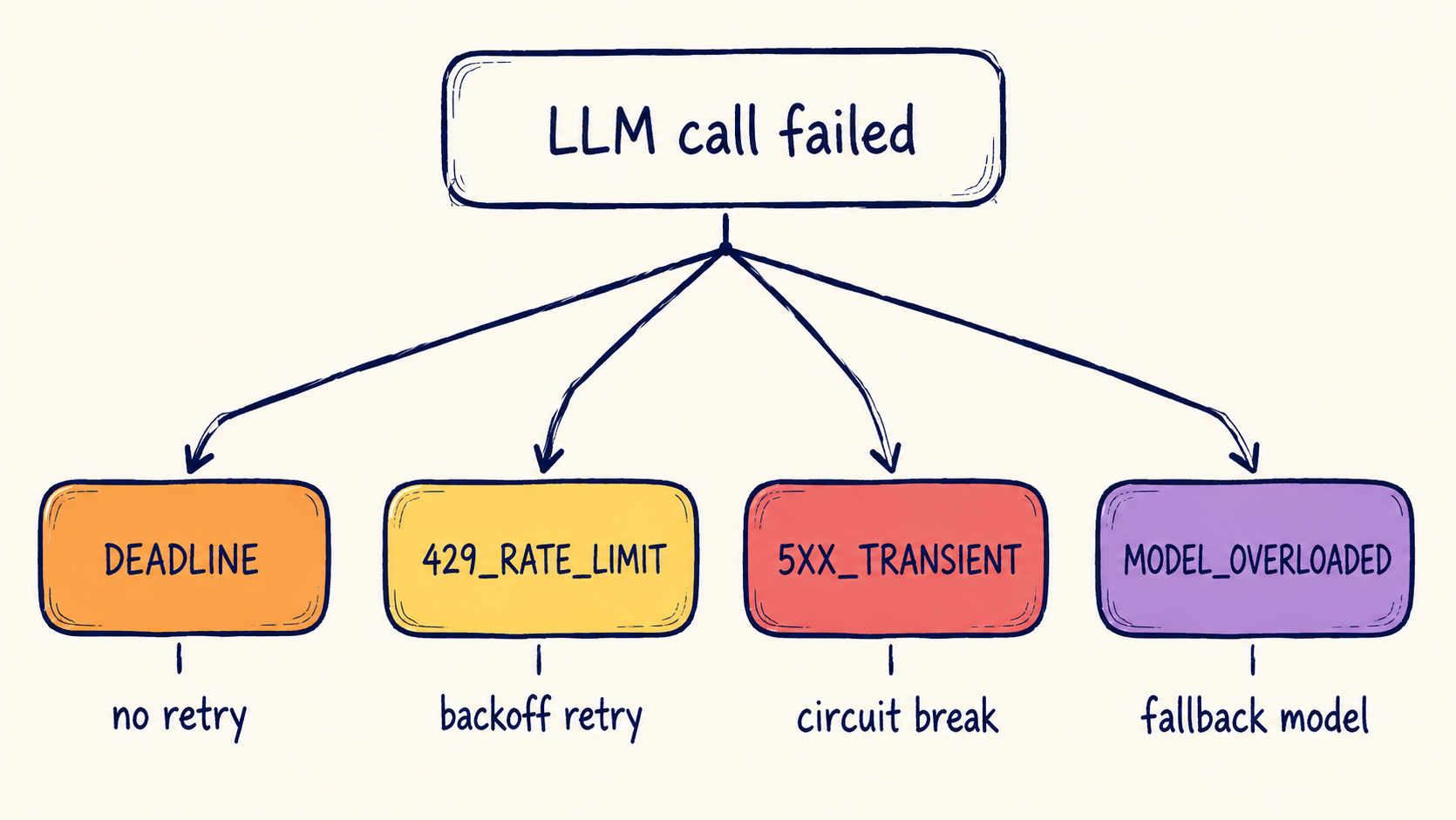

Retry Classification: Four Reasons, Four Actions

The retry layer used to be one boolean. Either we retried or we didn't, and "retry rate" was a single percentage on a dashboard. The problem with that aggregate is that different reasons for retrying require different actions, and lumping them produces both false alarms (any spike in 429s looks identical to any spike in 5xxs) and false silences (a slowly-rising rate of model_overloaded gets averaged out by a healthy steady stream of 5xx-class transients).

The four categories we settled on are stolen, in spirit, from Anthropic's errors and rate limits documentation, with one addition for our own deadline budget:

| Category | Cause | Action | Backoff |
|---|---|---|---|
| DEADLINE | Workflow exceeded its time budget | No retry; fail terminally | n/a |
| 429_RATE_LIMIT | Token-bucket exhausted | Retry after Retry-After header | Server-directed |
| 5XX_TRANSIENT | Provider transient error | Retry with exponential backoff | 250ms × 2^n, jittered |
| MODEL_OVERLOADED | Anthropic 529 / OpenAI capacity_exceeded | Switch model; do not retry same model | Immediate fallback |

The crucial split is the last row. A 529 from a frontier model isn't a transient — it's a load-shedding signal. Retrying the same model with backoff makes the problem worse. The right action is to fall back to a different model (typically a smaller variant or a different provider) and emit a ModelFallbackEvent so we can see the fallback rate on a dashboard. OpenAI's production best practices recommend the same pattern under a different name; it's the kind of operational lore that's obvious in hindsight and expensive to learn the hard way.

```python
# Retry classification (simplified)
import httpx

# RetryCategory, RateLimitError, and ModelOverloadedError are project-level
# names; their import paths are not shown in this post.


def classify_retry(exc: Exception, deadline_remaining_ms: int) -> RetryCategory:
    # An exhausted budget overrides every other signal.
    if deadline_remaining_ms <= 0:
        return RetryCategory.DEADLINE
    if isinstance(exc, RateLimitError):
        return RetryCategory.RATE_LIMIT_429
    if isinstance(exc, ModelOverloadedError):
        # Anthropic 529, OpenAI capacity_exceeded
        return RetryCategory.MODEL_OVERLOADED
    if isinstance(exc, (httpx.HTTPStatusError, ConnectionError)):
        # httpx keeps the status code on the attached response; a bare
        # ConnectionError has no response and falls through as status 0.
        response = getattr(exc, "response", None)
        if 500 <= getattr(response, "status_code", 0) < 600:
            return RetryCategory.TRANSIENT_5XX
    raise exc  # not a retry-eligible error
```

Each classification emits a structured RetryEvent sibling: same trace_id propagation, same allowlist discipline, same sink. A dashboard panel shows retry rate by category. An alert fires only on MODEL_OVERLOADED exceeding a threshold, because that's the category that actually requires human action (do we expand model fallback options?). The other three categories are auto-mitigated and only need attention if the rates change shape.
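The action column of the table can be sketched as a small dispatch function. This is an illustration rather than the production retry layer: `next_action` and `backoff_delay_ms` are hypothetical names, the enum is a stand-in that mirrors the four categories, and the jitter strategy (uniform between zero and the exponential ceiling) is an assumption, since the post only says "jittered". Falling back to exponential backoff when a 429 carries no Retry-After header is also an assumption.

```python
import random
from enum import Enum
from typing import Optional, Tuple


class RetryCategory(Enum):
    """Stand-in for the project's enum; values mirror the table labels."""
    DEADLINE = "DEADLINE"
    RATE_LIMIT_429 = "429_RATE_LIMIT"
    TRANSIENT_5XX = "5XX_TRANSIENT"
    MODEL_OVERLOADED = "MODEL_OVERLOADED"


BASE_DELAY_MS = 250


def backoff_delay_ms(attempt: int, rng: random.Random) -> float:
    """Jittered exponential backoff: uniform in [0, 250ms * 2**attempt]."""
    return rng.uniform(0, BASE_DELAY_MS * (2 ** attempt))


def next_action(
    category: RetryCategory,
    attempt: int,
    retry_after_s: Optional[float] = None,
    rng: Optional[random.Random] = None,
) -> Tuple[str, float]:
    """Return (action, delay_ms) for a classified failure."""
    rng = rng or random.Random()
    if category is RetryCategory.DEADLINE:
        return ("fail", 0.0)
    if category is RetryCategory.RATE_LIMIT_429:
        if retry_after_s is not None:
            return ("retry", retry_after_s * 1000.0)  # server-directed wait
        return ("retry", backoff_delay_ms(attempt, rng))  # assumed fallback
    if category is RetryCategory.TRANSIENT_5XX:
        return ("retry", backoff_delay_ms(attempt, rng))
    if category is RetryCategory.MODEL_OVERLOADED:
        return ("fallback_model", 0.0)  # switch model, no wait
    raise ValueError(f"unknown category: {category!r}")


if __name__ == "__main__":
    print(next_action(RetryCategory.RATE_LIMIT_429, attempt=0, retry_after_s=2.0))
    print(next_action(RetryCategory.MODEL_OVERLOADED, attempt=0))
```

Keeping the action a pure function of the category makes the "attach a verb to every category" rule checkable in a unit test: a new category without a branch fails loudly instead of silently inheriting backoff.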

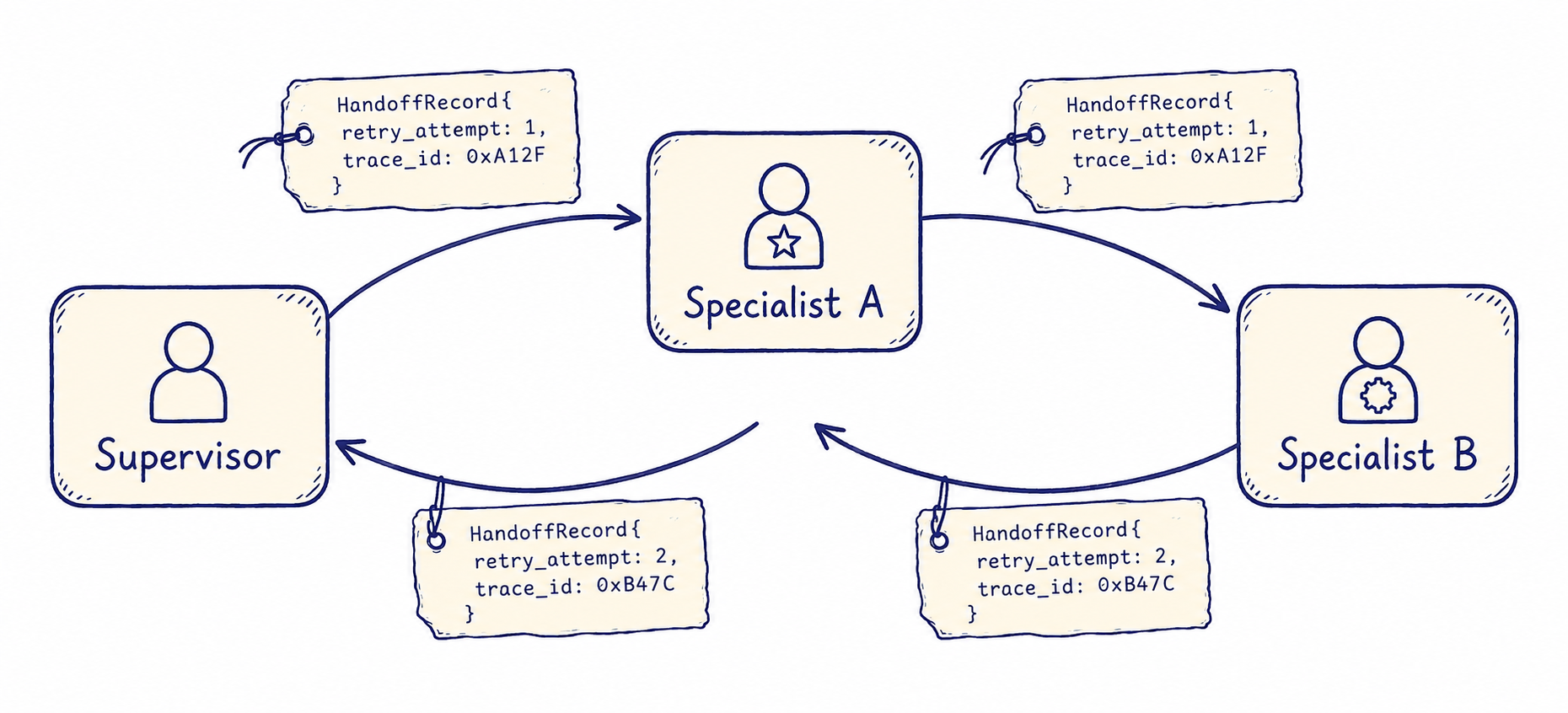

Multi-Agent Handoff: Whose Retry Budget Is It?

The trickiest piece was multi-agent. When a supervisor agent hands off to a specialist (say, a planner agent delegates a research task to a search-specialist), the specialist might retry independently. Whose retry budget owns those retries?

Pre-charter, the answer was "neither knows about the other." The supervisor saw a successful response from the specialist; the specialist's retries were invisible in the supervisor's trace. Debugging "why is this workflow slow?" required cross-correlating two traces by hand — exactly the kind of work the unified trace_id strategy was supposed to eliminate.

The fix was a HandoffRecord attached to every agent-to-agent transition:

```python
from pydantic import BaseModel


class HandoffRecord(BaseModel):
    from_agent: str
    to_agent: str
    retry_attempt: int  # 0 on first call; bumps on parent-side retries
    parent_trace_id: str  # so child traces can join back
    parent_span_id: str
    handoff_reason: str  # "delegate" | "fallback" | "retry"
    deadline_ms: int  # remaining workflow budget
```

The specialist receives the HandoffRecord as part of its input envelope. It tags every span it emits with handoff.from_agent, handoff.retry_attempt, and handoff.deadline_ms, so any query that asks "show me all spans for trace X" gets a coherent end-to-end picture across both agents. The specialist's retries are visible. The supervisor's retries against the specialist are visible. The deadline budget is shared and decremented through the handoff.
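To illustrate how the shared budget and the retry counter could move through a handoff, here is a small sketch. It uses a frozen dataclass instead of the pydantic model so it runs without dependencies, and the helpers `retry_handoff` and `span_attributes` are hypothetical, not functions from the codebase.

```python
from dataclasses import dataclass, replace
from typing import Dict, Union


@dataclass(frozen=True)
class HandoffRecord:
    """Dataclass stand-in with the same fields as the model above."""
    from_agent: str
    to_agent: str
    retry_attempt: int
    parent_trace_id: str
    parent_span_id: str
    handoff_reason: str
    deadline_ms: int


def retry_handoff(record: HandoffRecord, elapsed_ms: int) -> HandoffRecord:
    """Parent-side retry: bump the attempt and pass on what is left of the budget."""
    return replace(
        record,
        retry_attempt=record.retry_attempt + 1,
        handoff_reason="retry",
        deadline_ms=max(0, record.deadline_ms - elapsed_ms),
    )


def span_attributes(record: HandoffRecord) -> Dict[str, Union[str, int]]:
    """Attributes the specialist would stamp on each span it emits."""
    return {
        "handoff.from_agent": record.from_agent,
        "handoff.retry_attempt": record.retry_attempt,
        "handoff.deadline_ms": record.deadline_ms,
    }


if __name__ == "__main__":
    first = HandoffRecord(
        from_agent="planner",
        to_agent="search-specialist",
        retry_attempt=0,
        parent_trace_id="0af7651916cd43dd8448eb211c80319c",
        parent_span_id="example-span-id",
        handoff_reason="delegate",
        deadline_ms=30_000,
    )
    second = retry_handoff(first, elapsed_ms=12_000)
    print(span_attributes(second))
```

The record is immutable, so each retry produces a new value and the original handoff stays available for the trace. A specialist that receives `deadline_ms` of 0 can classify the failure as DEADLINE without calling the model at all.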

This pattern lines up with how Anthropic and OpenAI have been talking about multi-agent observability in their public writing — Anthropic's research on multi-agent systems emphasizes "trajectory transparency" across agent boundaries, and OpenAI's Assistants API has been moving toward making sub-agent runs queryable from the parent run. The names differ; the structure converges on the same idea: every cross-agent boundary carries enough context to reassemble the full trajectory after the fact.

Sentry v8 to v10: A Migration That Surfaced Real Bugs

The Sentry migration sounds, on paper, like the most boring item in this article. It's a dependency bump. The 9.x line had bridged most of the v8 patterns; v10 finished the job with a factory-style API. We had to rewrite our initialization from a constructor to a factory call. End of story.

Except the migration also forced us to look at how we were configuring the SDK, and that surfaced a real bug. The way we'd been initializing Sentry pulled the DSN from an environment variable and passed it directly into sentry_sdk.init(dsn=...) with no validation. If the env var was unset, we got a quiet warning. If the env var contained a typo (a real DSN pointing to a different project), we got events sent to the wrong place. If the env var was somehow externally controllable — and one of the security review questions on PR #262 asked exactly this — we'd be sending exception traces and request bodies to whatever URL the attacker chose. OWASP's SSRF entry describes the failure mode; Sentry's own docs mention DSN validation but don't enforce it.

The fix was a one-screen guard:

```python
# app/core/sentry_init.py
import logging
import os
from urllib.parse import urlparse

import sentry_sdk

logger = logging.getLogger(__name__)

ALLOWED_SENTRY_HOSTS = frozenset({
    "o000000.ingest.sentry.io",
    "o000000.ingest.us.sentry.io",
})


def init_sentry() -> None:
    dsn = os.environ.get("SENTRY_DSN", "").strip()
    if not dsn:
        logger.info("SENTRY_DSN not set; Sentry disabled")
        return
    parsed = urlparse(dsn)
    if parsed.scheme != "https":
        raise ValueError("SENTRY_DSN must use https scheme")
    # Fail closed: refuse to start rather than ship events to an unknown host.
    if parsed.hostname not in ALLOWED_SENTRY_HOSTS:
        raise ValueError(
            f"SENTRY_DSN host {parsed.hostname!r} not in ALLOWED_SENTRY_HOSTS"
        )
    sentry_sdk.init(dsn=dsn)  # remaining init options elided
```

The allowlist is two hosts. Adding a new one is a one-line PR. The cost is negligible; the bug it closes is the kind of thing that would have looked terrible in a postmortem if it had ever fired. The general lesson, and the one we filed an internal bug-prevention note about, is that any external URL the application points at deserves an allowlist guard, not just the URLs you fetch from. Outbound telemetry is a request like any other.

The factory API itself was the easier part. Sentry v10's sentry_sdk.init is constructed once at process boot and idempotent on subsequent calls; the constructor pattern in v8 had ambiguous behavior on re-init. The factory pattern means our test fixtures can replace the Sentry client cleanly without monkey-patching, which removed about thirty lines of test scaffolding we'd been carrying since 2024.

An Open Question: Adaptive Sampling

One thing we haven't fully solved is sampling. Right now we sample spans at a fixed rate (10% in production), which is the simplest configuration that keeps storage costs bounded. The downside is that rare events (a workflow that hits the deadline category, a model fallback, a slot-validation failure) are subject to the same 10% sample as common events. A bug that fires once in a hundred runs therefore shows up in only about one sampled trace per thousand runs.

The right answer is tail-based sampling: keep all traces that contain anomalous spans (errors, deadlines, fallbacks) at 100%, and sample the rest at a much lower rate. The OpenTelemetry Collector's tail sampling processor supports this directly, but introducing it requires a separate processor in the pipeline and a non-trivial bit of operational work. PR #257 has the design proposal; we expect to ship it in Beta v0.3 or v0.4.

Until then, we have an in-process workaround: spans tagged with any of retry_category, fallback, error, or deadline are forced to 100% sampling regardless of the global rate. It's not as principled as tail-based sampling — the decision is made at span-start, not span-end — but it captures the high-signal cases at acceptable cost.
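The workaround can be pictured as a sampling decision like the one below. This is a sketch under assumptions, not the actual sampler: `should_sample` is a hypothetical function, the attributes are assumed to be known when the span starts, and hashing the trace ID is just one way to make the 10% decision deterministic per trace.

```python
import hashlib
from typing import Any, Mapping

# Tag names taken from the paragraph above.
FORCE_SAMPLE_TAGS = frozenset({"retry_category", "fallback", "error", "deadline"})
GLOBAL_SAMPLE_RATE = 0.10


def should_sample(
    trace_id: str,
    attributes: Mapping[str, Any],
    rate: float = GLOBAL_SAMPLE_RATE,
) -> bool:
    """Keep every span carrying a high-signal tag; sample the rest at `rate`."""
    if not FORCE_SAMPLE_TAGS.isdisjoint(attributes):
        return True
    # Map the trace ID to a stable value in [0, 1) so all spans of one
    # trace get the same decision.
    digest = hashlib.sha256(trace_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") / 2 ** 64
    return bucket < rate


if __name__ == "__main__":
    trace = "0af7651916cd43dd8448eb211c80319c"
    print(should_sample(trace, {"model": "example-model"}))
    print(should_sample(trace, {"retry_category": "MODEL_OVERLOADED"}))
```

The limitation described above shows up directly in the signature: the function only sees attributes that exist when it is called, so a span that turns into an error halfway through is still subject to the global rate.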

Four Lessons from Doing This

1. Aggregate latency hides the signal you actually need. Agent-level p99 doesn't tell you whether the model got slower or whether a tool got slower. Instrument turn-level and tool-level latency from day one — the cost of doing it later is migrating dashboards and re-training the on-call rotation, which is a tax you'll pay forever.

2. EMF is the cheap way to do histograms on AWS. Custom metrics are expensive and have surprising cost cliffs. EMF gives you the same query power for at least an order of magnitude less. The CloudWatch Metrics Insights query language is pleasant once you've used it.

3. Retry classification is operational, not statistical. The reason to classify retries is that different categories require different actions. A single "retry rate" number does not allow you to decide whether to expand model fallbacks, raise rate limits, or fix a transient downstream. Categorize first, alert per-category, only then aggregate.

4. Treat outbound telemetry URLs like inbound user input. Every URL the application uses is a potential exfiltration vector if it's externally controllable. The Sentry DSN was the one that caught us; the same allowlist pattern now guards every outbound integration we have. OWASP Top 10 2021 already calls SSRF out as A10; the lesson is to apply the framing to your own outbound URLs, not just the ones you fetch on user request.

External Pointers Worth Reading

AWS Embedded Metric Format Specification — the canonical reference. The spec is short. The aws-embedded-metrics library exists if you'd rather not hand-write the JSON.

Anthropic API Errors and Rate Limits — the canonical taxonomy of 4xx and 5xx responses, including the 529 Overloaded distinction. Read this before designing your retry classifier.

OpenAI Production Best Practices: Improving Latencies — the model-fallback recommendation comes from here. Worth reading alongside the Anthropic page; the two providers converge on the same operational pattern under different vocabulary.

Google SRE Book: Monitoring Distributed Systems — chapter 6 is the canonical statement of why aggregates hide signal and why you want histograms with multiple cuts. The framing is general; it applies to agents directly.

Sentry Python SDK Migration Guide — covers the v8-to-v10 changes in detail. The factory API is the part to understand; everything else is mechanical.

Aggregate dashboards tell you the system is in trouble. Per-category instrumentation tells you what to do about it. The discipline is to refuse the comfort of a single rate and force yourself to attach a verb to every category.

What's Next

Beta v0.2.0 closes out the observability arc. The next two posts in the series move to the evaluation framework — what we built once we could see the system clearly. Beta v0.3.0 covers the baseline-and-gate axis system that turned manual evaluation into automated regression detection. Beta v0.4.0 covers TrajectoryJudge, the multi-step evaluator, and the nightly job that auto-files GitHub issues when a trajectory drifts.

The thread that connects them is the seam from Beta v0.1.0. Every evaluator emits an EvaluationEvent sibling. Every gate verdict propagates through the same trace ID. Every PR comment uses the same redaction policy. The plumbing is invisible because the charter made it invisible. That's the test of whether observability is good — you stop noticing it.