Eyeballing LLM output is the most expensive engineering practice you didn't know you had. Every prompt change, every model swap, every retrieval tweak invites the same question: did this make things better, worse, or about the same? The cheap-feeling answer is to spot-check three or four outputs by hand and trust your instincts. The actual cost is that you ship regressions you didn't notice, you avoid changes you can't quickly verify, and the team's velocity gets quietly throttled by the friction of "let me eyeball that real quick."

This post is about how we got off the eyeball treadmill. The mechanism is a baseline-and-gate axis system that runs in CI, posts pass/fail verdicts on every PR, and lets us promote the current snapshot to baseline with a single CLI flag when we're confident a change is intentional. The structure was influenced by three external bodies of work: OpenAI Evals (the open-source library and cookbook entries), Anthropic's writing on evaluating Claude, and Stanford CRFM's HELM framework — which we read for its taxonomy of metric axes more than for its specific implementations.

By the end of this post you'll know what we built, what we'd do differently if we started over, and where the design still has open edges.

The Manual Eval Treadmill

The pre-charter state of evaluation looked roughly like this. We had a directory of tests/eval/ harnesses that you could run locally with pytest -m eval. Each test executed a workflow against a fixed input and printed the model's output. Whether the output was "good" was determined by an engineer reading it.

This worked when the team was three people on one workflow. It broke when we had eight workflows and twelve people. The breaking pattern was always the same: someone tweaked a prompt to fix a specific edge case, ran the eval, eyeballed three outputs, decided they looked fine, shipped the change, and a week later we discovered that the tweak had quietly broken a different edge case nobody happened to look at. The fix-then-regress-elsewhere cycle is the canonical anti-pattern that Anthropic's own evaluation writing argues against — they call it "playing whack-a-mole on benchmarks" — but knowing the anti-pattern by name doesn't help if you don't have the infrastructure to escape it.

Three things were missing. We had no baseline — no canonical "what good looks like" snapshot to compare against. We had no gate — no automated decision about whether the current state is acceptable. And we had no signal in CI — the eval ran on developer laptops, when remembered, with results that didn't appear on the PR. The fix was to add all three and make them mandatory.

Two Axes: Baseline and Gate

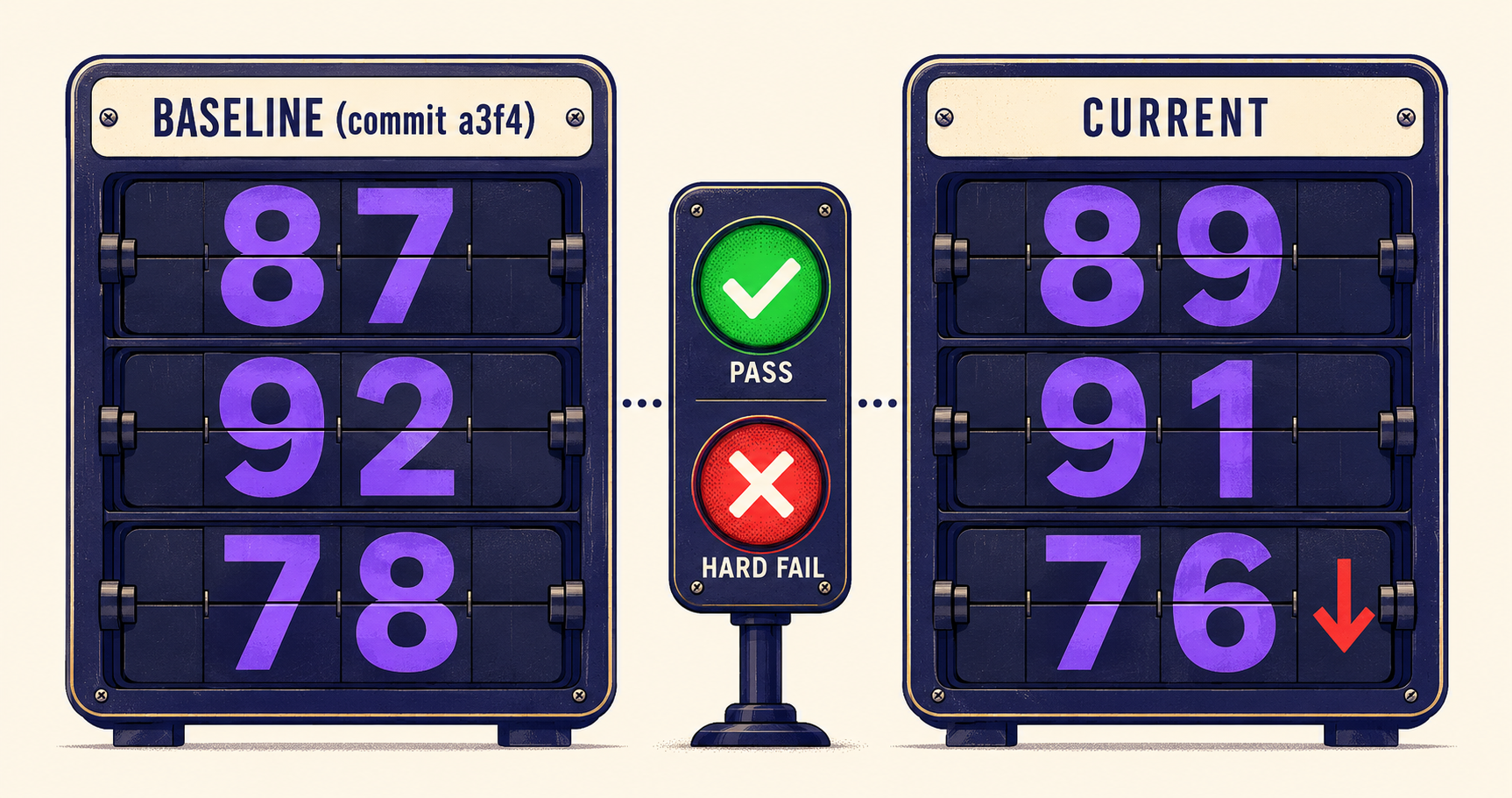

The core abstraction is two orthogonal axes. The first axis is which version of the workflow we are measuring: current or baseline. The second axis is which verdict we render: pass, soft-fail, or hard-fail. Plotting the two axes against each other gives you the full state space of a CI eval run.

| Verdict | Meaning | CI Effect |
|---|---|---|
| PASS | Current ≥ baseline on every gated rubric | Green check; PR can merge. |
| SOFT_FAIL | One non-load-bearing rubric regressed | Yellow warning; PR can merge with reviewer ack. |
| HARD_FAIL | A load-bearing rubric regressed | Red block; PR cannot merge until current is updated or baseline is intentionally promoted. |

The split between soft-fail and hard-fail is the load-bearing decision. Some rubrics are critical — groundedness on a workflow that handles legal documents, factuality on a workflow that produces resume claims — and any regression on these is a hard stop. Other rubrics are stylistic — formatting consistency, brevity — and a regression there is worth flagging but not blocking. The rubric configuration declares its severity once; the gate decision flows mechanically from the comparison.

```yaml
# tests/eval/rubrics/registry.yaml
rubrics:
  groundedness:
    description: "Every claim in the output is supported by the input."
    severity: hard    # regression blocks PR
    threshold: 0.85   # absolute floor; below this, hard-fail regardless of baseline
  factuality:
    description: "No fabricated facts; refuses to invent when uncertain."
    severity: hard
    threshold: 0.90
  brevity:
    description: "Output is concise; no unnecessary repetition."
    severity: soft    # regression is a warning, not a block
  tone_consistency:
    description: "Output matches the requested tone register."
    severity: soft
```

This is the same shape the HELM framework uses, just smaller. HELM evaluates models across seven axes (accuracy, calibration, robustness, fairness, bias, toxicity, efficiency) and treats each axis as independently reportable. We have fewer axes (we're evaluating workflows, not foundation models), but the principle is the same: don't collapse multiple dimensions into one scalar; report and gate them separately.
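The mechanical gate decision described above can be sketched in a few lines. This is a minimal illustration, not our production code: the `Rubric` dataclass and both function names are hypothetical, and it assumes no tolerance band around the baseline and that the absolute floor applies whenever a threshold is declared.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Rubric:
    name: str
    severity: str                      # "hard" or "soft", as declared in the registry
    threshold: Optional[float] = None  # absolute floor, if the rubric declares one


def rubric_verdict(rubric: Rubric, baseline: float, current: float) -> str:
    """Return PASS, SOFT_FAIL, or HARD_FAIL for a single rubric."""
    # Absolute floor: below it, hard-fail regardless of baseline.
    if rubric.threshold is not None and current < rubric.threshold:
        return "HARD_FAIL"
    # No regression against the baseline means the rubric passes.
    if current >= baseline:
        return "PASS"
    # A regression: severity decides whether it blocks or only warns.
    return "HARD_FAIL" if rubric.severity == "hard" else "SOFT_FAIL"


def run_verdict(verdicts: list[str]) -> str:
    """The run as a whole takes the worst verdict across its rubrics."""
    for level in ("HARD_FAIL", "SOFT_FAIL"):
        if level in verdicts:
            return level
    return "PASS"
```

Because severity lives on the rubric, nothing in the comparison takes a per-run override; that is the property the registry is meant to protect.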

Fast Tier and Slow Tier: Triaging Eval Cost

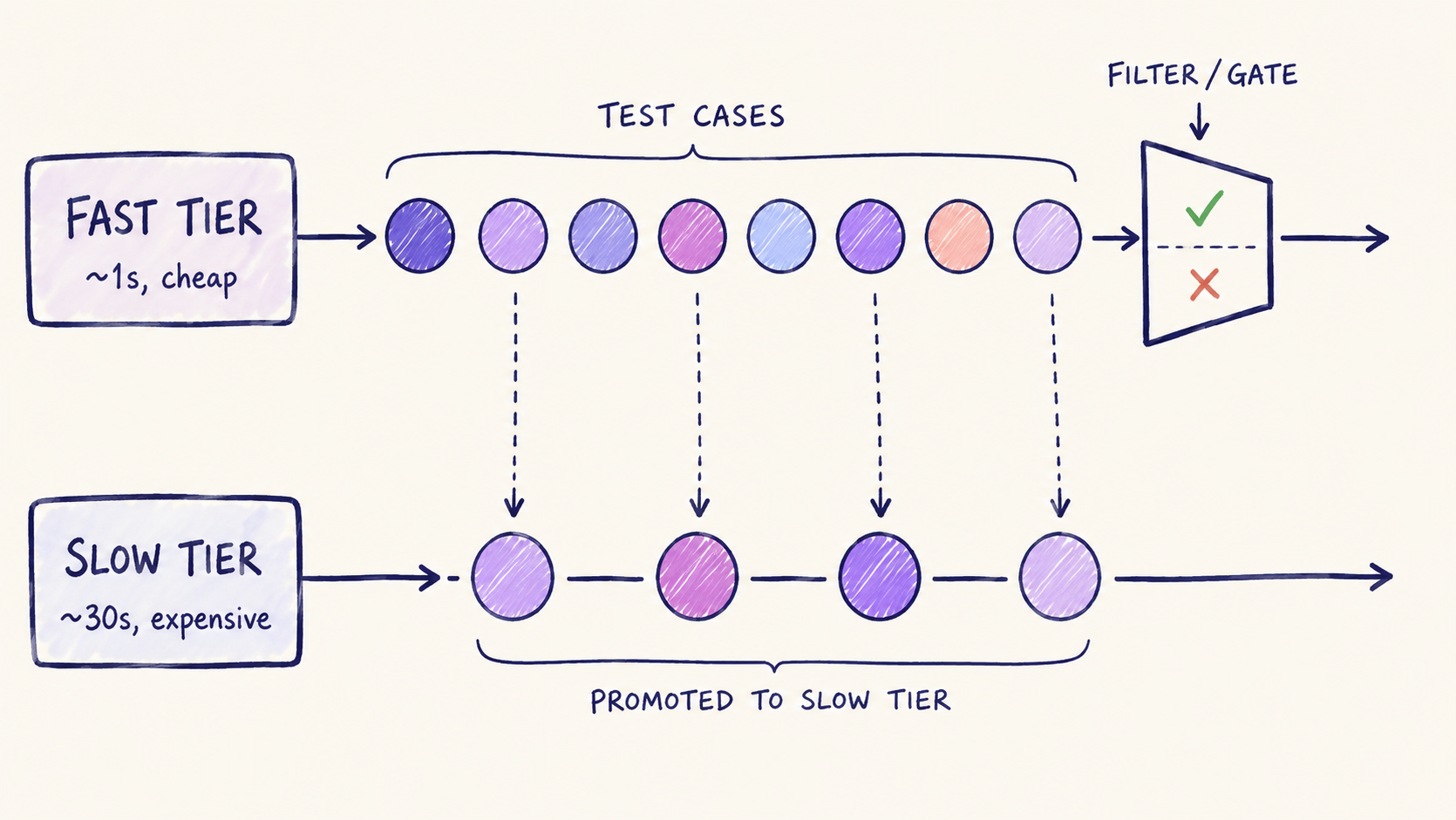

Running every eval rubric on every PR is too expensive. A full TrajectoryJudge pass on a long workflow can take 30 seconds and cost meaningful tokens. Multiplied across all rubrics on all workflows on every PR, the bill becomes a real line item.

The escape is a two-tier structure. The fast tier — cheap, runs on every PR, takes one second per rubric — uses programmatic checks and lightweight LLM-as-judge prompts. The slow tier — expensive, runs nightly or on demand — uses full TrajectoryJudge against the entire trajectory. The fast tier is the gate that blocks merges; the slow tier is the deeper signal that determines whether a workflow's design is fundamentally sound.

```python
# Fast tier rubric: runs on every PR
class GroundednessFastJudge(LLMJudge):
    """Runs against the final output. Single LLM call. ~$0.001 per case."""

    model = "claude-haiku-4-5"
    max_tokens = 200
    temperature = 0.0


# Slow tier rubric: runs nightly or on /eval-deep PR comment
class GroundednessTrajectoryJudge(TrajectoryJudge):
    """Runs against every step of the trajectory. ~$0.05 per case."""

    model = "claude-opus-4-7"
    max_tokens = 2000
```

The fast/slow split lines up with how OpenAI documents their eval product: they distinguish "graders" (cheap, often programmatic) from "model graders" (expensive, LLM-driven). The vocabulary differs; the operational shape converges. Run cheap signals constantly, expensive signals selectively.

One pattern we developed that doesn't appear in the public references: a fast-tier rubric can promote a case to the slow tier when it returns a verdict on the boundary. If the fast judge scores a case at exactly the threshold, the case is queued for slow-tier evaluation overnight. This catches the failure mode where a fast judge is too coarse to tell whether a borderline case is actually pass or fail. The slow judge, with its larger model and trajectory access, makes the final call.
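The boundary-promotion rule can be sketched as follows. The function and class names are hypothetical, and the `margin` parameter is an assumption added for illustration: with the default of `0.0` it reproduces the "exactly at the threshold" rule, up to floating-point tolerance.

```python
from dataclasses import dataclass, field


def needs_slow_tier(score: float, threshold: float, margin: float = 0.0) -> bool:
    """True when a fast-tier score sits on the pass/fail boundary."""
    # The small epsilon absorbs float rounding when margin is 0.0.
    return abs(score - threshold) <= margin + 1e-9


@dataclass
class SlowTierQueue:
    """In-memory stand-in for whatever queue feeds the nightly slow-tier run."""

    case_ids: list[str] = field(default_factory=list)

    def consider(self, case_id: str, score: float, threshold: float,
                 margin: float = 0.0) -> bool:
        """Queue the case if it is borderline; report whether it was queued."""
        if needs_slow_tier(score, threshold, margin):
            self.case_ids.append(case_id)
            return True
        return False
```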

The CLI Workflow: --update-baseline

The baseline lives as a JSON file in tests/eval/baselines/, one per workflow, pinned by commit SHA. The current snapshot is whatever the eval run produces against the head of the PR branch. The gate compares them.

The interesting design decision is what happens when a regression is intentional — when you've deliberately changed the prompt and expect different behavior. The naive answer is "let the developer override the gate," but that's the path back to manual eyeballing. The better answer is to make baseline promotion an explicit, audited action.

```console
# Run eval, see the gate fail
$ poetry run python -m eval.cli run --workflow=resume_to_jobs
[hard_fail] groundedness: 0.91 → 0.87 (baseline 0.91, threshold 0.85)
[pass] factuality: 0.94 → 0.95
[soft_fail] brevity: 0.82 → 0.75
Verdict: HARD_FAIL — see report at ./eval_report_e8a7c9.html

# After investigating and confirming the regression is intentional:
$ poetry run python -m eval.cli run --workflow=resume_to_jobs --update-baseline
Updated baseline for resume_to_jobs (commit b4f9e2)
- groundedness: 0.91 → 0.87
- factuality: 0.94 → 0.95
- brevity: 0.82 → 0.75
Re-run with no flags to confirm pass.
```

The --update-baseline flag rewrites the JSON snapshot to match current. The new baseline is committed alongside the prompt change. The PR review now includes the baseline diff, so reviewers can see exactly which numbers moved and decide whether the move is acceptable. The eval gate's verdict on the next CI run is PASS because current matches the freshly promoted baseline.

This pattern is essentially snapshot testing applied to LLM output, with the addition that the snapshot is a structured score rather than a raw string. The same trade-offs apply: snapshots are easy to update mindlessly (so make the diff legible), the test only catches regressions you've thought to express as a metric (so write the metrics carefully), and there's no protection against everyone agreeing to make the system worse (so use the diffs as a discussion artifact, not just a passing test).
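A minimal sketch of what baseline promotion might look like on disk, to make the "structured score snapshot" concrete. The file layout (a `commit` field plus a `scores` map) and the function names are assumptions for illustration; only the idea of a per-workflow JSON baseline comes from the description above.

```python
import json
from pathlib import Path
from typing import Optional


def load_baseline(path: Path) -> dict:
    """Read a baseline snapshot from its JSON file."""
    return json.loads(path.read_text())


def _fmt(value: Optional[float]) -> str:
    return "n/a" if value is None else f"{value:.2f}"


def promote_baseline(path: Path, current: dict[str, float], commit: str) -> list[str]:
    """Overwrite the baseline with current scores and return legible diff lines."""
    old = load_baseline(path)["scores"] if path.exists() else {}
    diff = [
        f"- {name}: {_fmt(old.get(name))} → {_fmt(score)}"
        for name, score in sorted(current.items())
    ]
    # Sorted keys and fixed indentation keep the committed diff stable and reviewable.
    snapshot = {"commit": commit, "scores": current}
    path.write_text(json.dumps(snapshot, indent=2, sort_keys=True) + "\n")
    return diff
```

Returning the diff lines, rather than only writing the file, is what lets the CLI print the same numbers the reviewer will later see in the PR.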

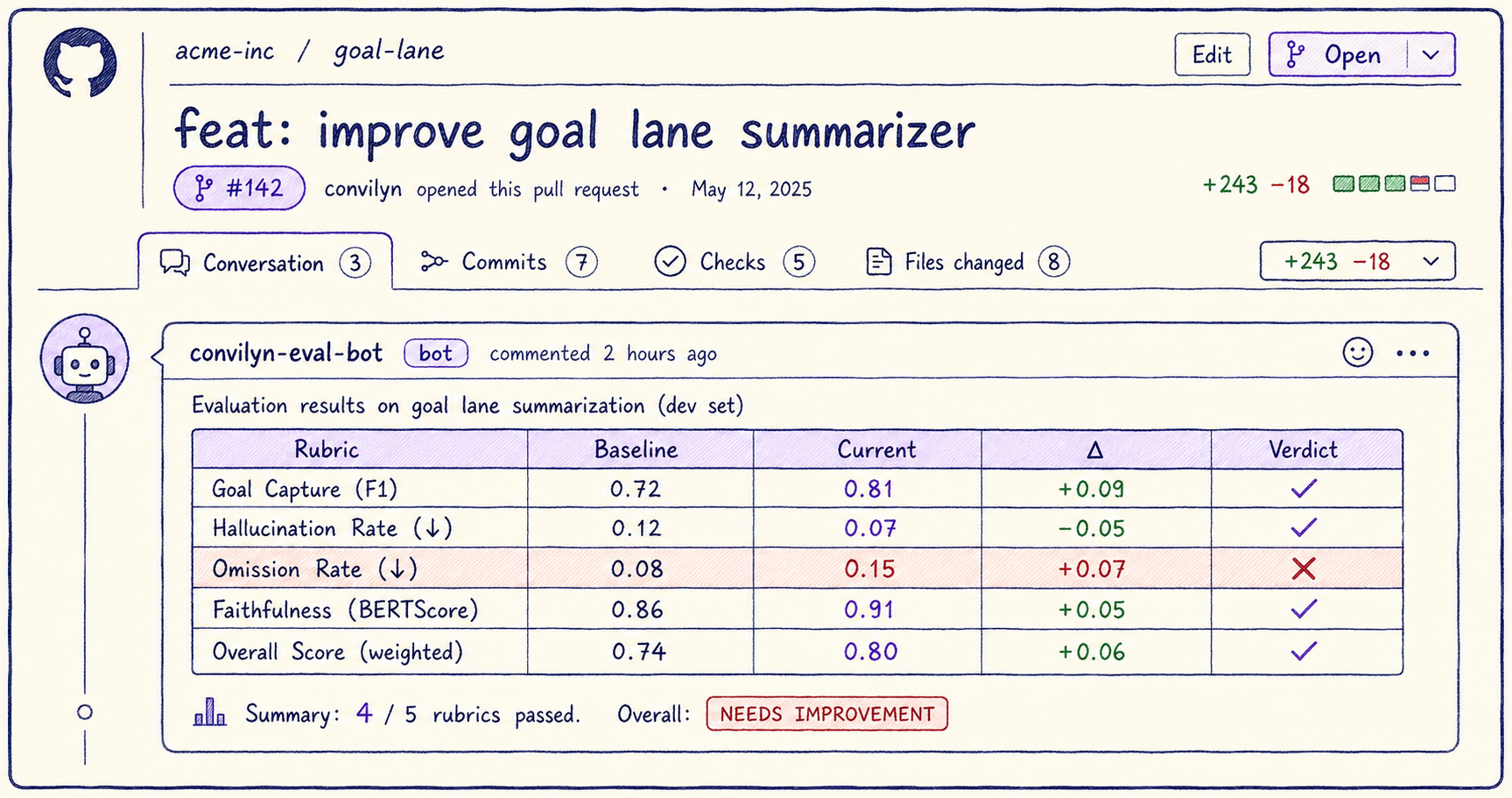

The PR Comment Robot

The eval verdict is useless if it lives in a CI log file nobody opens. The mechanism that closes the loop is a GitHub Action that posts a structured comment on every PR with the eval results.

````markdown
# PR comment format (rendered as markdown by the bot)

## convilyn-eval-bot

**Verdict: HARD_FAIL** on workflow `resume_to_jobs`

| Rubric       | Baseline | Current | Δ     | Verdict      |
|--------------|----------|---------|-------|--------------|
| groundedness | 0.91     | 0.87    | -0.04 | ❌ hard-fail |
| factuality   | 0.94     | 0.95    | +0.01 | ✅ pass      |
| brevity      | 0.82     | 0.75    | -0.07 | ⚠️ soft-fail |

Failing cases: 3 / 50

[Open detailed report →](https://eval-reports.convilyn/run/e8a7c9.html)

To accept the regression intentionally:

```
poetry run python -m eval.cli run --workflow=resume_to_jobs --update-baseline
```
````

The comment is rewritten on every push: the bot looks for an existing comment from itself and edits it in place rather than spamming new comments. Reviewers see the most recent verdict at the top of the PR, with a link to the full report (an HTML file with side-by-side input/output for every failing case). The gate's verdict appears in the GitHub status check; the comment is the human-readable elaboration.
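The two halves of the bot, rendering the table and deciding whether to edit or create a comment, can be sketched as pure functions. The names and the comment dictionaries (with `id` and `author` keys) are hypothetical stand-ins; the actual call to the GitHub API is deliberately left out.

```python
from typing import Optional

Row = tuple[str, float, float, str]  # (rubric, baseline, current, verdict label)


def render_comment(workflow: str, verdict: str, rows: list[Row]) -> str:
    """Render the PR comment body as markdown."""
    lines = [
        f"**Verdict: {verdict}** on workflow `{workflow}`",
        "",
        "| Rubric | Baseline | Current | Δ | Verdict |",
        "|---|---|---|---|---|",
    ]
    for name, baseline, current, label in rows:
        delta = current - baseline
        lines.append(
            f"| {name} | {baseline:.2f} | {current:.2f} | {delta:+.2f} | {label} |"
        )
    return "\n".join(lines)


def find_bot_comment(comments: list[dict], bot_login: str) -> Optional[int]:
    """Return the id of the bot's existing comment, or None if there is none yet."""
    for comment in comments:
        if comment["author"] == bot_login:
            return comment["id"]
    return None
```

If `find_bot_comment` returns an id, the bot edits that comment; otherwise it creates one. Keeping this decision separate from the HTTP call makes the edit-in-place behavior easy to unit test.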

This shape is similar to what LangSmith and Braintrust do as hosted products: both post eval verdicts on PRs, and both display rubric diffs. We chose to build it in-house because the eval was tied so closely to our own rubric registry and trace IDs (both of which stay inside the observability charter from Beta v0.1) that the integration cost of a hosted tool was higher than the build cost. If we were starting fresh today and didn't have the observability seam already in place, the calculus would tip the other way.

LLMJudge and the Groundedness Rubric

The actual scoring of "is this output good" is done by an LLM-as-judge. The class is small — about 80 lines — but the prompt structure inside it is where most of the design lives.

```python
# app/evaluation/llm_judge.py (simplified)
class LLMJudge:
    rubric_name: str
    model: str = "claude-haiku-4-5"
    temperature: float = 0.0
    max_tokens: int = 500

    def render_prompt(self, input_text: str, output_text: str) -> str:
        return JUDGE_PROMPT_TEMPLATE.format(
            rubric=self.rubric_definition,
            anchors=self.scoring_anchors,  # 0.0-1.0 with concrete examples
            input=input_text,
            output=output_text,
        )

    def parse_verdict(self, raw: str) -> JudgeVerdict:
        # Expects strict JSON; failures are themselves a signal we log.
        return JudgeVerdict.model_validate_json(raw)
```

The groundedness rubric is the canonical example. Its prompt instructs the judge model to read the input document, read the output, identify every distinct claim in the output, and check each one against the input. The score is the fraction of claims that have direct support. Anchored examples in the prompt ground the 1.0 / 0.5 / 0.0 boundaries; without anchors, judge scores drift over time and inter-judge agreement drops. Zheng et al. (2023) document related reliability problems in LLM judges, including position and verbosity bias.
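The "fraction of claims with direct support" score can be made concrete with a short sketch. The JSON shape (a `claims` list of `{claim, supported}` objects) and the names below are assumptions for illustration, not the actual `JudgeVerdict` schema; the sketch uses only the standard library so it runs on its own.

```python
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class ClaimCheck:
    claim: str
    supported: bool


def parse_claims(raw: str) -> list[ClaimCheck]:
    """Parse the judge's strict-JSON reply; malformed replies raise."""
    data = json.loads(raw)
    checks = []
    for item in data["claims"]:
        if not isinstance(item["supported"], bool):
            raise ValueError(f"'supported' must be a JSON boolean: {item!r}")
        checks.append(ClaimCheck(claim=str(item["claim"]), supported=item["supported"]))
    return checks


def groundedness_score(checks: list[ClaimCheck]) -> float:
    """Fraction of claims with direct support in the input."""
    if not checks:
        # Assumption: an output that makes no claims has nothing unsupported.
        return 1.0
    return sum(c.supported for c in checks) / len(checks)
```

Computing the score from per-claim booleans, rather than asking the judge for a number directly, also leaves a list of unsupported claims that can go straight into the failure report.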

Two patterns we hold to firmly. The judge prompt is versioned (v1, v2...) and any change to the prompt bumps the version, invalidating all baselines that used the previous version. We don't compare scores across judge prompt versions; the comparison is only meaningful within a single prompt's behavior. And the judge model is held constant within a baseline — switching the judge model is itself a change that requires re-baselining.

The discipline here matches what Anthropic recommends in their "Develop tests" guidance: pick a strong judge model, fix the prompt, fix the temperature, fix the random seed if your model exposes one. Treat the judge as a measurement instrument that needs calibration. If the instrument changes, your readings are not comparable.
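One way to enforce the "same instrument" rule is to store the judge configuration next to each baseline and refuse comparisons when it differs. The sketch below is hypothetical: `JudgeConfig` and `incompatibilities` are illustrative names, and which fields count as part of the instrument is an assumption.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class JudgeConfig:
    model: str            # ideally a pinned snapshot id, not a floating alias
    prompt_version: str   # e.g. "v1", bumped on any judge prompt change
    temperature: float = 0.0


def incompatibilities(baseline: JudgeConfig, current: JudgeConfig) -> list[str]:
    """List every judge setting that differs between baseline and current run."""
    problems = []
    for name in ("model", "prompt_version", "temperature"):
        old, new = getattr(baseline, name), getattr(current, name)
        if old != new:
            problems.append(f"{name}: {old!r} -> {new!r}")
    return problems


def require_comparable(baseline: JudgeConfig, current: JudgeConfig) -> None:
    """Raise instead of comparing scores produced by different instruments."""
    problems = incompatibilities(baseline, current)
    if problems:
        raise ValueError("re-baseline required; judge changed: " + "; ".join(problems))
```

Failing loudly here turns a silent score shift into an explicit re-baselining step.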

Lessons from a Year of Auto-Gated Evaluation

1. Severity is a property of the rubric, not the run. The decision about whether a regression in groundedness is a hard fail or a soft fail belongs to the rubric definition, not to the engineer running CI. If everyone gets to argue severity per-PR, you're back to manual eyeballing with extra steps. Encode severity in YAML once.

2. --update-baseline needs to be a deliberate two-step. Our first version made baseline updates implicit — if the eval passed locally, the baseline updated. This was a disaster. Engineers stopped reading the diff. The two-step pattern (run, see fail, update with explicit flag) forces a moment of human judgment. The friction is the feature.

3. Soft-fail is a habit-protection mechanism. Without a soft-fail tier, every regression is either OK or blocking. In practice, lots of regressions are minor stylistic drift that nobody wants to block on but everybody wants to see. Soft-fail solves the "I want a warning, not a wall" problem without inviting endless arguments about severity.

4. Pin the judge. The judge model and prompt are themselves a configuration that can drift. We had a regression once that turned out to be the judge model — we'd updated the haiku model behind the scenes and the new version was 3% stricter on groundedness. Pin the judge model to a specific snapshot version (claude-haiku-4-5-20251001, not claude-haiku-4-5) and treat changes as breaking.

5. The PR comment is the most important UX surface. Reviewers will not click through to a CI dashboard. They will read the comment on the PR. Spend the time to make the comment dense, scannable, and copy-pasteable. The CLI commands in the comment that show how to update the baseline are particularly load-bearing — they convert "what do I do now" into a one-line answer.

External Pointers Worth Reading

OpenAI Evals. The canonical open-source eval library. The cookbook entries on writing custom evals are short and clear. Worth reading for the registry pattern even if you don't use the library directly.

Anthropic Engineering: Evaluating Claude. The framing of "model evaluation as engineering practice" is the orientation we adopted. Read this before designing your rubric registry.

Stanford CRFM's HELM. The right reference if you want to think rigorously about which axes you're evaluating on. We don't run HELM directly; we use its taxonomy as a discipline check on our rubric set.

Zheng et al., "Judging LLM-as-a-Judge with MT-Bench and Chatbot Arena" (2023). The foundational paper on judge model bias and calibration. Required reading before deploying any LLM-as-judge in production.

Braintrust and LangSmith. Both vendors solve adjacent problems, and their public docs are worth skimming for vocabulary. We didn't adopt either, but their public discussions of product surface area informed the shape of our in-house tooling.

If you don't gate your eval, your eval is performance art. The discipline isn't in writing more rubrics; it's in committing to a verdict that blocks merges and updating the baseline only when you mean it.

What's Next

Beta v0.3.0 covers the gate-and-baseline mechanism. Beta v0.4.0 — the next post — covers TrajectoryJudge, the slow-tier evaluator that scores not just final outputs but the entire path the agent took to get there, and the nightly auto-issue loop that turns trajectory drift into actionable GitHub issues. Output-only judges catch wrong answers; trajectory judges catch agents that take a wandering route to the right answer. Both matter, and the second is the one most teams underinvest in.