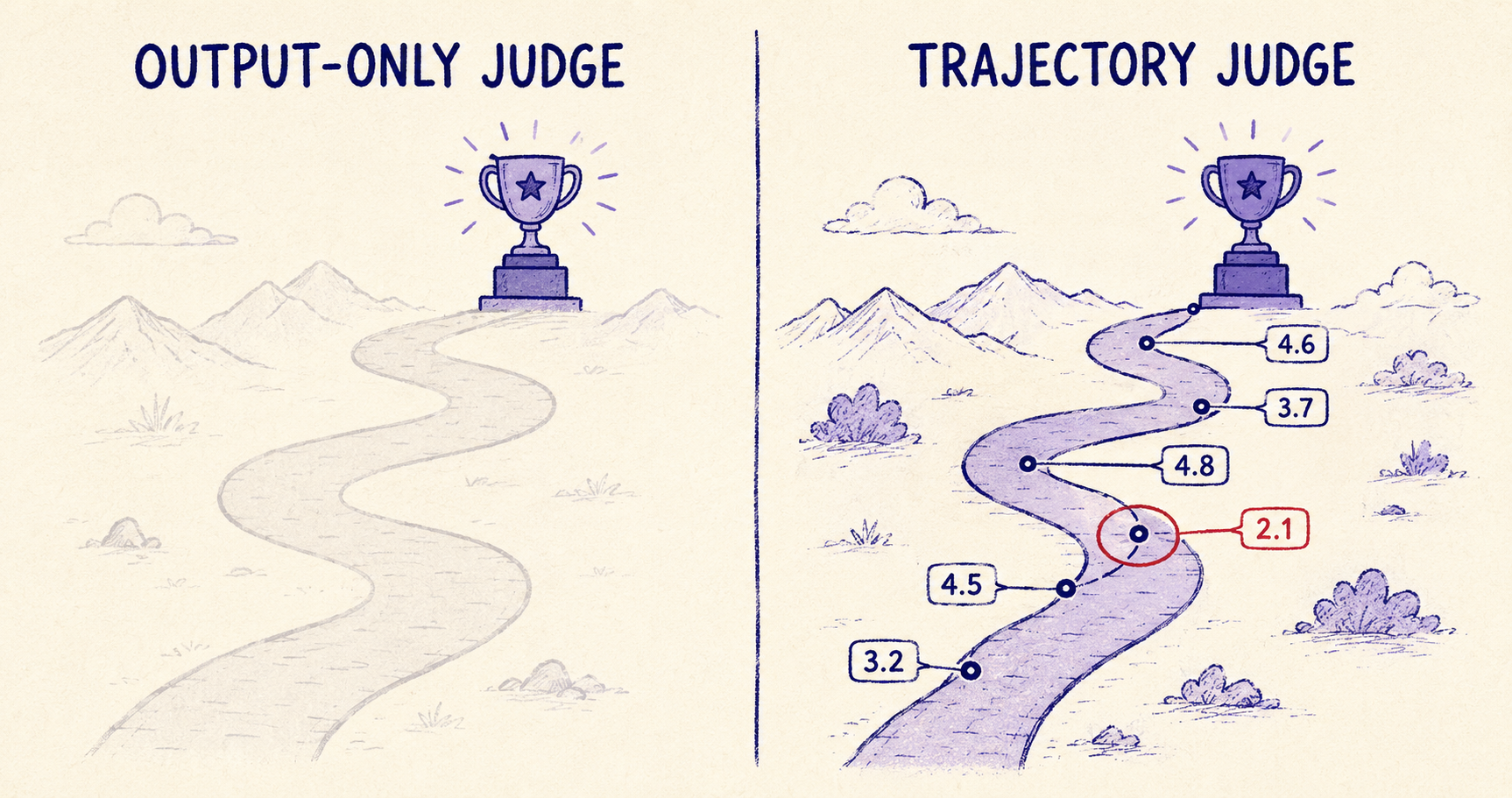

Output-only evaluation has a comfortable simplicity to it. The agent runs, the agent produces an output, you score the output. If the score is high, the agent is doing well. The framing maps cleanly onto how we evaluate models in general — give a question, score the answer, repeat. It's the natural starting point for any eval system, and it's where Beta v0.3.0 lives.

The problem is that agents are not models. A model produces an answer in one forward pass. An agent produces an answer through a multi-step trajectory: it reasons, calls tools, observes results, reasons again, possibly retries, possibly hands off to another agent, eventually emits a final response. Two agents can produce identical final outputs through wildly different trajectories. One takes three steps; the other takes thirty, hits two timeouts, retries twice, and burns 40,000 tokens to arrive at the same place. Output-only evaluation cannot tell them apart.

The trajectory matters because it correlates with cost, latency, brittleness, and, most importantly, with how the agent will behave on inputs you haven't yet tested. An agent that takes a wandering path on a known input is likely to take a worse path on an adversarial one. Process matters because process generalizes. This is the orientation that Anthropic's Constitutional AI work takes when it evaluates self-correction trajectories rather than only final outputs, and it's the orientation the process-reward-model literature (notably Lightman et al. 2023) takes when it argues that step-level feedback is the right substrate for reasoning.

This post walks through TrajectoryJudge: how the prompt is structured, how per-step scoring is gated, how the nightly job loop turns drift into GitHub issues, and where the design still doesn't fully solve the problem.

The Trajectory Blind Spot

The clearest example we ran into was a resume-tailoring workflow. Given a resume and a job description, the agent should extract the candidate's relevant experience, identify gaps against the job's requirements, and produce a tailored summary that emphasizes the matches.

Output-only evaluation rated this workflow at 0.91 groundedness. The summaries were factually correct. They didn't fabricate experience. Reviewers reading the outputs by hand said they looked good. The output-only judge was happy.

The trajectory told a different story. The agent was, on average, calling the resume parser tool four times per run — once on the original document, once on each of three different "alternate format" versions it generated trying to coax the parser into giving cleaner output. The parser results were nearly identical each time; the agent was fighting against an ambiguity it had injected. The final output was good. The path to it was wasteful, and on inputs where the parser actually did behave differently, the agent's pattern of repeated parsing produced inconsistent results that propagated to the final summary.

Output-only evaluation could not see this. The summary was fine. The trajectory was the problem.

TrajectoryJudge Prompt Anatomy

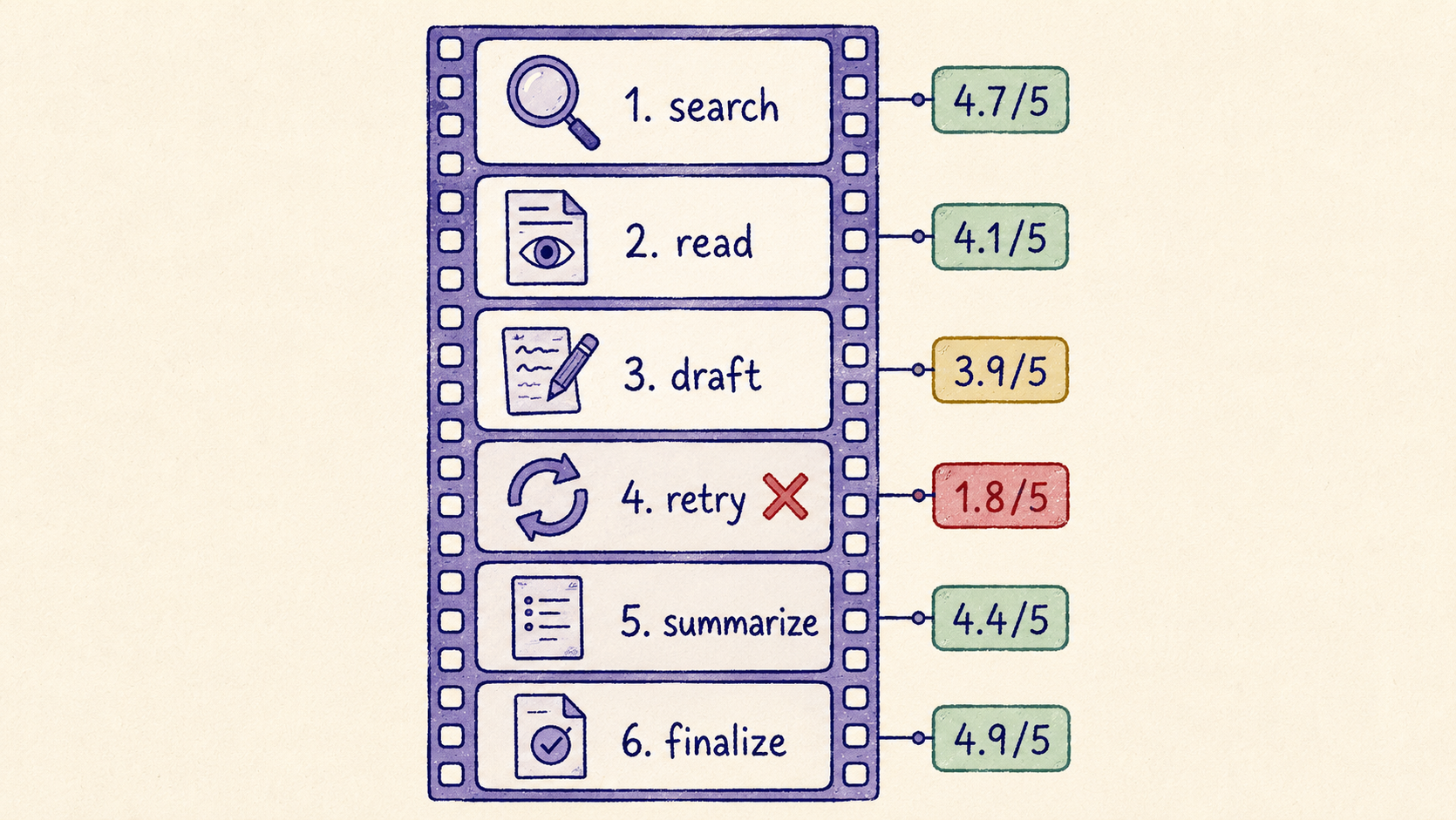

The judge prompt for trajectories is structurally different from the output-only prompt. It has to consume a full trace — input, every reasoning step, every tool call with arguments and results, every retry, every handoff — and emit a per-step verdict. The output is not a single number; it's a list of step-level scores plus an aggregate.

```
# Conceptual structure of the TrajectoryJudge prompt

SYSTEM:
You are evaluating an agent's reasoning trajectory.
For each step, decide whether the step was:
- PROGRESS (advanced toward the goal)
- REDUNDANT (repeated work the agent had already done)
- DETOUR (took an action unrelated to the goal)
- ERROR (called a tool incorrectly or made a wrong inference)

Anchored examples:
PROGRESS: <concrete example>
REDUNDANT: <concrete example>
DETOUR: <concrete example>
ERROR: <concrete example>

USER:
Goal: {workflow_goal}
Input: {workflow_input}
Trajectory:
Step 1: reasoning="..." tool="extract_resume" args=<...> result=<...>
Step 2: reasoning="..." tool="extract_resume" args=<...> result=<...>
Step 3: reasoning="..." tool="match_skills" args=<...> result=<...>
...

ASSISTANT (expected JSON):
[
  {"step": 1, "category": "PROGRESS", "score": 0.95, "explanation": "..."},
  {"step": 2, "category": "REDUNDANT", "score": 0.10, "explanation": "..."},
  {"step": 3, "category": "PROGRESS", "score": 0.92, "explanation": "..."},
  ...
]
```

Two design choices are load-bearing here. First, the per-step categories are fixed (PROGRESS / REDUNDANT / DETOUR / ERROR) rather than continuous scores. The judge model is much better at categorical judgments than at fine-grained numeric calibration; the LLM-as-judge literature documents this consistently. We let the judge assign a numeric score within each category for soft signal, but the category drives the gate verdict.

Second, the judge sees the full trajectory at once, not step-by-step in isolation. Evaluating a step requires knowing what the agent had already done. A call to extract_resume is PROGRESS the first time and REDUNDANT the second time — context determines the verdict. Some research approaches use independent step-by-step evaluation (the original PRM800K dataset, for example, scores each step in isolation), but for our use case the contextual evaluation produces dramatically more accurate verdicts. The cost is longer prompts and more expensive judge calls; we accept that and run the trajectory judge on the slow tier from Beta v0.3.0.
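Because the category drives the gate, it helps to reject off-schema judge output before it reaches any gate logic. The following is a minimal sketch, not our production parser: `StepVerdict` and `parse_judge_output` are hypothetical names, and the assumption that scores fall in the 0 to 1 range is ours for illustration.

```python
import json
from dataclasses import dataclass

# The four categories come from the prompt; the names below are hypothetical.
CATEGORIES = {"PROGRESS", "REDUNDANT", "DETOUR", "ERROR"}


@dataclass(frozen=True)
class StepVerdict:
    step: int
    category: str
    score: float
    explanation: str


def parse_judge_output(raw: str, expected_steps: int) -> list[StepVerdict]:
    """Parse the judge's JSON list and reject anything off-schema."""
    items = json.loads(raw)
    if not isinstance(items, list):
        raise ValueError("judge output must be a JSON list")
    verdicts = []
    for item in items:
        category = item["category"]
        if category not in CATEGORIES:
            raise ValueError(f"unknown category: {category!r}")
        score = float(item["score"])
        if not 0.0 <= score <= 1.0:  # assumed score range
            raise ValueError(f"score out of range: {score}")
        verdicts.append(
            StepVerdict(int(item["step"]), category, score, str(item.get("explanation", "")))
        )
    # Require exactly one verdict per step, numbered 1..N in order.
    if [v.step for v in verdicts] != list(range(1, expected_steps + 1)):
        raise ValueError("verdicts do not cover steps 1..N in order")
    return verdicts
```

Failing loudly on an unknown category or a missing step keeps a malformed judge response from being silently counted as a pass.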

Per-Rubric Gate Thresholds

Once you have per-step scores, you have to decide what to gate on. The temptation is to compute one aggregate ("trajectory quality") and gate on it. We tried this; it didn't work. The aggregate hides step-level failures the same way agent-level latency hides tool-level pathology in Beta v0.2.0.

Instead we have per-rubric gates that key off the categorical breakdown:

| Rubric | Gate Condition | Severity |
|---|---|---|
| no_redundant_calls | ≤ 1 REDUNDANT step per trajectory | soft-fail |
| no_detours | 0 DETOUR steps | hard-fail |
| no_errors | 0 ERROR steps | hard-fail |
| step_efficiency | Trajectory length within 1.3× baseline median | soft-fail |
| recovery | Every ERROR step is followed by a recovery step | hard-fail |

The recovery rubric is the one that surprised us. Pre-charter, we had no signal for "the agent made a mistake but recovered" versus "the agent made a mistake and the mistake propagated." Both look the same in output-only metrics if the final output happens to be acceptable. The trajectory judge can distinguish them: an ERROR followed by a PROGRESS step that explicitly addresses the error is a healthy recovery; an ERROR followed by more PROGRESS steps that ignore the error is a silently-corrupted trajectory. The latter is a serious problem because it predicts how the agent will behave on harder inputs where the lucky save is less likely.
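The gate conditions in the table can be sketched as a single function over the per-step categories. This is an illustration under stated assumptions rather than our actual gate code: `Step`, `evaluate_gates`, and `baseline_median_len` are hypothetical names, `addresses_error` is a hypothetical flag standing in for the judge's signal that a step explicitly addresses the preceding error, and "followed by" is read here as "immediately followed by".

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Step:
    category: str                  # PROGRESS / REDUNDANT / DETOUR / ERROR
    addresses_error: bool = False  # hypothetical: step explicitly fixes the prior ERROR


def evaluate_gates(steps: list[Step], baseline_median_len: float) -> dict[str, str]:
    """Return 'pass', 'soft-fail', or 'hard-fail' for each rubric in the table."""
    counts = Counter(s.category for s in steps)

    # Every ERROR needs an immediately following step that addresses it.
    # A trailing ERROR with no next step counts as unrecovered.
    recovered = all(
        i + 1 < len(steps) and steps[i + 1].addresses_error
        for i, s in enumerate(steps)
        if s.category == "ERROR"
    )

    def verdict(ok: bool, severity: str) -> str:
        return "pass" if ok else severity

    return {
        "no_redundant_calls": verdict(counts["REDUNDANT"] <= 1, "soft-fail"),
        "no_detours": verdict(counts["DETOUR"] == 0, "hard-fail"),
        "no_errors": verdict(counts["ERROR"] == 0, "hard-fail"),
        "step_efficiency": verdict(len(steps) <= 1.3 * baseline_median_len, "soft-fail"),
        "recovery": verdict(recovered, "hard-fail"),
    }
```

Note that a plain PROGRESS step after an ERROR does not satisfy `recovery` in this sketch; only a step flagged as addressing the error does, which is what separates a healthy recovery from a silently corrupted trajectory.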

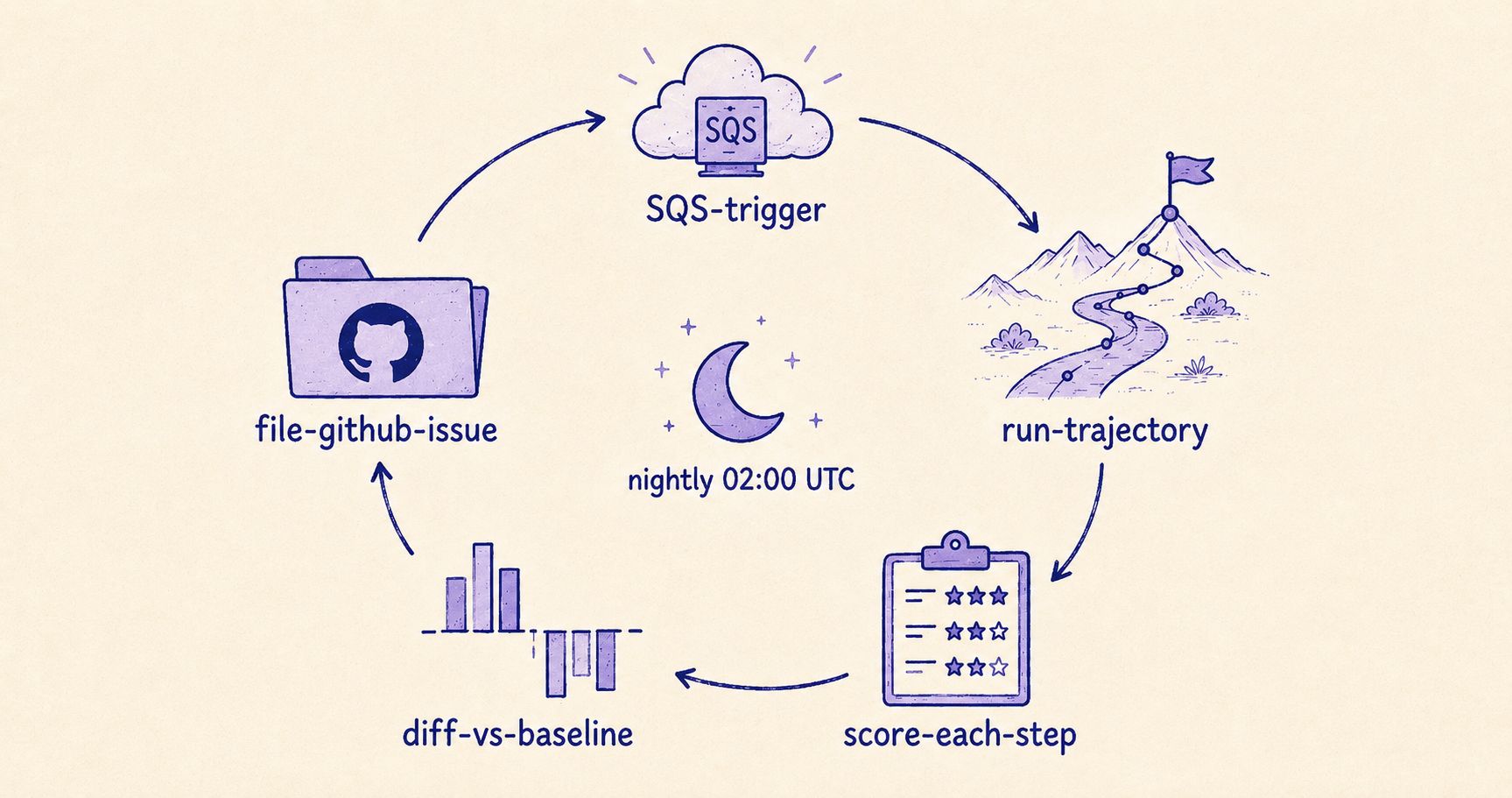

Per-rubric gates plug into the same baseline-and-gate machinery from Beta v0.3.0. The verdict for the trajectory rubrics rolls up into the same PR comment. --update-baseline works the same way. The only operational difference is that trajectory eval is too expensive for every PR — it runs nightly on the full corpus, and on demand when an engineer comments /eval-deep on a PR.

The Nightly Auto-Issue Loop

The nightly job is the part that closes the feedback loop without human intervention. Every night at 02:00 UTC, a Lambda fires that:

- Pulls the latest `main` commit's eval corpus (50 cases per workflow, 8 workflows).
- Replays each case against the current production prompts.
- Runs TrajectoryJudge on every replay.
- Diffs the per-step verdicts against the trajectory baseline.
- If any rubric hard-fails, opens a GitHub issue with the reproduction harness embedded.
- If an issue for the same workflow + rubric already exists and is open, the new finding is added as a comment.

````
# Auto-issue body (excerpted)

## Trajectory drift detected: workflow `resume_to_jobs`

**Failing rubric:** `no_redundant_calls` (hard-fail)
**Cases failing:** 7 of 50

Case 03 trajectory:
- Step 1: extract_resume(file_id=abc) → PROGRESS
- Step 2: extract_resume(file_id=abc, force_reparse=True) → REDUNDANT (0.05)
  Judge note: agent re-ran the same parser on the same file with no new information.
- Step 3: extract_resume(file_id=abc, parser="alt") → REDUNDANT (0.10)
- Step 4: match_skills(...) → PROGRESS

**Reproduction:**
```
poetry run python -m eval.cli replay \
  --workflow=resume_to_jobs --case-id=03 --judge=trajectory
```

This issue was filed by the nightly eval loop. Acknowledge by either:
1. Fixing the regression and pushing to main (issue auto-closes on next nightly green).
2. Updating the trajectory baseline if the regression is intentional:
   `poetry run python -m eval.cli baseline update --workflow=resume_to_jobs --judge=trajectory`
````

The auto-issue is structured to be immediately actionable. The reproduction command is exact. The trajectory excerpt shows the smoking gun. The two acceptance paths (fix the regression or update the baseline) are spelled out. An on-call engineer can triage the issue without leaving GitHub; if the fix is straightforward they can branch from main, run the reproduction locally, and have a PR up in fifteen minutes.

The deduplication logic is important. Without it, a regression that persists for a week would file seven issues for each affected workflow and rubric, so a regression touching three workflows would produce twenty-one issues (one per workflow per night) instead of three. The dedup key is (workflow, rubric, judge_prompt_version). New occurrences of an existing dedup key append as comments to the existing issue rather than creating new issues. This keeps the issue tracker readable and lets the issue's history be a chronological record of how a regression evolved.
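The open-or-comment decision can be sketched with an in-memory stand-in for the issue tracker. Everything here is hypothetical scaffolding (`Issue`, `InMemoryTracker`, `report`); a real version would call the GitHub API. Only the shape of the dedup key is taken from the description above.

```python
from dataclasses import dataclass, field

DedupKey = tuple[str, str, str]  # (workflow, rubric, judge_prompt_version)


@dataclass
class Issue:
    title: str
    body: str
    comments: list[str] = field(default_factory=list)
    is_open: bool = True


class InMemoryTracker:
    """Hypothetical stand-in for the GitHub issue tracker."""

    def __init__(self) -> None:
        self.issues: dict[DedupKey, Issue] = {}

    def report(self, key: DedupKey, finding: str) -> str:
        existing = self.issues.get(key)
        if existing is not None and existing.is_open:
            # Same key, still open: append to the issue's history.
            existing.comments.append(finding)
            return "commented"
        # No open issue for this key: file a new one.
        # (In this sketch a closed issue under the same key is simply replaced.)
        workflow, rubric, _ = key
        title = f"Trajectory drift detected: workflow {workflow} ({rubric})"
        self.issues[key] = Issue(title, finding)
        return "opened"
```

With this shape, seven consecutive nights of the same failure yield one issue with six comments, while a failure in a different workflow or rubric still gets its own issue.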

How to Read a TrajectoryJudge Verdict

A trajectory judge verdict is denser than an output-only score. The triage skill is in reading it correctly. Three patterns to look for:

The whip. The trajectory has one or two REDUNDANT steps in the middle. The final output is fine. This usually means the agent encountered an ambiguity it didn't know how to handle and tried the same approach twice with slight variations. The fix is usually in the tool's API or the prompt's tool description — the agent isn't getting clear enough feedback the first time to know it shouldn't try again.

The detour. One step in the middle of the trajectory is categorized DETOUR, and the agent recovers in the next step. The fix is almost always a misleading tool description that suggested the wrong tool was relevant. Anthropic's tool-description guidance emphasizes specificity for exactly this reason; vague descriptions invite detours.

The cascade. An ERROR step is not followed by a recovery; it's followed by more PROGRESS steps that build on the error. The final output may even look fine, but it's load-bearing on a wrong inference. This is the verdict pattern that most deserves human attention. Cascading errors are how agents produce confidently-wrong final outputs that look correct to a human reviewer skimming.

Triage rule of thumb: whips and detours are usually one-line fixes (tighten a tool description, add a parameter to disambiguate). Cascades require deeper investigation because they suggest the agent's reasoning is failing in a structured way that will recur on similar inputs.
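As a rough first pass, the three patterns can be named mechanically from the category sequence before a human looks at the trace. This sketch simplifies the descriptions above: it treats any unrecovered ERROR as a cascade and any DETOUR as a detour, and it takes `recovered_errors` as a hypothetical input (the indices of ERROR steps the judge says were explicitly addressed). `classify_pattern` is an illustrative name, not part of our tooling.

```python
def classify_pattern(categories: list[str], recovered_errors: set[int]) -> str:
    """Name the triage pattern for one trajectory.

    categories: per-step category in order (index 0 is step 1).
    recovered_errors: indices of ERROR steps that were explicitly addressed
        by a later step (hypothetical input derived from the judge).
    """
    # Check for a cascade first: it is the pattern that most deserves attention.
    for i, category in enumerate(categories):
        if category == "ERROR" and i not in recovered_errors:
            return "cascade"
    if "DETOUR" in categories:
        return "detour"
    if 1 <= categories.count("REDUNDANT") <= 2:
        return "whip"
    return "unclassified"
```

Checking for the cascade before the cheaper patterns means a trajectory that contains both a whip and an unrecovered error is routed to the deeper investigation.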

Open Problem: Judge-of-Judges

The trajectory judge is itself an LLM, which means it can be wrong. It can mis-categorize a step. It can be biased toward longer trajectories (verbose explanations look like more PROGRESS). It can have systematic blind spots that we don't notice because we're using it to find blind spots.

The LLM-as-judge literature calls this judge calibration: how do you know your judge is accurate? The straightforward answer is to validate the judge against human-labeled trajectories: sample 200 cases, label them by hand, and measure the judge's agreement rate. We do this quarterly. Our current trajectory judge matches the human labelers' category about 84% of the time, and its numeric score falls within 0.1 of the human score 91% of the time.
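The two agreement figures are simple to compute once judge and human labels are paired up. The sketch below assumes the unit of comparison is one labeled step and that both lists are in the same order; `Label` and `judge_agreement` are hypothetical names.

```python
from typing import NamedTuple


class Label(NamedTuple):
    category: str
    score: float


def judge_agreement(
    judge: list[Label], human: list[Label], tolerance: float = 0.1
) -> tuple[float, float]:
    """Return (category agreement rate, share of scores within tolerance)."""
    if len(judge) != len(human) or not judge:
        raise ValueError("need two non-empty label lists of equal length")
    same_category = sum(j.category == h.category for j, h in zip(judge, human))
    # Small epsilon so a gap of exactly `tolerance` is not lost to float rounding.
    close_score = sum(
        abs(j.score - h.score) <= tolerance + 1e-9 for j, h in zip(judge, human)
    )
    n = len(judge)
    return same_category / n, close_score / n
```

Tracking the two rates separately matters because the gate keys off the category; a judge can be numerically close to the human score and still land in the wrong category.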

The harder question is what to do when the judge model itself improves. The Anthropic and OpenAI model families both improve their reasoning capability roughly every six months. A trajectory judge built on Haiku 4.5 will be different from one built on Haiku 5.0 even if the prompt is identical. The PRM800K paper hits the same problem from the training side; their solution is to retrain on fresh human labels every time the underlying model changes meaningfully.

We don't have a fully principled solution. The current discipline is: pin the judge model snapshot, version the prompt, label 200 cases against any new snapshot before adopting it, and accept that calibration drift is one of the costs of running an evolving judge. This is likely the largest open problem in our eval stack today, and it's the reason we tag every EvaluationEvent with both judge.model and judge.prompt_version — so a year from now we can audit which snapshot of which judge produced which verdict, and re-evaluate if needed.

Five Lessons from Adding Trajectory Eval

1. Trajectory eval and output eval answer different questions. Output eval asks "is the answer correct?" Trajectory eval asks "did the agent reason well?" Both matter. Don't try to collapse them into one number; they will outvote each other in confusing ways.

2. Categorical step verdicts beat continuous step scores. Judges are better at PROGRESS / REDUNDANT / DETOUR / ERROR than at "score this step from 0 to 1." Use categories for the gate; let the judge produce numeric soft signals within each category if you want them.

3. Recovery is its own rubric. The pattern of "made an error, recovered" versus "made an error, didn't recover" is the most predictive signal for how the agent will behave on harder inputs. Make recovery a first-class rubric.

4. Auto-issue beats auto-alert. A Slack ping that says "trajectory eval failed" is a momentary distraction. A GitHub issue with a reproduction command is a piece of work that survives the next standup. The action surface matters as much as the detection.

5. Calibrate the judge quarterly. A trajectory judge is a measurement instrument. Instruments need calibration. Sample 200 cases, label them by hand, measure agreement. The cost of doing this is real; the cost of not doing it is subtle drift that erodes trust in the gate.

External Pointers Worth Reading

Lightman et al., "Let's Verify Step by Step" (OpenAI, 2023) — the foundational paper on process reward models. Worth reading even if you're not training a PRM, because the framing of "step-level supervision generalizes better than outcome-level" is the right way to think about why trajectory eval matters.

Anthropic, Constitutional AI: Harmlessness from AI Feedback — the patterns for self-correction and step-level critique that inform much of what we do in TrajectoryJudge. The recursive structure of "evaluate the evaluation" is also addressed.

Liu et al., AgentBench (2023) — the canonical multi-step trajectory benchmark. Useful for vocabulary even if you're not running it directly. The eight task categories give a structured way to think about what kinds of trajectories your agents are emitting.

Zheng et al., "Judging LLM-as-a-Judge" (2023) — required reading on judge calibration and bias. The position-bias and verbosity-bias findings are directly applicable to TrajectoryJudge prompts.

OpenAI Cookbook entries on trajectory evaluation — practical patterns for structuring multi-step eval, including the JSON output schema we adopted.

The agent that arrives at the right answer the wrong way is a regression you cannot afford to ship. Trajectory evaluation is how you see the path; the auto-issue loop is how you make it the system's responsibility to fix it.

What's Next

Beta v0.4.0 closes the evaluation arc. The next post — Beta v0.5.0 — pivots to the user-facing side of the platform: the Workflow Builder. When users get to fork and remix workflows, every prompt-injection paper you've ever read becomes a production concern. The two-layer prompt architecture we settled on is what lets us give users that flexibility without giving them the system prompt. The next post explains how, why "Layer B is data, never instruction" became a hard rule, and what we still don't fully solve.