For most of the goal-lane agent's life, the prompt that ran it was a single 253-line YAML — about 7,800 characters of system text — that asked one LLM to be ten different things at once. It defined the agent's identity, declared its locale rules, enumerated its tool roster, explained the workflow's phase sequencing, formalised three different conventions for reference IDs (yes, three — all subtly contradictory), described what made an artifact acceptable, what made content unsafe, when to refuse, and how to make decisions. It worked. It also drifted, contradicted itself, and turned every edit into a roll of the dice.

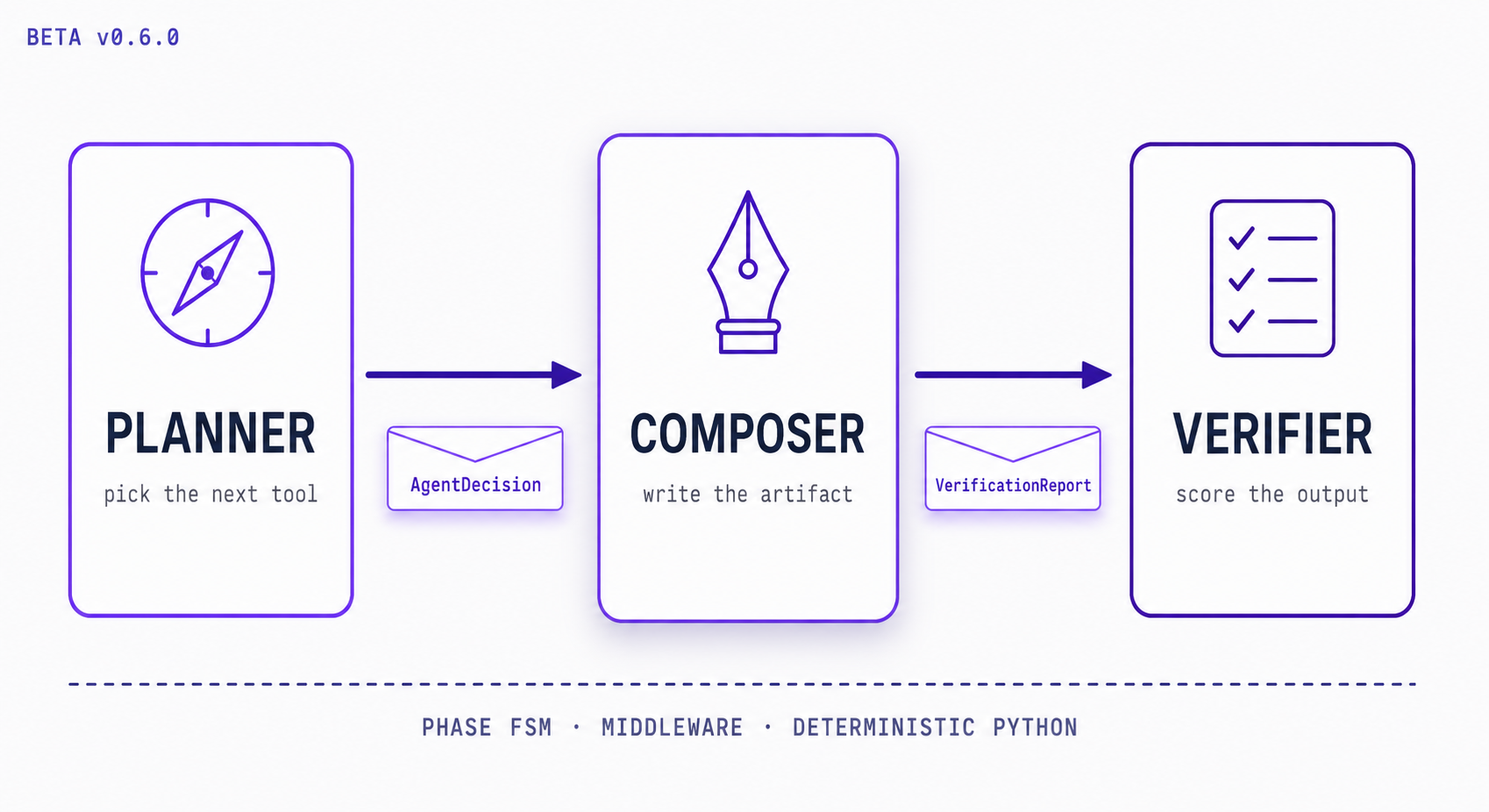

The refactor we shipped this month replaces that monolith with a chain. There are now three LLMs in the loop: a Planner that picks the next tool, a Composer that writes the artifact when a composition phase calls for it, and a Verifier that scores the output against the spec. Between them sit small pieces of deterministic Python — middleware that says "no, that tool isn't legal in this phase," a validator that says "no, that artifact isn't ready for S3" — and a typed envelope that says, at each turn, exactly what the agent decided to do next.

This post is about the chain. Not about the multi-PR journey to get there — that lives in the engineering docs — but about the shape itself: what each stage does, what flows between them, and why the load-bearing part is not any individual prompt but the contract between the links. If you're building an LLM application and you've started to feel your system prompt growing past 100 lines, this is what we wish someone had told us at line 50.

The Monolith Was the Bug

The single-prompt agent had a particular failure mode that took us a while to name. It wasn't that the agent was wrong — it was right most of the time. It was that the agent was inconsistently right, and the inconsistencies tracked with which corner of the prompt the model had decided to take seriously that day. A change that helped the agent honour the artifact-quality rules would quietly cause it to forget the phase order. A clarification of the refusal pattern would loosen its tool-selection discipline. The prompt had become a system of springs: push down on any one of them and another popped up.

When we sat down to audit, we found three contradictions in the rules for the same identifier convention, in different blocks of the same file. We found a section header, Artifact Quality Rules (CRITICAL), repeated word-for-word in two places, with subtly different rules under each copy. We found the phase ordering written as prose: "First do A, then do B, but if X has happened, consider C." That's a finite-state machine encoded as instructions to a probabilistic model. The model was guessing the next state from natural language, every turn, every time. The wonder was that it ever guessed right.

The wider problem is what the literature on prompt design has been quietly saying for a while: a long, ambitious system prompt is a single point of failure with no test surface. You cannot unit-test a paragraph of prose. You cannot regression-gate "be helpful and decisive but also cautious." The only way to know whether a one-line change to a 253-line prompt has broken something is to run the whole thing end to end and pray your eval suite catches it. We had an eval suite. It didn't.

The Chain We Settled On

The architecture we settled on is three LLMs in series, each with one job, each invoked from its own short prompt. The job split lines up with the three things our agent actually has to do at every turn: decide what to do next, do it, and check that the result is good.

Planner. Given the conversation so far, the current phase of the workflow, and which slots have been filled, choose the next tool to call. The Planner's output is structured — a tool call, with arguments, from a phase-legal set. It does not write artifacts. It does not score outputs. It picks the next move.

Composer. Conditionally invoked when the current phase is a composition phase. Given the artifact specification, the relevant inputs, and a small set of composer directives (minimum length, required sections, forbidden patterns), produce the artifact content. The Composer does not call other tools. It writes.

Verifier. Given the produced artifact and the spec's quality criteria, return a structured verification report — a score from one to ten, and a short feedback string explaining what's wrong if anything is. The Verifier is bound to its output schema via the LLM provider's structured-output mode; the result is a Pydantic model, not a JSON blob hand-parsed out of a markdown fence.
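To make the Verifier's contract concrete, here is a minimal sketch of what such a report could look like. It uses a stdlib dataclass as a stand-in for the Pydantic model so that it runs without dependencies. The field names `score` and `feedback`, the `passes` helper, and its threshold are assumptions for illustration, not the real schema.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VerificationReport:
    """Stand-in for the Verifier's structured output (field names assumed)."""

    score: int          # 1..10, as described in the text
    feedback: str = ""  # short explanation; empty when nothing is wrong

    def __post_init__(self) -> None:
        # Reject malformed scores at construction time, the way a
        # schema-bound model would, so they never reach routing logic.
        if isinstance(self.score, bool) or not isinstance(self.score, int):
            raise TypeError("score must be an int")
        if not 1 <= self.score <= 10:
            raise ValueError(f"score must be in 1..10, got {self.score}")

    def passes(self, threshold: int = 7) -> bool:
        # The threshold is a hypothetical default, not a value from the spec.
        return self.score >= threshold
```

The point of the shape is that an out-of-range or non-numeric score fails loudly where the report is built, rather than surfacing later as a routing surprise.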

```
# The chain at a glance

START → reason → [planner | composer]
                      │
                      ├── call_tool → verify → reason   (retry loop, max 3)
                      │                  └── finish → END
                      └── request_input ↺ resume
```

The reason node is the runtime selector. It looks at the current phase and dispatches to either the Planner or the Composer — never both in the same turn. If the phase is a composition phase and the Planner has just decided to call store_artifact, the Composer is invoked to produce the content before the tool dispatch. Otherwise, the Planner's chosen tool call goes straight to dispatch. After dispatch, if the call was an artifact store, the Verifier runs on the persisted content and either accepts the result or feeds its critique back into the next Planner turn as a tool-message. The retry loop is bounded — three strikes and the agent transitions to a terminal state with a clear reason — not infinite.
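The selection rule in the paragraph above can be sketched as a small pure function. This is an illustrative reduction, not the production strategy object: the `Phase` record, its `is_composition` flag, and the `next_step` name are all hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Phase:
    """Hypothetical phase record; a real one would carry more fields."""

    id: str
    is_composition: bool


def next_step(phase: Phase, planned_tool: Optional[str]) -> str:
    """Return the next step in the turn: "planner", "composer" or "dispatch".

    planned_tool is the tool the Planner chose on this turn, or None if
    the Planner has not run yet.
    """
    if planned_tool is None:
        # Nothing has been decided yet, so the Planner goes first.
        return "planner"
    if phase.is_composition and planned_tool == "store_artifact":
        # Composition turn: produce the content before the tool dispatch.
        return "composer"
    # Planner-only turn: the chosen tool call goes straight to dispatch.
    return "dispatch"
```

Keeping the rule in one function means a Planner-only turn never pays for a Composer call, and the branch that does invoke the Composer is trivially unit-testable.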

What we did not do is route the LLMs through a graph node each. The chain is wired inside a single reasoning node, with a small strategy object dispatching to the right LLM at the right time. We considered the more visible alternative — a node per stage — and rejected it because it would have forced every workflow to pay the composition cost (a second LLM call) on every turn, including turns that don't write anything. The conditional dispatch costs nothing on Planner-only turns and adds exactly one LLM call on composition turns. Bedrock's prefix cache absorbs most of the system-prompt token cost on the second call. The bill is lower than the topology change made it look.

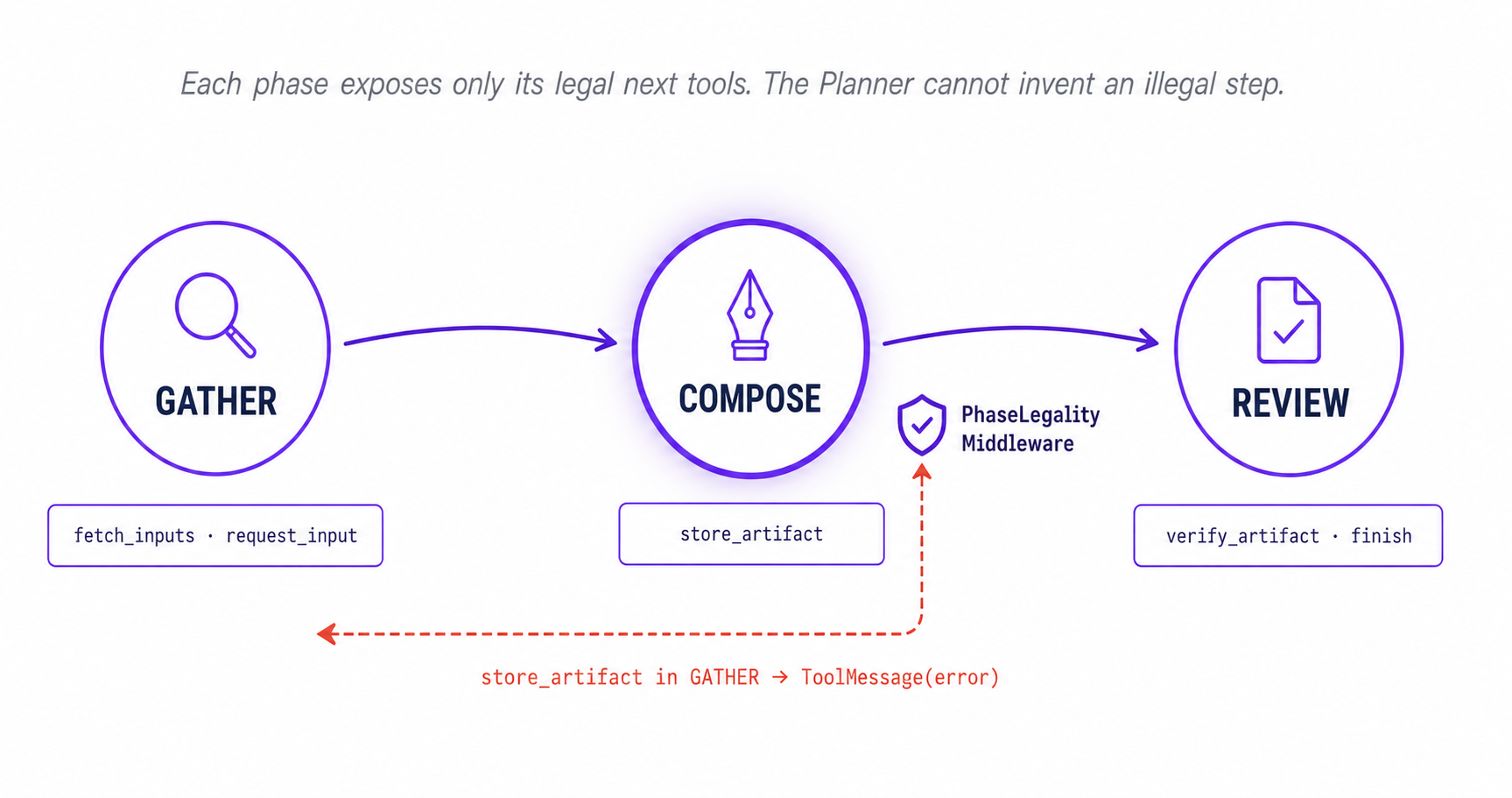

The Substrate: Phase FSM, Not Prose Persuasion

The chain only works because the things that happen between the hops are not negotiable. The Planner is allowed to pick the next tool, but it is not allowed to pick any tool. Each phase of the workflow has a small, explicit set of legal next tools, and the Planner is bound to that set via the tool-choice mechanism on the LLM call. A workflow phase that says "right now you may either call fetch_inputs or request_input_from_user" presents the Planner with exactly those two tools. The LLM cannot decide to call store_artifact just because that's how the prompt seems to be trending.
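As a rough illustration of the idea, the per-phase legal set can live in a plain table, and the tool list handed to the Planner can be filtered from it. The phase ids (`gather`, `compose`) and the dict-shaped tool definitions are assumptions; only the tool names come from the text.

```python
from typing import Dict, FrozenSet, List

# Hypothetical phase table. Phase ids are made up for the example.
VALID_NEXT_TOOLS: Dict[str, FrozenSet[str]] = {
    "gather": frozenset({"fetch_inputs", "request_input_from_user"}),
    "compose": frozenset({"store_artifact"}),
}

# Stand-in tool definitions; real ones would include schemas and descriptions.
ALL_TOOLS: List[dict] = [
    {"name": "fetch_inputs"},
    {"name": "request_input_from_user"},
    {"name": "store_artifact"},
]


def tools_for_phase(phase_id: str, all_tools: List[dict]) -> List[dict]:
    """Return only the tool definitions the Planner may see in this phase."""
    legal = VALID_NEXT_TOOLS[phase_id]
    return [tool for tool in all_tools if tool["name"] in legal]
```

In the `gather` phase the Planner would be offered only `fetch_inputs` and `request_input_from_user`, so `store_artifact` is not something it can even name.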

Even with tool-choice binding, a sufficiently confused Planner can still misroute — for example by passing arguments that don't match the phase's intent. So the binding is backed by a middleware that re-validates legality at the dispatch site:

```python
# ToolCall, Phase and ToolMessage are the project's own types (definitions omitted).
class PhaseLegalityMiddleware:
    """Reject tool calls the current phase does not allow.

    Runs before the tool dispatcher. A rejection produces a tool-message
    that the reasoning loop sees on its next turn, prompting a retry
    inside the legal set.
    """

    def before_dispatch(self, call: ToolCall, phase: Phase) -> ToolMessage | None:
        if call.name not in phase.valid_next_tools:
            return ToolMessage.error(
                f"Tool {call.name!r} is not legal in phase {phase.id!r}. "
                f"Legal tools here: {sorted(phase.valid_next_tools)}."
            )
        return None
```

The middleware is twenty lines of Python. It does what the prompt used to do, only it does it deterministically, terminally, and with a clear error envelope. When the older prose-mode prompt said "always call fetch_inputs first," it was making a request the model could ignore in 1-in-200 turns. When the middleware says it, it makes a guarantee the dispatcher enforces in 200-in-200 turns. The same logic applies to the artifact-content shape, the per-phase iteration budget, and the high-risk-tool approval gate. Each of these used to be a paragraph in the prompt. Each is now a small piece of typed Python.

This is the part of the chain that took us longest to convince ourselves of, and that we now think is the most important takeaway. The substrate between hops is the chain's load-bearing structure. The prompts can be short because the substrate is doing the work the prompts used to pretend to do.

The Contract Between Links: AgentDecision

Two LLM calls in sequence is not yet a chain. A chain has a contract — a defined object that flows between the stages, that downstream stages can read without having to re-interpret upstream output. For us, that object is the AgentDecision envelope. Every time the reasoning node runs, the output is normalised into one:

```python
from typing import Literal

from pydantic import BaseModel


class AgentDecision(BaseModel):
    next_action: Literal[
        "call_tool",
        "ask_user",
        "final_answer",
        "human_review",
        "fail_safe",
    ]
    tool_name: str | None = None
    tool_args: dict | None = None
    confidence: float  # 0..1
    risk_level: Literal["low", "medium", "high"]
    success_criteria_met: bool
```

For Planner turns produced by a recent-vintage spec, the LLM is bound to AgentDecision directly via structured output and returns one natively. For legacy specs that haven't been migrated yet, a small infer_decision() helper synthesises an envelope from the raw AIMessage, mapping the model's tool calls to next_action="call_tool" with conservative defaults for the other fields. Either way, the graph's routing logic reads state.last_decision.next_action. It does not call hasattr(msg, "tool_calls"). It does not parse markdown. It reads a typed field on a typed object.
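A minimal sketch of the synthesis step, under stated assumptions: the envelope is modelled as a stdlib dataclass rather than the Pydantic class, the raw reply is reduced to a list of `{"name", "args"}` dicts plus a text string, and the default values are guesses at what "conservative" could mean. Only the tool-call mapping comes from the text; the `final_answer` and `fail_safe` branches are hypothetical.

```python
from dataclasses import dataclass
from typing import Any, Dict, List, Optional


@dataclass(frozen=True)
class Decision:
    """Dataclass stand-in for the AgentDecision envelope."""

    next_action: str
    tool_name: Optional[str] = None
    tool_args: Optional[Dict[str, Any]] = None
    confidence: float = 0.5          # assumed conservative default
    risk_level: str = "medium"       # assumed conservative default
    success_criteria_met: bool = False


def infer_decision(tool_calls: List[Dict[str, Any]], text: str) -> Decision:
    """Synthesise an envelope from the parts of a raw model reply."""
    if tool_calls:
        # Documented mapping: a tool call becomes next_action="call_tool".
        first = tool_calls[0]
        return Decision("call_tool", first["name"], dict(first.get("args") or {}))
    if text.strip():
        # Hypothetical: plain text with no tool call is treated as an answer.
        return Decision("final_answer")
    # Hypothetical: an empty reply is routed to the safest terminal action.
    return Decision("fail_safe", risk_level="high")
```

Whatever the real defaults are, the useful property is that the message shape is interpreted in exactly one place, and everything downstream reads `next_action`.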

This sounds like a small refinement and it is — until the day comes that a model upgrade changes the shape of AIMessage.tool_calls slightly, and every node in your graph that was reaching through that shape silently misroutes. We've had that day. The defensive AIMessage path still exists as a fallback for the case where the envelope synthesis itself fails, but the primary path is the typed object. If we had to redo the refactor and could keep only one PR, this is the one we'd keep.

The confidence and risk_level fields turned out to be more useful than we expected. The risk level feeds the approval-gate middleware that pauses for a human reviewer before any high-risk tool dispatch. The confidence is logged on every turn as a span attribute and is now part of the trajectory rubric the judge from Beta v0.4 evaluates. A run where the agent's confidence stays at 0.4 for nine straight turns is a run that wants supervision; we didn't know that until we had the field.
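Both uses of the fields reduce to very small functions. The sketch below is illustrative only: the function names and the 0.5 threshold are assumptions, and the real approval gate presumably consults more than the risk level alone.

```python
from typing import Iterable


def requires_approval(risk_level: str) -> bool:
    """Pause for a human reviewer before any high-risk tool dispatch."""
    return risk_level == "high"


def longest_low_confidence_streak(
    confidences: Iterable[float], threshold: float = 0.5
) -> int:
    """Length of the longest run of consecutive turns below the threshold."""
    longest = current = 0
    for value in confidences:
        # Extend the run on a low-confidence turn, reset it otherwise.
        current = current + 1 if value < threshold else 0
        longest = max(longest, current)
    return longest
```

A run logged at 0.4 for nine straight turns gives a streak of 9, which is the kind of number a trajectory rubric can threshold on.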

The Gate Before Persistence

The chain's most quietly catastrophic failure, before the refactor, was the stub artifact. A run would proceed plausibly through several Planner turns, arrive at the composition phase, produce a placeholder ("[TODO: write cover letter body here]"), call store_artifact, and persist that string into S3 with a signed URL. The agent would then move on, satisfied that the artifact phase was complete. The user would later open the result and find a literal "[TODO]" in their cover letter.

The problem was structural. Quality criteria were declared in the workflow spec but enforced only by an end-of-run check, after persistence. The bucket has no rejected tier. Once the stub was in S3 it was, for all production purposes, the artifact. We added a validator that fires inside the store-artifact tool — after argument validation, after reference resolution, and immediately before the S3 put:

```python
# ComposerDirectives, QAPolicy, ValidationResult, has_placeholders and violates
# are the project's own names (definitions omitted).
class ArtifactQualityValidator:
    """Pre-store validator. Three input sources, priority order:

    (1) placeholder allowlist (2) per-phase composer directives
    (3) spec-level qa_policy.goal_criteria
    """

    def validate(
        self,
        content: str,
        directives: ComposerDirectives,
        qa_policy: QAPolicy,
    ) -> ValidationResult:
        if has_placeholders(content):
            return ValidationResult.reject(reason="placeholder")
        if violates(directives, content):
            return ValidationResult.reject(reason="directive")
        if not qa_policy.passes(content):
            return ValidationResult.reject(reason="qa_policy")
        return ValidationResult.accept()
```

A rejection is not a soft warning. It surfaces as a tool error, the reasoning loop sees it, the Planner gets another turn to recompose. Three rejections in the same workflow trigger a terminal state with an explicit reason, store_artifact_loop_abort, that ends the run cleanly rather than burning more tokens chasing an artifact the model evidently cannot produce. The eval set tracks how often we hit the loop-abort path; the goal is "almost never," and the rare cases that do hit it are exactly the workflows where the spec needs revisiting, not the agent.
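The bounded retry can be sketched as a loop with a fixed budget. This collapses the real flow (tool error, reasoning loop, Planner turn, recomposition) into two callables, so treat it as a model of the control flow rather than the implementation; `store_with_retries`, `compose`, and `validate` are hypothetical names.

```python
from typing import Callable, Optional, Tuple

MAX_REJECTIONS = 3  # "three rejections", per the text


def store_with_retries(
    compose: Callable[[Optional[str]], str],
    validate: Callable[[str], Optional[str]],
) -> Tuple[str, Optional[str]]:
    """Compose until the validator accepts or the budget runs out.

    compose receives the previous rejection reason (None on the first try).
    validate returns None to accept, or a rejection reason.
    Returns (status, content); status is "stored" or
    "store_artifact_loop_abort".
    """
    reason: Optional[str] = None
    for _ in range(MAX_REJECTIONS):
        content = compose(reason)
        reason = validate(content)
        if reason is None:
            return "stored", content
    # Budget exhausted: end the run with an explicit terminal reason.
    return "store_artifact_loop_abort", None
```

Feeding the rejection reason back into `compose` mirrors the way the critique reaches the next turn as a tool message, and the explicit abort status is what the eval set can count.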

The interesting structural consequence of adding this validator is that the spec's qa_policy block, which had previously been read only by end-to-end test helpers, is now production load-bearing. Quality criteria stopped being aspirational. If you write them in the spec, they run on every artifact. If you don't write them, the placeholder and directive checks still fire. The spec started doing what it always claimed to do.
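For a sense of how small the always-on checks can be, here is a sketch of a placeholder detector and a minimum-length directive. The regex and the set of markers it recognises are assumptions, as is the `check_artifact` wrapper; the real placeholder list is presumably broader.

```python
import re
from typing import Optional

# Hypothetical placeholder patterns: bracketed TODO/TBD/PLACEHOLDER markers.
_PLACEHOLDER = re.compile(r"\[\s*(TODO|TBD|PLACEHOLDER)\b[^\]]*\]", re.IGNORECASE)


def has_placeholders(content: str) -> bool:
    """True if the content still contains a bracketed stub marker."""
    return _PLACEHOLDER.search(content) is not None


def check_artifact(content: str, min_chars: int) -> Optional[str]:
    """Return a rejection reason, or None if both baseline checks pass."""
    if has_placeholders(content):
        return "placeholder"
    if len(content) < min_chars:
        return "directive"
    return None
```

The placeholder check runs first, matching the priority order in the validator's docstring, so a long artifact that still contains a stub is rejected as a stub.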

The Eval Set Is the Regression Gate

A chain you can't regression-test is a chain that drifts. Every prompt edit, every model-version bump, every tweak to the FSM is a potential silent regression — silent because the chain still runs, just not in the way it used to. We knew this from the prompt-monolith days, where the only way to find out a one-line edit had broken something was to ship it and watch the use-case dashboards a week later.

Per the discipline we set down in Beta v0.3, every workflow spec now ships an eval_set directory alongside it — a small, frozen set of baseline cases with expected behavioural shape. The eval runner exercises the entire chain end to end for each case and grades the result against the per-case criteria. The CI workflow runs the eval set on every PR; a regression blocks merge. Humans review the words in the prompt files; the eval-set CI reviews the behaviour they produce. Both gates have to pass.

The eval cases that catch the most regressions are not the happy-path runs. They're the boundary cases — "what does the chain do when the user provides only one of two required slots?", "what happens when the same tool is called twice in a row?", "what happens when the artifact is one character below the directive's min_chars?". These are the cases the prompt-monolith era treated as folklore — well-known to the engineer who wrote the prompt, hidden from everyone else. They're now frozen test cases that the next refactor cannot break without somebody noticing.
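A frozen boundary case might look something like the sketch below. The `EvalCase` shape, the `run_eval` runner, and the `MIN_CHARS` value are all hypothetical, and `length_gate` is a toy stand-in for the full chain that exercises only the length check.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass(frozen=True)
class EvalCase:
    """One frozen case: an input and the behaviour the chain must show."""

    name: str
    artifact: str
    expected_outcome: str  # "accept" or "reject"


def run_eval(cases: List[EvalCase], chain: Callable[[str], str]) -> List[str]:
    """Return the names of cases whose outcome differs from the baseline."""
    return [c.name for c in cases if chain(c.artifact) != c.expected_outcome]


MIN_CHARS = 200  # hypothetical directive value

CASES = [
    EvalCase("exactly_min_chars", "x" * MIN_CHARS, "accept"),
    EvalCase("one_char_short", "x" * (MIN_CHARS - 1), "reject"),
]


def length_gate(artifact: str) -> str:
    # Toy stand-in for the chain: applies only the min_chars check.
    return "accept" if len(artifact) >= MIN_CHARS else "reject"
```

An empty list from `run_eval` is a pass; any name in the list is a regression that blocks the merge. Pairing each boundary with its neighbour (exactly at the limit, one below it) is what catches an off-by-one in either direction.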

| Discipline | Prompt monolith | Three-stage chain |
|---|---|---|
| Responsibility per LLM | Ten concerns, one prompt | One concern per stage |
| Phase ordering | Prose: "first do A, then B…" | FSM in Python; illegal calls rejected |
| Routing input | hasattr(msg, "tool_calls") | state.last_decision.next_action |
| Verifier contract | JSON inside markdown fence, hand-parsed | VerificationReport Pydantic, structured output |
| Quality criteria | Declared in spec, enforced post-store | Declared in spec, enforced pre-store |
| Regression gate | Human review of prompt diffs | Human review + per-spec eval-set CI |

Five Lessons From Splitting a Monolith

1. One LLM per testable responsibility. If a single prompt is being asked to do more than one thing well, it is being asked to do nothing reliably. The split into Planner / Composer / Verifier was not aesthetic. Each stage now has a prompt small enough to fit on a screen, a contract small enough to unit-test, and a failure mode small enough to diagnose. The chain costs three LLM calls in the composition path; it saves multiples of that in incident-response time.

2. The substrate between hops is the chain's load-bearing structure. Make the things-that-must-be-true Python. Phase ordering is Python. Tool legality is Python. Artifact gating is Python. The prompts only have to handle the things Python cannot — the open-ended language work. When in doubt, push the rule down a layer; the prompt gets shorter and the system gets stricter.

3. Typed envelopes beat hasattr parsing. Your routing logic should not depend on what the model happened to put in tool_calls this turn. Define an AgentDecision (or your equivalent), synthesise it once per turn, and let every node downstream read typed fields. The day the underlying message shape changes (and that day is coming), your graph will keep routing correctly, because it never depended on the shape in the first place.

4. Gate before persistence, not after. Object storage does not have a "rejected" tier. Once your artifact lands in S3, you own it — the URL is signed, the user can click it, the bill is paid. Validate before the put. Define what makes an artifact acceptable in the spec, enforce that definition at the tool boundary, and surface rejections as retryable tool errors. A bounded retry loop with a terminal abort is cheaper than a polished-looking placeholder in production.

5. The eval set is the only thing standing between "we shipped a refactor" and "we shipped a regression you'll find at 3am." Write the eval cases before the refactor, not after. The cases that catch the most regressions are the boundary ones — the off-by-one slot, the duplicate tool call, the artifact one character short of the limit. Freeze them. Run them on every PR. Block merge on regression. The discipline is unglamorous and it pays for itself the first time it catches a regression that would otherwise have shipped.

A chain is not "two LLM calls" — it is a typed contract between stages, with deterministic control flow between them. Get the contract right and the prompts shrink. Get the substrate right and the chain stays a chain.

External Pointers Worth Reading

Anthropic, "Building effective agents" (2024) — the most useful single piece of writing on agent design we've come across. Their distinction between workflows and agents, and their walk-through of the prompt-chaining, routing, and evaluator-optimiser patterns, frames everything in this post.

Schick et al., "Toolformer: Language Models Can Teach Themselves to Use Tools" (2023) — the foundational paper for thinking about tool calls as a structured output rather than as parsed text. Still the right starting point if you're new to the literature.

LangChain, LangGraph low-level concepts — the conditional-edges and interrupts machinery our reasoning node sits on top of. Their concept docs are worth reading even if you're not on LangGraph; the vocabulary travels.

Anthropic, Tool use with Claude — the platform-level documentation for the tool-choice binding and structured-output patterns that the Planner and Verifier rely on. The tool_choice mechanism is what makes the phase-FSM substrate enforceable in practice.

Simon Willison, prompt engineering essays — the most readable continuous commentary on what is and isn't working in LLM-app design right now. If you read nothing else from this list, read this.

What's Next

The chain is the structural piece. What extends from here is mostly content: more workflow specs migrating to the Planner / Composer split, more per-domain risk-tier overrides for the approval gate, more eval-set cases for the boundary conditions we haven't named yet. The infrastructure is finished; the catalogue is what scales.

Beta v0.7 covers the file-handling story we'd originally planned for this slot — multi-format extraction, ZIP-bomb defenses, and the doc-parser MCP migration to framework v1. Less cinematic than this one, but the work behind every input the agent ever sees. We'll see you there.